27 methods × 8 windows variant-first benchmark (248 ready problems) #1

Merged


- add manifest-level benchmark weighting support
- expand profile coverage across multiple method manifests
- refresh aggregates, docs, and paper assets
- update HDL Graph SLAM short-sequence variants

Co-authored-by: Copilot <223556219+Copilot@users.noreply.github.com>

- add CLINS fast/dense evaluator profiles
- add a public ROS1 HDL-400 synth-time CLINS manifest
- refresh aggregates, docs, paper assets, and handoff notes

Co-authored-by: Copilot <223556219+Copilot@users.noreply.github.com>

- update README benchmark scope and selector references
- clarify public ROS1 HDL-400 synth-time versus reference/native-time windows
- refresh paper outline, claims, and table checklist counts

Co-authored-by: Copilot <223556219+Copilot@users.noreply.github.com>

- mark the full-sequence HDL Graph SLAM policy as resolved
- record 0009_full as default-only and 0061_full as skipped
- refresh handoff notes to reflect the current draft PR state

Co-authored-by: Copilot <223556219+Copilot@users.noreply.github.com>

- refresh PLAN.md to the current draft PR state
- record the current branch, CI, counts, and commit stack
- add a deeper Claude handoff section with resolved and unresolved work

Co-authored-by: Copilot <223556219+Copilot@users.noreply.github.com>

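Task specification for Codex. Requests the creation of docs/native_time_provenance.md, which should spell out the distinction between synthetic-time and native-time, the source artifact conditions required for exact reproduction, and the unblocking checklist.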

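Document in docs/native_time_provenance.md the difference between the two timing regimes in the HDL-400 benchmark (native per-point time and synthetic time), the conditions required for exact reproduction, the reason for the blocked state, and the unblocking checklist.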

Task 2: Expand 1-manifest methods to all dataset windows (~84 new manifests)
Task 3: Full ctest pass + implementation quality audit (docs/implementation_notes.md)

- docs/implementation_notes.md: LOC, test coverage, fidelity for all 27 methods
- Full ctest verified: 38/38 passed (49.85s)
- All Python scripts pass syntax check

- Add expand_manifests.py to auto-generate missing window manifests
- 162 new manifests created, all 330 validated as correct JSON
- Every LiDAR method now covers Istanbul/HDL-400/MCD/KITTI windows
- Handles KITTI-profile args, no-gt-seed, reference_role correctly
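The JSON validation step mentioned above could be reproduced with a check along these lines. This is a minimal sketch, not the code in expand_manifests.py: the function name `validate_manifests` is hypothetical, and the default glob pattern is taken from the `experiments/*_matrix.json` layout listed in the PR description. It only confirms that each file parses as JSON; it does not check any manifest schema.

```python
import json
from pathlib import Path


def validate_manifests(root, pattern="*_matrix.json"):
    """Return (valid, invalid) manifest paths found directly under root.

    Hypothetical helper: a file counts as valid if it parses as JSON.
    No manifest schema is checked.
    """
    valid, invalid = [], []
    for path in sorted(Path(root).glob(pattern)):
        try:
            with path.open(encoding="utf-8") as fh:
                json.load(fh)
        except (json.JSONDecodeError, UnicodeDecodeError) as exc:
            # Keep the parser message so the broken file is easy to locate.
            invalid.append((path, str(exc)))
        else:
            valid.append(path)
    return valid, invalid


if __name__ == "__main__":
    ok, bad = validate_manifests("experiments")
    print(f"{len(ok)} valid, {len(bad)} invalid")
    for path, reason in bad:
        print(f"  {path}: {reason}")
```

Run from the repository root, this prints one count line plus one line per file that fails to parse. Manifests in subdirectories such as experiments/pending/ would need a second call or a recursive pattern.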

- 18 MCD manifests (6 methods × 3 windows)
- 16 KITTI 200f manifests (8 methods × 2 windows)
- 40 HDL-400 manifests (20 methods × 2 windows)
- Move 80 data-less manifests to experiments/pending/
- Full docs refresh: 248 ready + 1 blocked + 1 skipped


Summary

27 LiDAR localization methods compared across 4 public dataset families with variant-first benchmarking.

--no-gt-seed)

Key Results

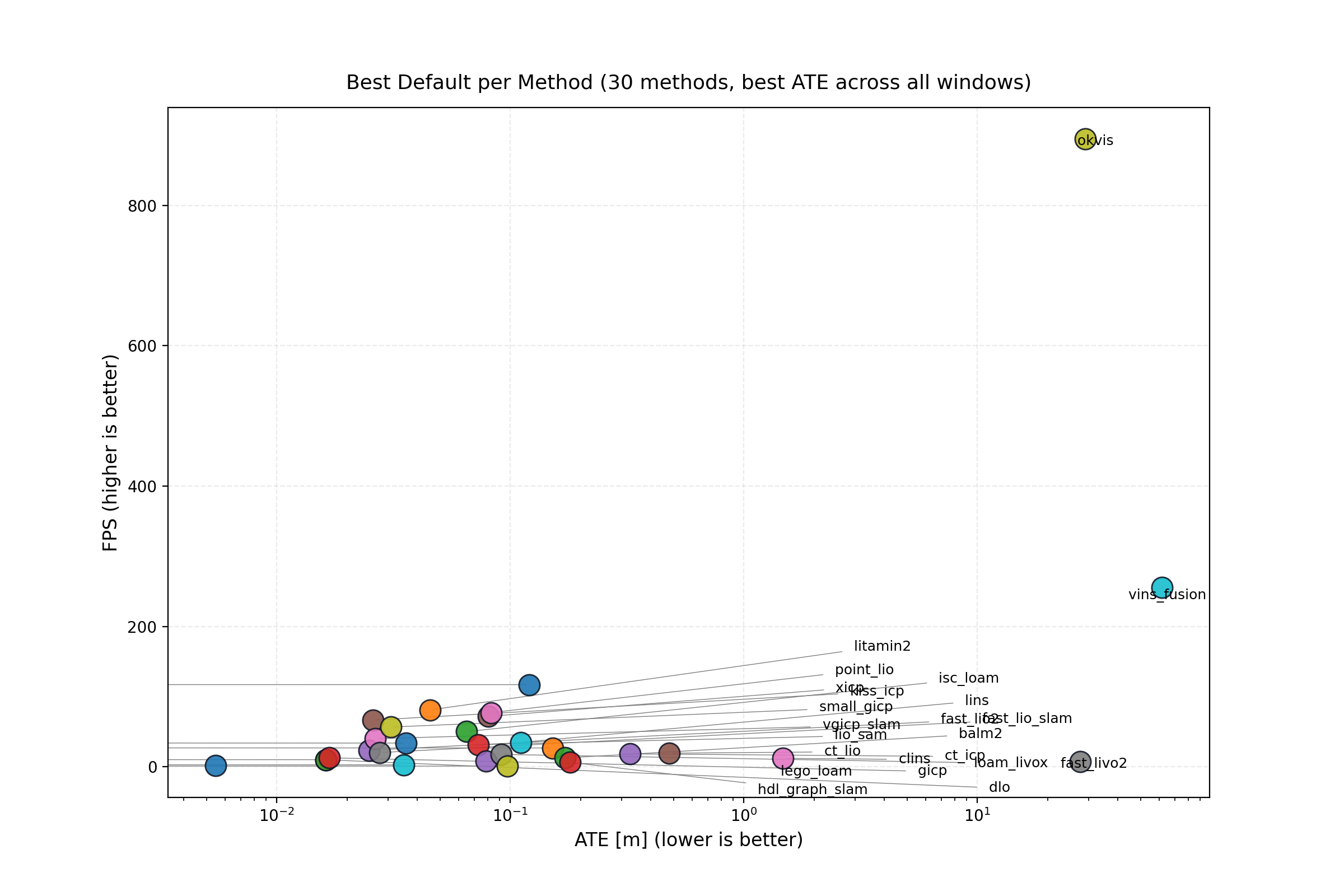

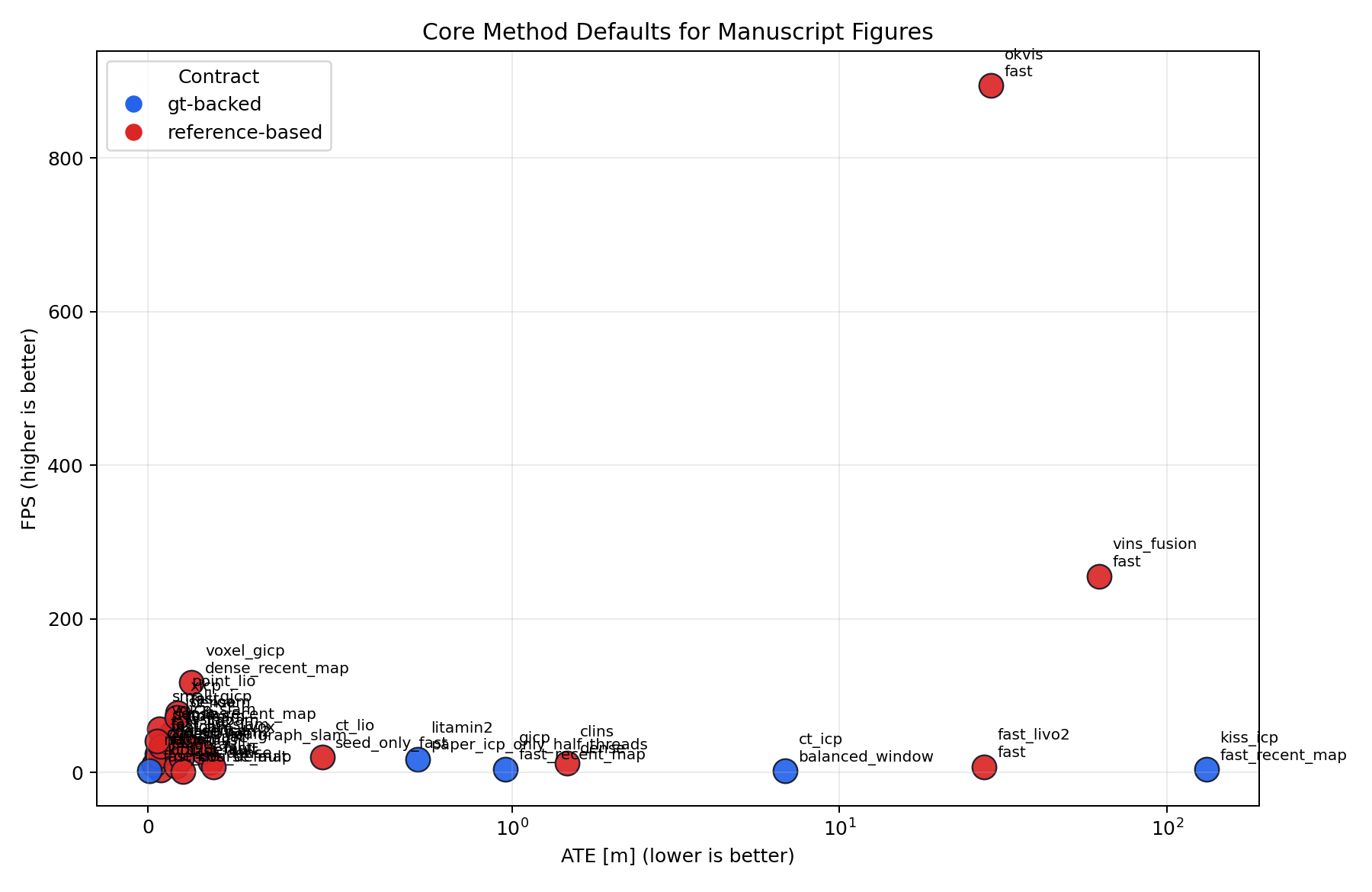

Pareto Front: Accuracy vs Throughput (248 defaults)

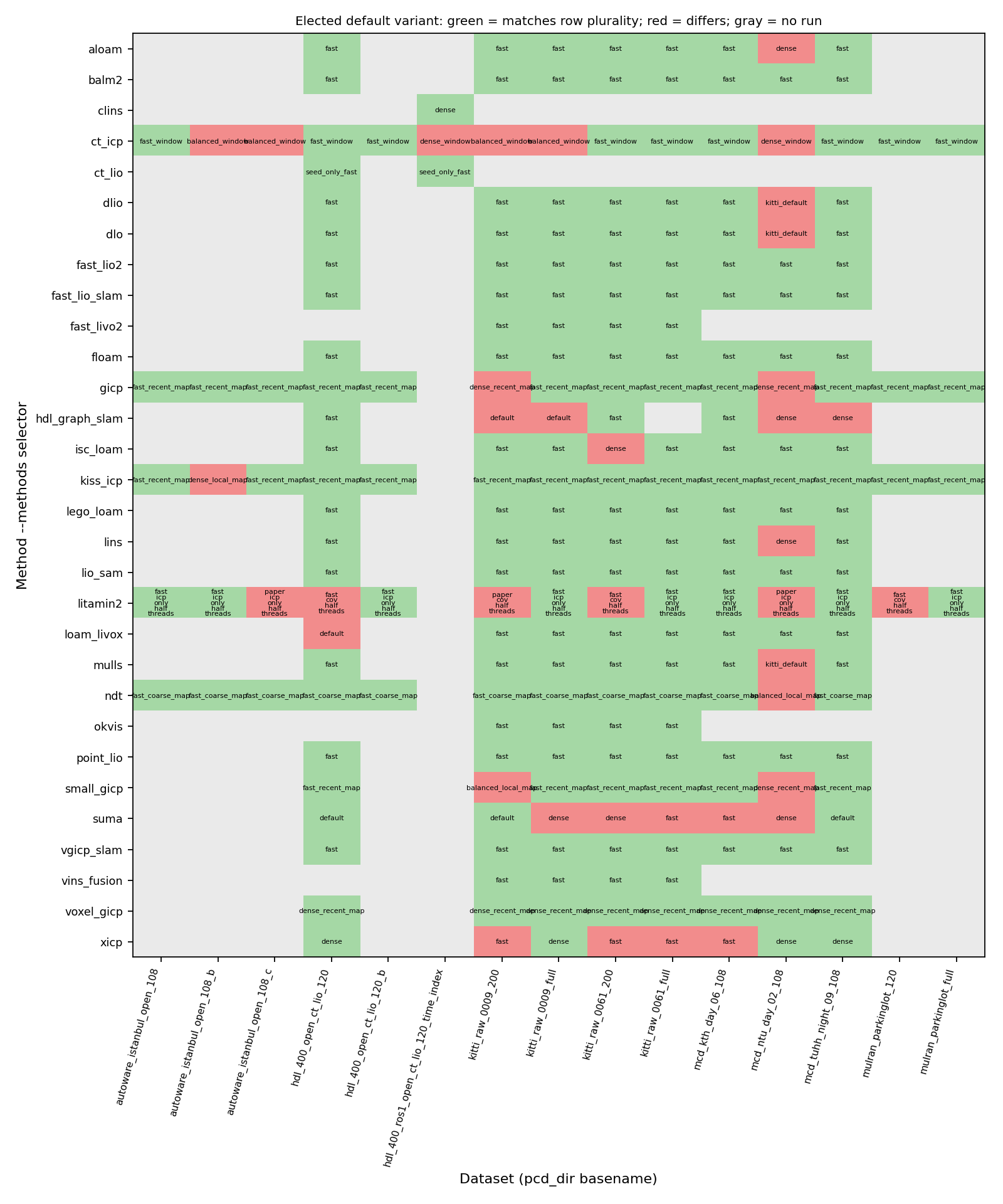
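For readers who want to recompute an accuracy-versus-throughput front from the aggregate results, the following is a minimal sketch of Pareto filtering. It assumes each default variant is summarized by an error value (lower is better) and a throughput value (higher is better). The `Result` type, its field names, and the function name are hypothetical and are not taken from the repository's result schema.

```python
from typing import List, NamedTuple


class Result(NamedTuple):
    """Hypothetical per-variant summary; field names are assumptions."""
    name: str
    error: float       # e.g. trajectory error, lower is better
    throughput: float  # e.g. frames per second, higher is better


def pareto_front(results: List[Result]) -> List[Result]:
    """Return the non-dominated results, sorted by increasing error.

    A result is dominated if another one is at least as good on both
    axes and strictly better on at least one.
    """
    front = []
    for r in results:
        dominated = any(
            o.error <= r.error
            and o.throughput >= r.throughput
            and (o.error < r.error or o.throughput > r.throughput)
            for o in results
        )
        if not dominated:
            front.append(r)
    return sorted(front, key=lambda r: r.error)
```

The pairwise comparison is quadratic in the number of results, which is negligible for a few hundred defaults. A sort-and-sweep version would scale better, but it is harder to check by eye.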

Default Variant Instability Across Datasets

Variant Fronts by Method Family

GT-Seed Ablation Finding

What's Included

- `evaluation/src/pcd_dogfooding.cpp`: unified benchmark CLI (27 methods)
- `experiments/*_matrix.json`: 250 active + 80 pending manifests
- `experiments/results/`: all aggregate results
- `docs/variant_analysis.md`: cross-dataset stability + profile impact analysis
- `docs/implementation_notes.md`: 27 methods quality audit
- `docs/native_time_provenance.md`: HDL-400 time provenance documentation
- `Dockerfile`: reproducible build (Ceres 2.1/2.2 compatible)