DeepLabCut is a toolbox for markerless pose estimation of animals performing various tasks. Originally, we demonstrated its capabilities for trail tracking, reaching in mice, and various Drosophila behaviors during egg-laying (see Mathis et al. for details). However, nothing about the toolbox restricts it to these tasks or species. It has already been applied successfully (by us and others) to rats, humans, various fish species, bacteria, leeches, various robots, cheetahs, mouse whiskers, and race horses. This work uses the feature detectors (ResNets + readout layers) of DeeperCut, one of the state-of-the-art algorithms for human pose estimation, by Insafutdinov et al., which inspired the name of our toolbox (see references below).

VERSION 2.0: This is the Python package of DeepLabCut released with our Nature Protocols paper (preprint here). The package includes graphical user interfaces for labeling your data and takes you from dataset creation to automatic behavioral analysis. It also introduces an active learning framework for using DeepLabCut efficiently on large experimental projects, and new data augmentation that improves network performance, especially in challenging cases (see panel b).

VERSION 1.0: The initial Nature Neuroscience version of DeepLabCut can be found in the git history, or here: https://github.com/AlexEMG/DeepLabCut/releases/tag/1.11

Please check out www.deeplabcut.org for more video demonstrations of automated tracking. Above: courtesy of the Murthy (mouse), Leventhal (rat), and Axel (fly) labs!

How to install DeepLabCut

An overview of the pipeline and workflow for project management. Please also read the Nature Protocols user-guide!

We provide several Jupyter Notebooks: one that walks you through a demo dataset to test your installation, and another to run DeepLabCut from the beginning on your own data. We also show how to use the code in Docker and on Google Colab. Please also read the Nature Protocols paper.
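As a rough orientation before opening the notebooks, the main steps of a DeepLabCut 2.x project map onto top-level functions of the `deeplabcut` package. The sketch below is not a substitute for the notebooks or the Nature Protocols guide: the wrapper `run_dlc_pipeline` is hypothetical, only the most common arguments are shown, and argument names should be checked against your installed version.

```python
def run_dlc_pipeline(project, experimenter, videos, working_directory):
    """Hypothetical wrapper chaining the main DeepLabCut 2.x steps.

    `videos` is a list of full paths to video files.
    """
    # Imported here so this sketch can be loaded without DeepLabCut installed.
    import deeplabcut

    # Create the project folders and config.yaml; returns the path to config.yaml.
    config_path = deeplabcut.create_new_project(
        project, experimenter, videos, working_directory=working_directory
    )
    # Select frames for labeling (k-means clustering over video frames).
    deeplabcut.extract_frames(config_path, mode="automatic", algo="kmeans")
    # Opens the labeling GUI for the body parts defined in config.yaml.
    deeplabcut.label_frames(config_path)
    # Build the training set, then train and evaluate the network.
    deeplabcut.create_training_dataset(config_path)
    deeplabcut.train_network(config_path)
    deeplabcut.evaluate_network(config_path, plotting=True)
    # Run the trained network on the videos and also save predictions as CSV.
    deeplabcut.analyze_videos(config_path, videos, save_as_csv=True)
    deeplabcut.create_labeled_video(config_path, videos)
    return config_path
```

In practice, each step is run and checked separately, as in the notebooks (for example, you edit config.yaml after creating the project and inspect labels before training); chaining them in one function is only for illustration.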

-

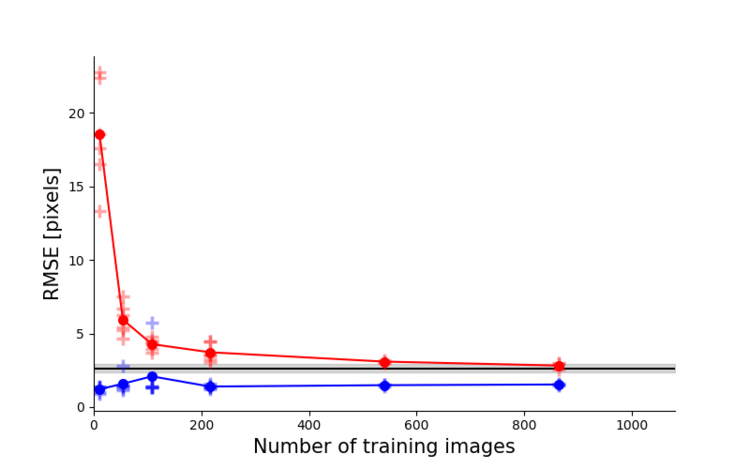

Top left: Due to transfer learning it requires little training data for multiple, challenging behaviors (see Mathis et al. for details).

-

Top Right: Video analysis is fast (see Mathis/Warren for details)

-

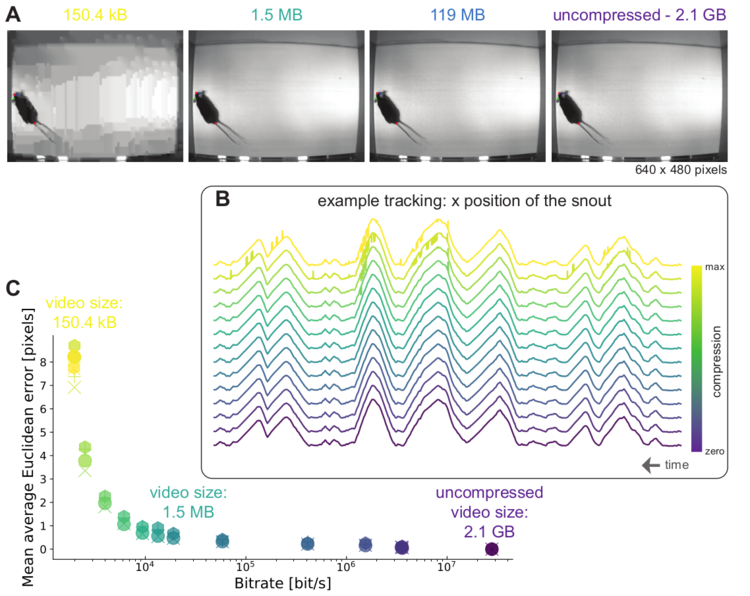

Mid Left: The feature detectors are robust to video compression (see Mathis/Warren for details)

-

Mid Right: It allows 3D pose estimation with a single network and camera (see Mathis/Warren for details)

-

Bottom: It allows 3D pose estimation with a single network trained on data from multple cameras together with standard triangulation methods (see Nath* and Mathis* et al. for details)
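The multi-camera approach relies on standard triangulation: once each camera is calibrated, the same body part detected in two views can be lifted to 3D. The following is a minimal, generic sketch of linear (DLT) triangulation in NumPy, not DeepLabCut's own implementation. The function names `triangulate_dlt` and `project` are hypothetical, and the 3x4 projection matrices are assumed to come from a prior stereo calibration.

```python
import numpy as np


def triangulate_dlt(P1, P2, x1, x2):
    """Triangulate one 3D point from its pixel coordinates in two views.

    P1, P2: 3x4 camera projection matrices (assumed known from calibration).
    x1, x2: (u, v) image coordinates of the same body part in each view.
    """
    u1, v1 = x1
    u2, v2 = x2
    # Each view gives two linear constraints on the homogeneous point X:
    # u * (P[2] @ X) - P[0] @ X = 0 and v * (P[2] @ X) - P[1] @ X = 0.
    A = np.array([
        u1 * P1[2] - P1[0],
        v1 * P1[2] - P1[1],
        u2 * P2[2] - P2[0],
        v2 * P2[2] - P2[1],
    ])
    # Least-squares solution of A @ X = 0 with ||X|| = 1: the right singular
    # vector belonging to the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]  # back to Euclidean coordinates


def project(P, X):
    """Project a 3D point with a 3x4 camera matrix to (u, v) image coordinates."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]


# Synthetic check: two identity-intrinsics cameras, the second shifted 1 unit along x.
P1 = np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])
X_true = np.array([0.5, 0.2, 4.0])
X_est = triangulate_dlt(P1, P2, project(P1, X_true), project(P2, X_true))
print(np.allclose(X_est, X_true))  # True
```

With more than two cameras, each extra view adds two more rows to `A` and the SVD step stays the same; with noisy detections, the result is a least-squares estimate rather than an exact intersection.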

Alexander Mathis, Tanmay Nath, Mackenzie Mathis. The feature detector code is based on Eldar Insafutdinov's TensorFlow implementation of DeeperCut. DeepLabCut is an open-source tool and has benefited from suggestions and edits by many individuals, including Richard Warren, Ronny Eichler, Jonas Rauber, Hao Wu, Federico Claudi, Gary Kane, Taiga Abe, and Jonny Saunders, as well as other contributors. In particular, the authors thank Ronny Eichler for input on the modularized version.

This is an actively developed package and we welcome community development and involvement.

- If you encounter a previously unreported bug or code issue, please post it here (we encourage you to search existing issues first): https://github.com/AlexEMG/DeepLabCut/issues
- For community-sourced help and questions, we ask you to post them here:
- If you want to contribute to the code, please make a pull request that describes how you modified the code and what new functionality it adds, the OS it has been tested on, and the output of testscript.py. Please see more here.

If you use this code or data, please cite Mathis et al., 2018, and if you use the Python package (DeepLabCut 2.0), please also cite Nath, Mathis et al., 2019.

Please check out the following references for more details:

@article{Mathisetal2018,
  title = {DeepLabCut: markerless pose estimation of user-defined body parts with deep learning},
  author = {Alexander Mathis and Pranav Mamidanna and Kevin M. Cury and Taiga Abe and Venkatesh N. Murthy and Mackenzie W. Mathis and Matthias Bethge},
  journal = {Nature Neuroscience},
  year = {2018},
  url = {https://www.nature.com/articles/s41593-018-0209-y}
}

@article{NathMathisetal2019,
  title = {Using DeepLabCut for 3D markerless pose estimation across species and behaviors},
  author = {Nath*, Tanmay and Mathis*, Alexander and Chen, An Chi and Patel, Amir and Bethge, Matthias and Mathis, Mackenzie W},
  journal = {Nature Protocols},
  year = {2019},
  url = {https://doi.org/10.1038/s41596-019-0176-0}
}

@inproceedings{insafutdinov2016eccv,
  title = {DeeperCut: A Deeper, Stronger, and Faster Multi-Person Pose Estimation Model},
  author = {Eldar Insafutdinov and Leonid Pishchulin and Bjoern Andres and Mykhaylo Andriluka and Bernt Schiele},
  booktitle = {ECCV'16},
  year = {2016},
  url = {http://arxiv.org/abs/1605.03170}
}

Our open-access pre-prints:

@article{NathMathis2018,
  author = {Nath*, Tanmay and Mathis*, Alexander and Chen, An Chi and Patel, Amir and Bethge, Matthias and Mathis, Mackenzie W},
  title = {Using DeepLabCut for 3D markerless pose estimation across species and behaviors},
  year = {2018},
  doi = {10.1101/476531},
  publisher = {Cold Spring Harbor Laboratory},
  URL = {https://www.biorxiv.org/content/early/2018/11/24/476531},
  eprint = {https://www.biorxiv.org/content/early/2018/11/24/476531.full.pdf},
  journal = {bioRxiv}
}

@article{mathis2018markerless,
  title = {Markerless tracking of user-defined features with deep learning},
  author = {Mathis, Alexander and Mamidanna, Pranav and Abe, Taiga and Cury, Kevin M and Murthy, Venkatesh N and Mathis, Mackenzie W and Bethge, Matthias},
  journal = {arXiv preprint arXiv:1804.03142},
  year = {2018}
}

@article{MathisWarren2018speed,
  author = {Mathis, Alexander and Warren, Richard A.},
  title = {On the inference speed and video-compression robustness of DeepLabCut},
  year = {2018},
  doi = {10.1101/457242},
  publisher = {Cold Spring Harbor Laboratory},
  URL = {https://www.biorxiv.org/content/early/2018/10/30/457242},
  eprint = {https://www.biorxiv.org/content/early/2018/10/30/457242.full.pdf},
  journal = {bioRxiv}
}

This project is licensed under the GNU Lesser General Public License v3.0. Note that the software is provided "as is", without warranty of any kind, express or implied. If you use this code or data, please cite us!

- June 2019: DLC 2.0.7 released with many updates. For details, see the releases.
- February 2019: DeepLabCut joined Twitter.
- January 2019: We hosted DLC workshops in Warsaw, Munich, and Cambridge. The materials are available here.
- November 2018: We posted a detailed guide for DeepLabCut 2.0 on bioRxiv. It also contains a case study of 3D pose estimation in cheetahs.
- November 2018: Various (post-hoc) analysis scripts contributed by users (and us) will be gathered at DLCutils. Feel free to contribute! In particular, there is a script guiding you through importing a project into the new data format for DLC 2.0.
- October 2018: New preprint on the inference speed and video-compression robustness of DeepLabCut on bioRxiv.
- September 2018: Nature Lab Animal covers DeepLabCut: Behavior tracking cuts deep.
- Kunlin Wei & Konrad Kording write a very nice News & Views on our paper: Behavioral Tracking Gets Real.
- August 2018: Our preprint was published in Nature Neuroscience.
- August 2018: NVIDIA AI Developer News: AI Enables Markerless Animal Tracking.
- July 2018: Ed Yong covered DeepLabCut and interviewed several users for The Atlantic.
- April 2018: First DeepLabCut preprint on arXiv.org.