A multimodal AI system for the Pepper robot powered by advanced speech-to-speech AI models (OpenAI Realtime API, Google Gemini Live, x.ai Grok). It enables natural interactions through voice, touch, vision, and movement, with advanced function calling for robot control (navigation, gestures, animations), information tools (search, weather), and interactive tablet activities (quizzes, memory games, tic-tac-toe, drawing game).

- Screenshots

- Features

- Technology Stack

- Quick Start

- API Key Setup

- Security & Privacy

- Usage

- Core Features & System Capabilities

- Interactive Entertainment

- Architecture

- Development

- Troubleshooting

- Contributing

- License

- Acknowledgments

- Real-time Voice Chat - Natural conversations powered by speech-to-speech (S2S) AI models:

- OpenAI - gpt-realtime, gpt-realtime-mini, gpt-4o-realtime-preview, gpt-4o-mini-realtime-preview

- Azure OpenAI - Same models with network-level isolation and customer-managed encryption for enterprise compliance

- x.ai - Grok Voice Agent API with native web/X search

- Google - Gemini Live API with native audio model and 30 voices

- Audio Input Modes - Two optimized modes for voice capture:

- Direct Audio - Low-latency streaming with native voice activity detection

- Azure Speech STT - Speech is first transcribed to text via Azure Speech Services; performs better for regional dialects (e.g., Swiss German) and provides confidence scores that inform the AI when transcription quality may be uncertain

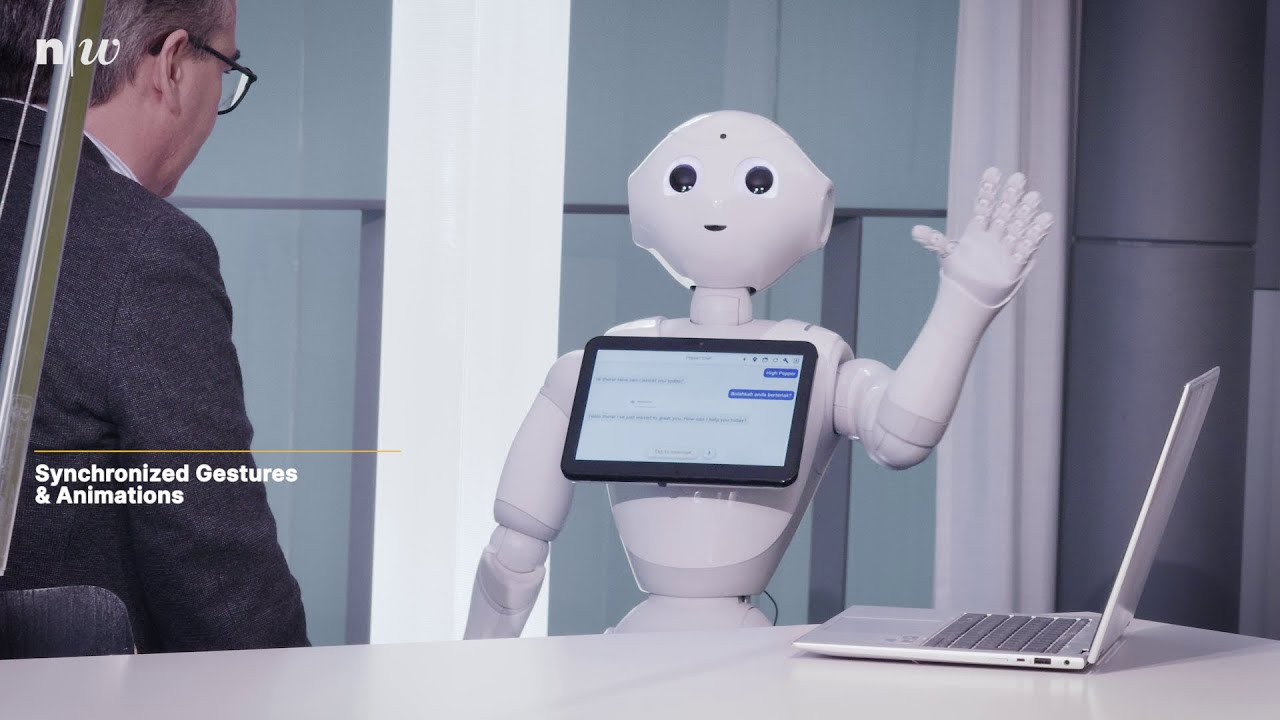

- Synchronized Gestures - Automatic body language during speech output for natural communication

- Dual Build Flavors - Two app variants for different use cases:

- Pepper Flavor - Full robot integration with QiSDK for Pepper hardware

- Standalone Flavor - Runs on any Android device for testing and development without robot hardware

- Expressive Animations - Rich library of robot animations triggered by voice commands (wave, bow, applause, kisses, laugh, etc.)

- Modern Tablet UI - Clean Jetpack Compose chat interface with interactive function cards, real-time overlays, and adaptive toolbar

- Gaze Control - Precise 3D head/eye positioning with duration control and automatic return

- Vision Analysis - Camera-based image understanding and analysis with intelligent obstacle detection

- Live Video Streaming - Continuous video stream (1 FPS) to Gemini Live API for real-time visual context (Google provider only)

- Touch Interaction - Responds to touches on head, hands, and bumpers with contextual AI reactions

- Navigation & Mapping - Complete room mapping and autonomous navigation system

- Human Approach & Following - Intelligent human detection, approaching, and continuous following

- Human Perception Dashboard - Real-time display of detected people with gaze direction, distance, and recognized names

- Local Face Recognition - On-device face identification running on Pepper's head (no cloud API needed)

- Event-Based Rule System - Define custom rules to automatically send context updates to AI based on perception events (person recognized, appeared, etc.)

- Internet Search - Real-time web search capabilities via Tavily API

- Weather Information - Current weather and forecasts via OpenWeatherMap API

- Interactive Quizzes - Dynamic quiz generation and interaction

- Tic Tac Toe Game - Play against the AI on the tablet with a visual board

- Memory Game - Card-matching game with multiple difficulty levels

- Drawing Game - Draw on the tablet and let the AI guess what you drew

- Melody Player - Play synthesized melodies like Happy Birthday

This project uses modern Android Studio versions without the deprecated Pepper SDK plugin. The plugin is no longer maintained and incompatible with recent Android Studio versions. Instead, we configure the project manually following the approach documented here: Pepper with Android Studio in 2024. This enables the use of the latest Android Studio versions, modern AndroidX libraries, Kotlin as the primary language, and the latest Gradle and build tools with improved IDE performance.

The entire application is written in Kotlin, leveraging modern language features:

- Null Safety - Compile-time null checks prevent NullPointerExceptions

- Coroutines - Structured concurrency for asynchronous operations

- Data Classes - Concise model definitions with automatic equals/hashCode/toString

- Extension Functions - Clean API extensions without inheritance

- Hilt Dependency Injection - Type-safe DI with KSP annotation processing

- Jetpack Compose - Modern declarative UI for the chat interface with LazyColumn, Material 3, and Coil image loading

Note on API 23 (Android 6.0) Compatibility:

Pepper v1.8 runs Android 6.0 (API Level 23). This limits some third-party libraries to older versions, as many newer releases require Android 8.0+ (API 26+) for features like java.util.Base64 and MethodHandle. Despite this constraint, the project uses the latest compatible versions of all dependencies and modern development tools (Gradle 8.13, Kotlin 2.0.21, Android Studio latest).

This project supports two build flavors to accommodate different use cases:

- 🤖 Pepper Flavor - Full application with all robot features (navigation, gestures, sensors, robot camera)

- 📱 Standalone Flavor - Runs on any Android device for testing conversational AI without robot hardware

Both flavors share the same core conversational AI system but differ in hardware integration. The standalone version uses stub implementations for robot-specific features and your device's camera instead of Pepper's camera.

- Target Robot: Pepper v1.8 running NAOqi OS 2.9.5

- Required IDE: Android Studio (latest stable version recommended)

- Build Configuration:

- Gradle:

8.13 - Android Gradle Plugin:

8.13.0 - Kotlin:

2.0.21 - CompileSdk / TargetSdk:

35 - MinSdk:

23(Android 6.0)

- Gradle:

- API Keys: For full functionality, API keys for various services are required (see "Configure API Keys" section below)

- Target Device: Any Android device running Android 6.0+ (API 23+)

- IDE: Android Studio (latest stable version recommended)

- Build Configuration: Same as above

- Purpose: Test conversational AI, tool system, and generic features without robot hardware

- Limitations: Robot-specific features (navigation, gestures, camera, sensors) are simulated with log output

- Clone and Configure

- Configure API Keys

- Build Your Flavor

- Open in Android Studio

- Connect to Pepper and Deploy (Pepper) / Install Standalone Version (Standalone)

Option A: From ZIP file (Code Submission)

- Extract the ZIP file to your desired location

- Open a terminal in the extracted project directory

Option B: From Git (when publicly available)

git clone https://github.com/[ANONYMIZED]/pepper-realtime-conversation.git

cd pepper-realtime-conversationCreate configuration file:

- Copy

local.properties.exampletolocal.propertiesin the project root directory - Important: If you create

local.propertiesmanually, you must add your Android SDK path at the top:(Android Studio adds this automatically when you sync the project)sdk.dir=C\:\\Users\\YOUR_USERNAME\\AppData\\Local\\Android\\Sdk

Edit local.properties and add your API keys. See the API Key Setup section below for detailed instructions on obtaining these keys.

# REALTIME API PROVIDERS (Choose one or configure multiple)

OPENAI_API_KEY=your_openai_api_key_here

AZURE_OPENAI_KEY=your_azure_openai_key_here

AZURE_OPENAI_ENDPOINT=your-resource.openai.azure.com

XAI_API_KEY=your_xai_api_key_here

GOOGLE_API_KEY=your_google_api_key_here

# OPTIONAL: Additional features

AZURE_SPEECH_KEY=your_azure_speech_key_here

AZURE_SPEECH_REGION=your_azure_region

GROQ_API_KEY=your_groq_key_here

TAVILY_API_KEY=your_tavily_key_here

OPENWEATHER_API_KEY=your_weather_key

YOUTUBE_API_KEY=your_youtube_api_key🤖 Pepper Flavor (Default)

./gradlew assemblePepperDebug📱 Standalone Flavor

./gradlew assembleStandaloneDebugIn Android Studio:

- Select build flavor from:

Build→Select Build Variant - Choose

pepperDebugfor Pepper robot - Choose

standaloneDebugfor testing on any device

- Open Android Studio (latest stable version)

- Select "Open" and choose the project directory

- Wait for Gradle sync to complete

- The project is now ready to build and deploy

- Enable Developer Mode on Pepper's tablet (Settings → About → Tap "Build number" 7 times)

- Enable USB debugging in Developer Options

- Ensure Pepper's tablet is connected to the same WiFi network as your computer

- On Pepper's tablet, swipe down to view Notifications

- Look for the notification showing the IP address (e.g.,

192.168.1.100) - Note this IP address under "For Run/Debug Config"

- Open the Terminal in Android Studio (bottom toolbar)

- Connect to Pepper (replace with Pepper's actual IP):

adb connect 192.168.1.100- On Pepper's tablet, an "Allow USB debugging?" popup will appear - Accept it (may be hidden behind notifications)

- Verify connection:

adb devices # Should show: 192.168.1.100:5555 device

- "Unable to connect": Check firewall settings (allow port 5555) or try enabling ADB over TCP:

# If you have USB access first: adb tcpip 5555 adb connect 192.168.1.100 - Device not listed: Ensure same WiFi network, check IP address, restart ADB server (

adb kill-server && adb start-server) - "Unauthorized": Accept the USB debugging popup on Pepper's tablet

- In Android Studio's toolbar, verify that "ARTNCORE LPT_200AR" appears in the device dropdown

- Click the green Run button (

▶️ ) in the toolbar - Android Studio will build and install the app on Pepper

- The app will start automatically on Pepper's tablet

Note: The ADB connection persists between sessions. You only need to reconnect if Pepper reboots or changes IP address.

Alternative: Manual APK Installation

# Build the Pepper APK

./gradlew assemblePepperDebug

# Install via ADB (APK path: app/build/outputs/apk/pepper/debug/app-pepper-debug.apk)

adb install -r app/build/outputs/apk/pepper/debug/app-pepper-debug.apkThe standalone version allows testing the conversational AI system on any Android phone or tablet without requiring Pepper hardware.

# Build the standalone APK

./gradlew assembleStandaloneDebug

# Connect your Android device via USB (USB debugging enabled in Developer Options)

adb devices

# Should show your device ID followed by "device"

# Install the APK (APK path: app/build/outputs/apk/standalone/debug/app-standalone-debug.apk)

adb install -r app/build/outputs/apk/standalone/debug/app-standalone-debug.apk- Device not detected: Install device drivers (manufacturer-specific), enable USB debugging

- "Unauthorized": Accept USB debugging prompt on device, check "Always allow from this computer"

- USB issues: Try different USB cable/port, or use Option 2 below

# Build the standalone APK

./gradlew assembleStandaloneDebug

# APK location: app/build/outputs/apk/standalone/debug/app-standalone-debug.apkTransfer the APK to your Android device:

- Via cloud storage (Google Drive, OneDrive, etc.)

- Via email attachment

- Via file transfer (USB in file mode)

On your Android device:

- Enable "Install from Unknown Sources" in Settings → Security

- Open the APK file in your file manager

- Tap "Install"

- ✅ Full conversational AI (Realtime API or Azure Speech)

- ✅ Vision analysis (uses device front camera for automatic photo capture)

- ✅ Internet search, weather, quizzes, games

- ✅ All generic tools and function calling

- ⏸️ Robot movements/gestures (simulated and logged)

- ⏸️ Navigation and mapping (simulated)

- ⏸️ Human Perception: No tracking or face recognition (requires Pepper's head server)

Note: The app requires camera permission for vision analysis. Grant camera access when prompted.

For local face recognition on Pepper (identify people by name without cloud APIs), you need to deploy additional software to Pepper's head computer.

Note: This is optional! The app works fully without face recognition - people will simply appear as "Unknown" in the perception dashboard.

- Docker installed on your PC

- SSH access to Pepper (default password:

nao)

cd tools/pepper-face-recognition

# Build packages (Windows)

.\build.ps1

# Build packages (Linux/macOS)

./build.sh

# Deploy to Pepper

.\deploy.ps1 -PepperIP "<PEPPER_IP>" # Windows

./deploy.sh <PEPPER_IP> # Linux/macOSAdd to your local.properties:

PEPPER_SSH_PASSWORD=naoThis allows the Android app to automatically start the face recognition server via SSH.

📖 Full documentation: See tools/pepper-face-recognition/README.md for detailed instructions, troubleshooting, and API documentation.

Choose one of the following speech-to-speech AI providers:

- Go to platform.openai.com

- Create an API key

- That's it! The app supports all Realtime API models:

gpt-realtime(GA model with built-in vision ✅)gpt-realtime-mini(Affordable GA model with built-in vision ✅)gpt-4o-realtime-preview(Older model - requires Groq for vision)gpt-4o-mini-realtime-preview(Older mini model - requires Groq for vision)

- Go to Azure Portal

- Create an Azure OpenAI resource

- Deploy one or more of the supported models:

gpt-realtime(GA model with built-in vision)gpt-realtime-mini(Affordable GA model with built-in vision)gpt-4o-realtime-preview(Older model - requires Groq for vision)gpt-4o-mini-realtime-preview(Older mini model - requires Groq for vision)

- Copy your API key and endpoint

Privacy & Compliance Advantages:

- Data Residency: Data processed and stored within your chosen Azure region (EU/Switzerland available for GDPR compliance)

- No Training on Your Data: Microsoft guarantees that customer data is not used for model training

- Enterprise Controls: Role-based access control, encryption at rest and in transit, comprehensive audit logging

- Compliance: Supports GDPR, HIPAA, and other regulatory frameworks

- Go to x.ai

- Create an API key

- Uses the Grok Voice Agent API (speech-to-speech model, OpenAI Realtime API compatible)

Unique Features:

- Native Web Search: Built-in web search without Tavily API (configurable in settings)

- Native X Search: Search posts on X/Twitter in real-time (configurable in settings)

- 5 Distinct Voices: Ara, Rex, Sal, Eve, Leo

- 100+ Languages: Multilingual support out of the box

- Note: Vision analysis requires

GROQ_API_KEY(Grok Voice Agent API doesn't support images)

- Go to Google AI Studio

- Create an API key

- Uses the Gemini Live API with native audio capabilities

Unique Features:

- Native Audio Model: End-to-end speech model (audio in, audio out) without separate STT/TTS

- Automatic Voice Activity Detection: Configurable start/end sensitivity, prefix padding, and silence duration

- Thinking Budget: Optional chain-of-thought reasoning with configurable token budget

- Show Thinking: Display AI thinking traces in chat bubbles (requires Thinking Budget > 0)

- Google Search Grounding: Real-time web search for improved accuracy and reduced hallucinations

- Proactive Audio: Allows Gemini to proactively decide not to respond when content is not relevant

- Live Video Streaming: Continuous 1 FPS video stream to Gemini for real-time visual context during conversations. Recommended for vision tasks - the video stream provides more reliable visual understanding than static image analysis, as Gemini Live is optimized for continuous video input.

- 30 Distinct Voices: Extensive selection including Puck, Charon, Kore, Fenrir, Aoede, and many more

- Note: Uses

v1alphaAPI version withgemini-2.5-flash-native-audio-previewmodel

- Default: App uses the AI model's native audio input (no separate key needed)

- Alternative: Azure Speech for better dialect recognition

- Setup:

- Go to Azure Portal

- Create a Speech Services resource

- Copy your API key and region

- In app: Settings → Audio Input → "Azure Speech (Best for Dialects)"

- Free Tier: 14,400 requests/day

- Get Key: console.groq.com

- Enables: Alternative vision analysis provider (gpt-realtime and gpt-realtime-mini have built-in vision, only required for vision analysis with gpt-4o-realtime-preview and gpt-4o-mini-realtime-preview)

- Free Tier: 1,000 searches/month

- Get Key: tavily.com

- Enables: Real-time web search

- Free Tier: 1,000 calls/day

- Get Key: openweathermap.org/api

- Enables: Weather information and forecasts

- Free Tier: 10,000 requests/day

- Get Key: console.cloud.google.com

- Enables: Video search and playback in popup window

- Current implementation stores API keys in

BuildConfig(compiled into APK) - convenient for development but not secure for production - Keys are accessible via APK decompilation

- For production: Use runtime entry with

EncryptedSharedPreferencesor proxy API calls through your backend server

This app sends data to third-party services when features are used:

- OpenAI/Azure (Realtime API), x.ai (Grok), or Google (Gemini Live): Audio, messages, images, tool results

- Azure Speech (optional): Audio for transcription

- Groq/Tavily/OpenWeather/YouTube (optional): Search queries, images, location data

Local Face Recognition:

- Face recognition runs locally on Pepper's head - no cloud API needed

- Face data stored only on the robot, never sent to external services

- GDPR/CCPA compliant by design - biometric data stays on-device

To disable optional features: Leave corresponding API keys empty in local.properties (or remove if already entered), or use Settings → Audio Input to switch modes.

Camera & Biometric Consent:

- Pepper (robot camera) / Standalone (front camera) used for vision analysis

- Local face recognition processes biometric data on-device only

- To disable vision: Leave

GROQ_API_KEYempty (or remove/revoke camera permission)

Local Storage: Chat history and maps stored locally; clear via "New Chat" button or Android Settings → Clear Data.

- Launch the app on your Pepper robot

- Wait for "Ready" status

- Speak naturally to start conversation

- Tap the Status Capsule at the bottom to interrupt robot speech (only active during speaking)

- Tap the Microphone Button next to the capsule to toggle mute (works in any state)

- Tap dashboard icon (👁️) in the top toolbar to toggle Human Perception Dashboard overlay

- Touch robot's head, hands, or bumpers for physical interaction

- Tap navigation icon (📍) in top toolbar to show/hide the map preview with saved locations

- Tap function call cards in chat to view detailed arguments and results

- Tap vision analysis photos in chat to view them in full-screen overlay

- "What do you see?" - Triggers vision analysis (uses gpt-realtime or Groq API)

- "What time is it?" or "What's the date?" - Gets current date and time information

- "What's the weather like?" - Gets weather information (requires OpenWeather API)

- "Search for [topic]" - Performs internet search (requires Tavily API)

- "Play [song/video]" - Searches and plays YouTube videos (requires YouTube API)

- "Tell me a joke" - Random joke from local database

- "Show me [animation]" - Plays Pepper animations (hello/wave, bow, applause, kisses, laugh, happy, etc.)

- "Start a Tic Tac Toe game" - Opens the game dialog for voice-controlled gameplay

- "Move [distance] [direction]" - Basic movement in any direction (0.1-4.0m)

- "Move 2 meters forward" - Simple forward movement

- "Move 1 meter forward and 2 meters right" - Combined diagonal movement

- "Move 0.5 meters backward and 1 meter left" - Combined movement in any direction

- "Turn [direction] [degrees]" - Rotate in place (left/right, 15-180 degrees)

- "Approach him/her" - Intelligently approach a detected person for interaction

- "Come to me" - Alternative command to approach the user

- "Follow me" - Continuously follow the nearest person at a specified distance

- "Follow me at 1 meter distance" - Follows the user maintaining 1m distance

- "Please follow me" - Uses default 1m distance

- Pepper tracks and follows the person until stopped or the person is lost

- "Stop following" - Stop the active follow action

- "Stop following me" - Alternative command to stop following

- "Look at [target]" - Directs Pepper's gaze towards a specific 3D position relative to robot base

- "Look at the ground in front of you" - AI calculates coordinates (1.0, 0.0, 0.0)

- "Look up at the ceiling" - AI calculates coordinates (0.0, 0.0, 2.5)

- "Look two meters to your left and one meter up" - AI calculates coordinates (0.0, 2.0, 1.0)

- "Look at the ground one meter ahead for 5 seconds" - Duration control with auto-return

- Perfect for Vision Analysis - "What do you see one meter in front of you on the ground?"

- AI automatically: 1) Looks at position 2) Captures image 3) Analyzes vision 4) Returns gaze

- "Create a map" - Start mapping the current environment

- "Move forward 2 meters" - Guide Pepper during mapping

- "Save this location as [name]" - Save current position with a name

- "Finish the map" - Complete and save the environment map

- "Go to [location]" - Navigate to any saved location

- "Navigate to [location]" - Alternative navigation command

- Tap the wrench icon (🔧) in the top-right toolbar or swipe from left edge to access settings drawer

- API Provider - Choose between OpenAI Direct, Azure OpenAI, x.ai Grok, or Google Gemini

- Model Selection - Select from available models (varies by provider)

- Voice Selection - Choose from available voices (varies by provider)

- Audio Input Mode - Switch between Direct audio streaming and Azure Speech Services STT

- System Prompt - Customize the AI's personality and behavior instructions

- Recognition Language - Set speech recognition language (German, English, French, Italian variants)

- Temperature - Adjust AI creativity/randomness (0-100%)

- Volume Control - Set audio output volume

- Silence Timeout - Configure required silence duration for speech recognition completion

- Tool Management - Enable/disable specific function tools (vision, weather, search, games, etc.)

- ASR Confidence Threshold - Set minimum confidence level for speech recognition acceptance

You can switch between different AI providers in the settings:

- OpenAI Direct (Realtime API): Supports all four models (

gpt-realtime,gpt-realtime-mini,gpt-4o-realtime-preview,gpt-4o-mini-realtime-preview) directly from OpenAI with 10 voices - Azure OpenAI (Realtime API): Supports all four models with your Azure deployment, network-level isolation, and customer-managed encryption for enterprise compliance

- x.ai Grok (Grok Voice Agent API): Speech-to-speech voice model with native web/X search capabilities and 5 unique voices

- Google Gemini (Live API): Native audio model with end-to-end speech, configurable VAD, thinking budget, and 30 voices

Note: Changing the API provider automatically updates available models and voices. Model/voice changes restart the session automatically.

The default system prompt is optimized following OpenAI's Realtime API Prompting Guide. It includes:

- Structured sections - Role, Personality, Tools, Instructions for better model understanding

- Sample phrases - Consistent greetings, acknowledgments, and closings

- Variety rules - Prevents repetitive responses ("I see" | "Got it" | "Understood")

- Tool integration - Natural tool usage without explicit announcements

- Physical embodiment - First-person perspective as the robot

To customize:

- Edit the system prompt in

app/src/main/assets/default_system_prompt.txt - Or modify it dynamically in Settings → System Prompt within the app

- Follow the Realtime Prompting Guide for best practices

Key tips from the guide:

- Use bullets over paragraphs for clarity

- Guide with specific examples

- Capitalize important rules for emphasis

- Add conversation flow states for complex interactions

The app provides comprehensive voice input and multilingual capabilities with two speech recognition modes and intelligent language handling.

The app supports two speech recognition modes, configurable in Settings → Audio Input:

- Simple Setup - No separate speech API key needed

- Lower Latency - Integrated audio processing with conversation flow

- Server VAD - Automatic speech detection handled by the model

- Async Transcripts - User speech transcripts appear after responses start

- Direct Audio Processing - Model processes audio directly for responses

- Note: The displayed transcript may not exactly match what the model understood, as transcription is generated asynchronously

- Best For - English and major languages, quick setup

- Superior Quality - Significantly better for regional dialects and low-resource languages

- Continuous Recognition - Transcription while speaking, with interim results in chat history

- Confidence Scores - Real-time feedback on transcription quality

- Full Transparency - Exact transcribed text sent to model (what you see = what the model receives)

- Configurable Silence Timeout - End-of-speech detection (default: 500ms, adjustable in settings)

- Requires -

AZURE_SPEECH_KEYinlocal.properties

- Open app Settings (tap wrench icon 🔧 in toolbar or swipe from right edge)

- Select Audio Input dropdown

- Choose preferred mode:

- "Direct Audio" - Default, no extra keys

- "Azure Speech" - Requires Azure Speech key

- Close settings - change takes effect immediately

Note: Only available in Azure Speech mode.

- Confidence Scoring - Each transcription gets a score (0-100%)

- Smart Tagging - Low-confidence speech tagged with "[Low confidence: XX%]"

- AI Clarification - AI can ask "Did you say X?" when uncertain

- Adjustable Threshold - Configurable in settings (default: 70%)

- Universal Language Support - The Realtime API can respond in any language, including regional dialects

- Instant Language Switching - The AI can switch languages mid-conversation when requested by the user or specified in the system prompt

- Contextual Language Use - AI automatically adapts to the user's preferred language based on conversation context

The app supports 30+ languages in both audio input modes:

Direct Audio Mode:

- Language setting affects the input audio transcription (Whisper-based) that appears in chat

- Does NOT affect what the model understands (model processes audio directly with automatic language detection)

- Transcription quality improves when correct language is configured

Azure Speech Mode:

- Language setting is critical - directly affects what text is sent to the model

- Must match the spoken language for accurate recognition

Supported languages (both modes):

- German variants: de-DE (Germany), de-AT (Austria), de-CH (Switzerland)

- English variants: en-US, en-GB, en-AU, en-CA

- French variants: fr-FR (France), fr-CA (Canada), fr-CH (Switzerland)

- Italian variants: it-IT (Italy), it-CH (Switzerland)

- Spanish variants: es-ES (Spain), es-AR (Argentina), es-MX (Mexico)

- Portuguese variants: pt-BR (Brazil), pt-PT (Portugal)

- Chinese variants: zh-CN (Mandarin Simplified), zh-HK (Cantonese Traditional), zh-TW (Taiwanese Mandarin)

- Asian languages: ja-JP (Japanese), ko-KR (Korean)

- European languages: nl-NL (Dutch), nb-NO (Norwegian), sv-SE (Swedish), da-DK (Danish)

- Other languages: ru-RU (Russian), ar-AE/ar-SA (Arabic)

- Settings Control - Recognition language can be changed in app settings

- Live Switching - Language changes take effect immediately during active sessions

- No App Restart - System reconfigures automatically

Direct Audio Mode:

- ✅ Model understands speech via automatic language detection (no configuration required)

- ⚙️ Language setting improves displayed transcript quality (Whisper-based)

- 💡 Recommendation: Configure correct language for better transcripts, but not strictly necessary

Azure Speech Mode:

⚠️ Language setting is critical - must match spoken language- Users must speak in the configured recognition language for accurate transcription

- If user speaks in different language than configured, recognition will fail or be very poor

- 💡 Recommendation: Always change language setting before switching spoken languages

AI Response Language:

- ✅ AI can respond in any language regardless of input mode or language setting

# System prompt in English, but AI can respond in other languages when requested

User (English): "Please respond in German from now on"

AI (German): "Gerne! Ab jetzt spreche ich auf Deutsch mit dir."

# User must change recognition language in settings to speak German

User: (Changes settings to de-DE, then speaks German)

"Erzähl mir einen Witz"

AI: "Gerne! Hier ist ein Witz für dich..."Due to Pepper's hardware limitations (no echo cancellation), the app uses an intelligent microphone management system to prevent the robot from hearing itself while ensuring users can still interrupt ongoing responses.

- Closed During Speech - Microphone automatically closes when Pepper is speaking or processing

- Open During Listening - Microphone only active when waiting for user input

- Hardware Constraint - Necessary because Pepper's older hardware lacks echo cancellation

The UI provides two separate controls for better user experience:

- Shows current robot state (Listening, Thinking, "Tap to interrupt" during Speaking)

- Tap during Speaking - Immediately stops speech

- No action in other states - Capsule is purely informational when not speaking

- Always visible next to status capsule

- Three visual states communicate clearly:

- Blue filled = Microphone active (listening to you)

- Gray outlined = Microphone paused (will activate when robot finishes speaking)

- Red with slash = Microphone muted (stays off until you unmute)

- Persistent intent - Muting during robot speech keeps mic off after robot finishes

- Pre-mute feature - Tap mute while robot is speaking to prevent auto-reactivation

- Only visible when using Google Gemini Live API provider

- Toggles live video streaming at 1 FPS to provide visual context during conversations

- Three visual states:

- Green pulsing = Video streaming active (camera capturing and sending frames)

- Amber outlined = Video paused (will resume when robot finishes speaking)

- Gray = Video streaming off

- Camera Preview - Small preview window appears in the top-right corner when streaming

- Token Usage - Video streaming consumes additional tokens; disabled by default

Certain events automatically interrupt ongoing responses to provide immediate feedback:

- Touch Events - Physical touch triggers new contextual response

- Navigation Updates - Status messages like "[MAP LOADED]" or "[NAVIGATION ERROR]"

- Game Events - Memory game matches, quiz answers, TicTacToe moves

- Event Rules - Rules configured with "Interrupt & Respond" action type

- Instant Stop - Responses halt immediately when interrupted

- Queue Management - Audio buffers cleared to prevent delayed playback

- State Coordination - Turn management ensures proper microphone timing

- Seamless Recovery - Smooth transition back to listening mode

The chat interface is built with Jetpack Compose, providing a modern declarative UI with smooth animations and efficient list rendering via LazyColumn.

- Expandable Cards - Each function call appears as a collapsible card with status icon (✅/⏳)

- Tool Icons - Unique emoji for each tool (🌐 search, 🌤️ weather, 👁️ vision, etc.)

- Tap to Expand - Shows arguments and results in readable format

- Rule Trigger Display - When an event rule fires, a card appears in chat showing which rule was triggered

- Expandable Details - Click to see the full context message sent to AI

- Rule Reference - Shows rule name and event type for easy identification

- Thumbnail View - Compact preview images in chat flow

- Tap to Expand - Full-screen overlay with high resolution

- Auto Cleanup - Photos managed automatically per session

The Human Perception Dashboard provides real-time visualization of all detected people around Pepper using a custom Head-Based Perception System running on Pepper's head computer. This system provides stable person tracking, face recognition, and gaze detection via WebSocket streaming.

Why a Custom System?

- The QiSDK's built-in PeoplePerception offered age, gender, and emotion detection, but these were highly unreliable in practice

- The

ALFaceDetectionmodule for face identification is no longer available on newer Android-based Pepper robots - Our custom solution focuses on reliable person tracking and face recognition without the unreliable metadata

- Tap the dashboard symbol in the status bar to toggle the dashboard overlay

- Dashboard appears in the top-right corner as a floating overlay

- Four tabs: Live (people list), Radar (visual map), Faces (management), Settings (parameters)

- Tap close button (×) or dashboard symbol again to hide

Real-time list of all detected people showing:

- Track ID - Stable identifier that persists across frames

- Name - Recognized name from face database (or "Unknown")

- Distance - Estimated optically from face size

- Position - World coordinates (yaw, pitch angles)

- Gaze - Whether person is looking at the robot (with duration)

- Duration - How long this person has been tracked

Visual representation of people around the robot:

- Top-down view - Shows relative positions

- Distance circles - 1m, 2m, 3m range indicators

- Person markers - Color-coded by recognition status

- Gaze indicators - Shows who's looking at the robot

Manage the face recognition database:

- View registered faces - Thumbnails with names

- Register new face - Capture from camera

- Delete faces - Remove from database

- Real-time sync - Changes reflected immediately

Configure perception parameters in real-time. A "Reset to Defaults" button restores all values.

Detection:

- Detection Confidence (0.5-0.99) - Min confidence for face detection (filters blur/noise)

Recognition:

- Recognition Threshold (0.3-0.9) - Lower = stricter matching

- Recognition Cooldown (1-10s) - Time between recognition attempts

Tracker:

- Max Angle Distance (5-30°) - Tracking tolerance for movement

- Track Timeout (1-10s) - Time before removing lost tracks

- Confirm Count (1-10) - Detections needed before track is confirmed

- Lost Buffer (0.5-10s) - How long lost tracks stay for recovery

- World Match Distance (0.3-2.0m) - Max 3D distance for track matching

Gaze & Performance:

- Gaze Center Tolerance (0.05-0.5) - How off-center is still "looking at robot"

- Cycle Delay (0-1000ms, default 100ms) - Pause between tracking cycles

- Camera Resolution - Detection resolution (QQVGA/QVGA/VGA) - affects range and speed

Pepper Head (Python) Pepper Tablet (Android)

┌─────────────────────┐ ┌──────────────────────────┐

│ camera_daemon.py │ │ PerceptionWebSocketClient│

│ (Python 2.7) │ │ (OkHttp WebSocket) │

│ • VGA camera (SHM) │ └───────────┬──────────────┘

│ • 5050 (internal) │ │

└─────────┬───────────┘ │

│ │

┌─────────▼───────────┐ ┌───────────▼──────────────┐

│ face_recognition_ │ │ PerceptionService.kt │

│ server.py │ │ • Flow collection │

│ (Python 3.7) │──────────│ • Event detection │

│ • Detection (YuNet) │ WebSocket│ • UI updates │

│ • Recognition(SFace)│ :5002 │ │

│ • Tracking │ └──────────────────────────┘

│ • Gaze detection │

│ • 5000 (HTTP legacy)│

└─────────────────────┘

- Stable Tracking - Track IDs persist across frames using angle-based matching

- Real-time Updates - WebSocket streaming at 3-5 Hz

- Local Face Recognition - Runs entirely on Pepper's head (no cloud API)

- Gaze Detection - Determines if person is looking at robot based on face position

- Configurable Parameters - All settings adjustable in real-time via the dashboard

- Event System Integration - Events trigger custom rules (see Event Rules section)

- Personalized Greetings - Recognize returning visitors by name

- Social Interaction - Understand who's interested in engaging

- Approach Decisions - See which person to approach first

- Group Dynamics - Monitor multiple people simultaneously

- Research - Study human-robot interaction patterns

- Head-Based Processing - Detection and recognition run on Pepper's head (Intel Atom)

- WebSocket Streaming - Real-time bidirectional communication

- Persistent Camera - Camera daemon keeps streams open for minimal latency

- Async Recognition - Face recognition runs in separate thread to avoid blocking

- Cached Encodings - Face database cached in memory for fast matching

- QiSDK Navigation - QiSDK HumanAwareness is still used for approach/follow behaviors

📖 Full technical documentation: See tools/pepper-face-recognition/README.md

The application features a powerful event management system that allows you to define custom rules for automatically sending context updates to the AI model based on robot perception events.

- Tap the lightning bolt icon (⚡) in the top toolbar to open the Event Rules overlay

- Create, edit, and delete rules through the visual rule builder

- View triggered events in the chat history as expandable cards

Rules are evaluated continuously based on perception events and trigger context updates when conditions match. Each rule consists of:

| Component | Description |

|---|---|

| Event Type | The trigger event (e.g., person recognized, person appeared) |

| Conditions | Optional AND-linked filters to refine when the rule applies |

| Action Type | How the context update is sent (interrupt, append, or silent) |

| Message Template | The text sent to the AI, with dynamic placeholders |

| Cooldown | Minimum time between triggers (prevents spam) |

| Event | Description |

|---|---|

PERSON_RECOGNIZED |

A known person was identified by face recognition |

PERSON_APPEARED |

A new person entered the robot's field of view |

PERSON_DISAPPEARED |

A person left the robot's field of view |

PERSON_LOOKING |

A person started looking at the robot |

PERSON_STOPPED_LOOKING |

A person stopped looking at the robot |

PERSON_APPROACHED_CLOSE |

A person came within 1.5 meters |

PERSON_APPROACHED_INTERACTION |

A person came within 3 meters (interaction distance) |

Filter rules with additional conditions on these fields:

| Field | Description | Example Value |

|---|---|---|

personName |

Name from face recognition | "Max" |

distance |

Distance in meters | "2.0" |

isLooking |

Is person looking at robot | "true" |

gazeDuration |

Time looking at robot (ms) | "3000" |

trackAge |

Time since person detected (ms) | "10000" |

peopleCount |

Number of detected people | "3" |

robotState |

Current robot state | "LISTENING" |

| Action | Behavior |

|---|---|

| Interrupt and Respond | Stops current speech, sends context update, triggers new AI response |

| Append and Respond | Adds context without interruption, triggers AI response |

| Silent Context Update | Adds information to context without triggering a response |

Templates support dynamic placeholders that are automatically replaced with real-time data:

{personName} - Name from face recognition (or "Unknown")

{distance} - Distance in meters (e.g., "2.3m")

{isLooking} - Whether person is looking at robot (true/false)

{gazeDuration} - How long looking at robot (e.g., "5s")

{trackAge} - How long person has been tracked (e.g., "30s")

{peopleCount} - Number of people detected

{robotState} - Robot state (LISTENING, SPEAKING, THINKING, IDLE)

{timestamp} - Current time (HH:mm:ss)

{

"name": "Greet known visitors",

"eventType": "PERSON_RECOGNIZED",

"conditions": [

{ "field": "distance", "operator": "LESS_THAN", "value": "2.5" }

],

"actionType": "INTERRUPT_AND_RESPOND",

"template": "A person you know named {personName} has appeared at {distance} distance.",

"cooldownMs": 5000

}- Visual Rule Builder - Intuitive UI for creating and editing rules

- Enable/Disable Toggle - Quickly activate or deactivate individual rules

- Import/Export - Save and share rule configurations as JSON

- Auto-Save - Rules are automatically persisted to device storage

- Live Feedback - See triggered events as expandable cards in the chat interface

- Cooldown Protection - Prevents the same rule from firing too frequently

When rules are triggered, they appear in the chat history as expandable event cards showing:

- Rule name and event type

- Action type (interrupt/append/silent)

- The exact message sent to the AI model

Pepper responds to physical touch on various sensors. When touched, the AI receives context and can respond naturally in conversation.

- 🧠 Head Touch - Touch the top of Pepper's head

- 🤲 Hand Touch - Touch either left or right hand

- ⚡ Bumper Sensors - Front left, front right, and back bumper sensors

- Contextual AI Integration - Touch events like "[User touched your head]" are sent to AI for natural responses

- Debounce Protection - 500ms delay prevents multiple rapid touches

- Smart Pausing - Automatically pauses during navigation/localization

User: "Create a map of this room"

Robot: "I have started mapping. I have cleared 3 existing locations (printer, kitchen, entrance) since they would be invalid with the new map coordinate system. Guide me through the room..."Note: Creating a new map automatically deletes all existing saved locations since they become invalid with the new coordinate system.

User: "Move forward 2 meters"

User: "Turn right 90 degrees"

User: "Move forward 1 meter"

# Continue exploring all areas you want mappedUser: "Save this location as entry"

Robot: "Saved location 'entry' with high precision during mapping"

User: "Save this location as kitchen"

Robot: "Saved location 'kitchen' with high precision during mapping"User: "Finish the map"

Robot: "Map completed and saved successfully. Ready for navigation!"User: "Go to the entry"

Robot: "Navigating to entry... I have arrived at the entry."

User: "Navigate to kitchen"

Robot: "Navigating to kitchen (high-precision location)..."The AI automatically knows all available locations and can suggest corrections:

User: "Go to dorm"

Robot: "I have these locations: Door, Kitchen, Entry. Did you mean 'Door'?"

User: "Yes, door"

Robot: "Navigating to door..."- 🎯 High-Precision Locations: Saved during mapping for maximum accuracy

- 🧠 Intelligent Suggestions: AI suggests similar location names

- 📍 Persistent Storage: Locations survive app restarts within the same map

- ⚡ Dynamic Updates: AI knows new locations immediately

- 🛡️ Error Prevention: No "location not found" errors

- 🗑️ Auto-Cleanup: New maps automatically clear old locations to prevent confusion

- When finishing a map: Pepper should be at the same position where mapping started

- When loading a saved map (after app restart): Place Pepper at the same starting position as when the map was created

- If Pepper is not at the starting position during localization, orientation may fail or take significantly longer

- The starting position serves as the reference point for the entire coordinate system

Tap the navigation icon (📍) in the toolbar to toggle a floating map preview (320x240dp) showing:

- Environment Map - Room layout from Pepper's sensors

- Saved Locations - Cyan markers with labels

- Navigation Status - "No map available", "Localizing...", "Navigating...", or live view when localized

When Pepper's movement is blocked, the AI automatically analyzes what's in the way:

User: "Move forward 2 meters"

Robot: "Something is blocking my path. Let me see what it is..."

# AI automatically: look_at_position → analyze_vision → return gaze

Robot: "I can see a chair blocking my path. Could you remove it?"Key Features:

- 🎯 Automatic Activation - Triggers when movement fails due to obstacles

- 📍 Smart Analysis - Looks forward and captures obstacle image automatically

- 🔄 No Manual Commands - User doesn't need to ask "what do you see?"

The app offers multiple interactive games and activities that can be started through voice commands or direct interaction.

- Voice-Activated - Start with "Let's play a quiz" or "Start a quiz game"

- Dynamic Questions - AI generates quiz questions on various topics

- Multiple Choice - Interactive answer selection with visual feedback

- Score Tracking - Real-time score display and progress tracking

- Educational & Fun - Combines learning with entertainment

Play against the AI with voice command ("Let's play Tic Tac Toe") - the game dialog opens automatically.

- Touch Interaction - Tap board positions to make your moves (you are X)

- AI Opponent - AI responds with a function call to place its O and comments naturally

- Auto-Close - Game closes 5 seconds after win/draw

The Memory Game provides a classic card-matching experience with customizable difficulty levels.

User: "Let's play a memory game"

Robot: "Starting memory game! Find matching pairs by flipping two cards."

# Memory game dialog opens with card grid- Card Grid: Face-down cards arranged in a grid pattern

- Match Pairs: Flip two cards at a time to find matching symbols

- Visual Feedback: Cards show colorful emojis when flipped

- Progress Tracking: Live counter shows moves and matched pairs

- Easy: 4 pairs (8 cards) - Perfect for beginners

- Medium: 8 pairs (16 cards) - Balanced challenge

- Hard: 12 pairs (24 cards) - Memory expert level

- Choose difficulty when starting (defaults to medium)

- Tap cards to flip them and reveal symbols

- Find matching pairs - matched cards stay face-up

- Complete the game when all pairs are found

- View stats showing total moves and completion time

- 🎨 Rich Symbols: Over 80 different emojis (animals, food, objects, etc.)

- ⏱️ Timer: Live timer tracks your completion time

- 📊 Statistics: Move counter and pairs remaining

- 🔄 Randomization: Different symbol combinations each game

- 📱 Touch Interface: Responsive card flipping animations

Draw on the tablet and let the AI guess what you drew.

User: "Let's play a drawing game"

# Canvas opens - draw with your finger

# After 2 seconds of inactivity, drawing is sent to AI

User: "What did I draw?"

Robot: "That looks like a cat!"- Touch Canvas: Freehand drawing with smooth strokes

- Smart Detection: Auto-sends after 2 seconds of inactivity

- Parallel Chat: Talk while drawing - ask anytime

- Topic Support: AI can suggest what to draw

Play simple melodies using synthesized sine waves - perfect for singing Happy Birthday!

User: "Sing Happy Birthday for me"

# Animated overlay appears with progress bar

# Robot plays the melody using generated tones

Robot: "Happy Birthday! 🎂"- Synthesized Audio: Pure sine wave tones (no MP3 files needed)

- Visual Overlay: Animated audio bars and progress indicator

- Cancellable: User can stop anytime via Stop button

- Auto-Mute: Microphone muted during playback to avoid interference

- Pre-configured: Happy Birthday melody included in tool description

- Lazy Validation - API keys only checked when features are used

- Graceful Degradation - Missing keys don't break core functionality

- Dynamic Registration - Only available tools are registered with AI

- Clean Separation - Core and optional features are independent

┌─────────────────────────────────────────────────────────────────────┐

│ ChatActivity │

│ (UI View Layer) │

└──────────────┬──────────────────────────────────────┬───────────────┘

│ │

▼ ▼

┌──────────────────────────────┐ ┌───────────────────────────────┐

│ ChatViewModel │ │ ChatLifecycleController │

│ (State & Logic) │ │ (Lifecycle & Services) │

└──────────────┬───────────────┘ └───────────────┬───────────────┘

│ │

│ │

┌───────▼────────┐ ┌───────▼────────┐

│ RealtimeSession│ │ RobotLifecycle│

│ Manager │ │ Bridge │

│ (WebSocket) │ │ (Flavor) │

└───────┬────────┘ └───────┬────────┘

│ │

│ │

┌───────▼────────┐ ┌───────▼────────┐

│ RealtimeEvent │ │ RobotController│

│ Handler │◄────────────────────┤ (Flavor) │

└───────┬────────┘ └───────┬────────┘

│ │

│ │

┌───────▼────────────────────────────────┐ │

│ ToolRegistry │ │

│ (Dynamic Tool Registration) │ │

└───────┬────────────────────────────────┘ │

│ │

│ │

┌───────▼────────────────────────────────┐ │

│ ToolContext │ │

│ (Execution Environment) │◄────┘

└───────┬────────────────────────────────┘

│

│

┌───────▼────────────────────────────────┐

│ Tool Layer │

├────────────────────────────────────────┤

│ Vision │ Movement │ Navigation │

│ Gesture │ Perception│ Internet │

│ Weather │ Face Rec │ Games ... │

└────────────────────────────────────────┘

│

│

┌───────▼────────────────────────────────┐

│ Hardware/Service Adapters │

├────────────────────────────────────────┤

│ Pepper: QiSDK, Robot Camera, │

│ Head-Based Perception (WS) │

│ Standalone: Device Camera, Stubs │

└────────────────────────────────────────┘

Flavor-Based Abstraction:

- Pepper Flavor: Uses QiSDK, robot sensors, navigation

- Standalone Flavor: Uses device camera, simulates robot features

1. User Speaks

│

▼

┌─────────────────────────────────────────────────────────────┐

│ Audio Input Mode Selection │

├─────────────────────────────┬───────────────────────────────┤

│ Mode A: Direct Audio │ Mode B: Azure Speech STT │

│ (Default - Simple) │ (Better for Dialects) │

└──────────┬──────────────────┴────────────┬──────────────────┘

│ │

│ Audio Stream │ Audio Stream

▼ ▼

┌─────────────┐ ┌──────────────┐

│ Realtime │ │ Azure Speech │

│ API WS │ │ Service │

│ (Server │ │ (STT) │

│ VAD) │ └──────┬───────┘

└─────┬───────┘ │

│ │ Transcript

│ Audio + Transcript │

│ │

└────────────┬───────────────────┘

│

▼

┌────────────────┐

│ Realtime API │

│ WebSocket │

│ (OpenAI gpt-4o │

│ or x.ai Grok) │

└────────┬───────┘

│

│ Response Events

│

┌────────▼─────────────────────────────┐

│ RealtimeEvent Handler │

│ (Process response events) │

└────┬────────────────────┬────────────┘

│ │

│ │

┌────────────▼─────────┐ ┌──────▼──────────────┐

│ response.audio.delta │ │ response.function_ │

│ (Direct Answer) │ │ call_arguments. │

│ │ │ delta │

│ No tool needed - │ │ │

│ AI responds directly │ │ (Tool Call) │

└────────┬─────────────┘ └──────┬──────────────┘

│ │

│ ▼

│ ┌─────────────────┐

│ │ ToolRegistry │

│ │ & Context │

│ └────────┬────────┘

│ │

│ │ Execute Tool

│ ▼

│ ┌─────────────────────────────┐

│ │ Tool Execution │

│ ├─────────────────────────────┤

│ │ • Vision: Capture + Analyze │

│ │ • Movement: Move/Turn │

│ │ • Gesture: Play Animation │

│ │ • Search: Query Internet │

│ │ • Navigation: Go to location│

│ └────────┬────────────────────┘

│ │

│ │ Result

│ ▼

│ ┌─────────────────┐

│ │ conversation. │

│ │ item.create │

│ │ (Tool Output) │

│ └────────┬────────┘

│ │

│ ▼

│ ┌─────────────────┐

│ │ Realtime API │

│ │ Processes │

│ │ Tool Result │

│ └────────┬────────┘

│ │

└───────────────────┘

│

│ response.audio.delta

▼

┌─────────────────┐

│ Audio Player │

│ (TTS Output) │

└────────┬────────┘

│

▼

┌─────────────────┐

│ Synchronized │

│ Gestures │

│ (If available) │

└─────────────────┘

│

▼

User Hears

Key Flow Characteristics:

- Model Flexibility: Supports OpenAI (gpt-4o-realtime, gpt-4o-mini-realtime) and x.ai Grok Voice Agent

- Dual Audio Input: Realtime API (simple) or Azure Speech (dialect quality)

- Server-side VAD: Realtime API handles turn detection automatically

- Conditional Tool Calls: AI decides when tools are needed (not every response uses tools)

- Direct Responses: Simple queries get immediate audio responses without tool execution

- Streaming Function Calls: Tool call deltas assembled in real-time when needed

- Synchronous Execution: Tools run immediately, results sent back

- Audio Streaming: TTS audio played as it arrives (low latency)

- Gesture Coordination: Animations triggered alongside speech

This application makes extensive use of Pepper Android SDK (QiSDK) high-level functions for robot control, navigation, perception, and interaction. The QiSDK provides a modern, Java-based API that integrates seamlessly with Android development.

QiSDK Limitations:

- Limited to high-level abstractions (navigation, gestures, perception)

- No direct access to low-level robot functions available in the older NAOqi SDK

- Some advanced robot capabilities (e.g., low-level motor control, direct sensor access) are not exposed

Extension via Head Server:

- The app maintains a WebSocket connection to the robot's head for the perception system

- This bidirectional communication channel could be extended to access additional low-level NAOqi functions:

- Access sensors not exposed in QiSDK

- Implement low-level motor control

- Integrate custom NAOqi modules

- Debug and monitor system-level robot state

Development Approach:

- Primary: Use QiSDK high-level functions for standard features (recommended for stability)

- Advanced: Extend the head server with custom NAOqi integrations when QiSDK is insufficient

Challenge: When using external TTS audio (Realtime API or Azure Speech), Pepper's built-in gesture generation system does not activate automatically. The robot's native TTS system typically generates synchronized gestures, but this integration is lost when streaming audio from external sources.

Solution: The app implements a gesture animation loop that runs during speech output:

- Random Explanatory Gestures: A selection of appropriate "explaining" gestures (body talk animations) are played in a continuous loop

- Duration-Based: Gestures run for the entire duration of the audio playback

- Natural Movement: Creates the impression of natural communication through continuous body language

- Non-Repetitive: Random selection prevents robotic, repetitive patterns

Implementation:

- Gestures start when audio playback begins

- Loop continues until speech output completes

- Animation selection from Pepper's built-in gesture library

- Synchronized with turn management to prevent overlap

This approach provides natural-looking robot behavior even when using external audio sources, maintaining the illusion of integrated speech and movement.

# Debug build

./gradlew assembleDebug

# Release build

./gradlew assembleRelease

# Run tests

./gradlew testapp/src/

├── main/java/ch/fhnw/pepper_realtime/ # Shared Kotlin code for all flavors

│ ├── controller/ # Application logic controllers

│ │ ├── AudioInputController.kt # Audio input & STT management

│ │ ├── AudioVolumeController.kt # System volume control

│ │ ├── VideoInputController.kt # Video streaming interface

│ │ ├── ChatInterruptController.kt # Interruption logic

│ │ ├── ChatLifecycleController.kt # App lifecycle orchestration

│ │ ├── ChatRealtimeHandler.kt # WebSocket event bridging

│ │ ├── ChatRobotLifecycleHandler.kt # Robot lifecycle events

│ │ ├── ChatSessionController.kt # Session management

│ │ ├── ChatSpeechListener.kt # Speech recognition callbacks

│ │ ├── ChatTurnListener.kt # Turn state management

│ │ └── RobotFocusManager.kt # Robot lifecycle focus management

│ ├── di/ # Dependency Injection (Hilt)

│ │ ├── AppModule.kt # App-level bindings

│ │ ├── ControllerModule.kt # Controller bindings

│ │ └── CoroutineModule.kt # Coroutine dispatcher bindings

│ ├── manager/ # Application managers

│ │ ├── ApiKeyManager.kt # API key management

│ │ ├── AudioPlayer.kt # Audio playback engine

│ │ ├── DashboardManager.kt # Dashboard state management

│ │ ├── DrawingGameManager.kt # Drawing game state & logic

│ │ ├── EventRulesManager.kt # Event rules & persistence

│ │ ├── FaceManager.kt # Face recognition & settings

│ │ ├── MelodyManager.kt # Melody playback coordination

│ │ ├── MemoryGameManager.kt # Memory game state & logic

│ │ ├── NavigationManager.kt # Navigation overlay state

│ │ ├── PermissionManager.kt # Android permission handling

│ │ ├── QuizGameManager.kt # Quiz game state & logic

│ │ ├── RealtimeAudioInputManager.kt # Audio input for Realtime API

│ │ ├── SessionImageManager.kt # Temporary image cleanup

│ │ ├── SettingsRepository.kt # Settings data repository

│ │ ├── SpeechRecognizerManager.kt # Azure Speech integration

│ │ ├── TicTacToeGameManager.kt # TicTacToe game state & logic

│ │ ├── TurnManager.kt # Conversation turn state machine

│ │ └── YouTubePlayerManager.kt # YouTube playback management

│ ├── network/ # Network & API communication

│ │ ├── HttpClientManager.kt # Shared HTTP client with suspend functions

│ │ ├── RealtimeApiProvider.kt # API provider definitions

│ │ ├── RealtimeEventHandler.kt # WebSocket event parsing

│ │ ├── RealtimeEvents.kt # WebSocket event data classes

│ │ ├── RealtimeSessionManager.kt # WebSocket connection handling

│ │ └── SshConnectionHelper.kt # SSH utilities

│ ├── service/ # Background services (shared)

│ │ ├── LocalFaceRecognitionService.kt # Local face recognition (Pepper head)

│ │ └── YouTubeSearchService.kt # YouTube Data API client

│ ├── robot/ # Robot abstraction interfaces

│ │ ├── RobotController.kt # Interface for robot control

│ │ └── RobotLifecycleBridge.kt # Interface for lifecycle events

│ ├── data/ # Data models and providers

│ │ ├── BasicEmotion.kt # Emotion definitions

│ │ ├── LocationProvider.kt # Map location management

│ │ ├── PerceptionData.kt # Human perception data classes

│ │ └── SavedLocation.kt # Location data class

│ ├── ui/ # UI Components and Helpers

│ │ ├── ChatActivity.kt # Main UI entry point

│ │ ├── ChatMessage.kt # Message data class

│ │ ├── ChatViewModel.kt # MVVM ViewModel (UI state management)

│ │ ├── UiState.kt # UI state data classes

│ │ ├── YouTubePlayerDialog.kt # YouTube player UI

│ │ └── compose/ # Jetpack Compose UI

│ │ ├── MainScreen.kt # Main app scaffold & navigation

│ │ ├── ChatScreen.kt # Main chat LazyColumn

│ │ ├── ChatTheme.kt # App theme & colors

│ │ ├── MessageBubble.kt # User/Robot message bubbles

│ │ ├── FunctionCallCard.kt # Expandable function call cards

│ │ ├── ChatImage.kt # Image messages with Coil

│ │ ├── NavigationOverlay.kt # Map & navigation overlay

│ │ ├── dashboard/ # Perception dashboard (modular)

│ │ │ ├── DashboardOverlay.kt # Main dashboard container

│ │ │ ├── DashboardComponents.kt # Shared colors, dialogs, sliders

│ │ │ ├── LiveViewContent.kt # Tab 1: Live human detection

│ │ │ ├── RadarViewContent.kt # Tab 2: Radar canvas view

│ │ │ ├── KnownFacesContent.kt # Tab 3: Face management

│ │ │ └── PerceptionSettingsContent.kt # Tab 4: Settings

│ │ ├── games/ # Game UI components

│ │ │ ├── DrawingCanvasDialog.kt # Drawing canvas UI

│ │ │ ├── TicTacToeDialog.kt # TicTacToe game UI

│ │ │ ├── QuizDialog.kt # Quiz question UI

│ │ │ └── MemoryGameDialog.kt # Memory game UI

│ │ └── settings/ # Settings UI

│ │ ├── SettingsScreen.kt # Main settings screen

│ │ └── SettingsComponents.kt # Reusable settings components

│ └── tools/ # Tool implementations (shared logic)

│ ├── Tool.kt # Tool interface

│ ├── ToolContext.kt # Shared tool context

│ ├── ToolContextFactory.kt # Tool context creation

│ ├── ToolRegistry.kt # Tool registration system

│ ├── games/ # Game tools

│ │ ├── DrawingGameTool.kt # Start drawing game tool

│ │ ├── MemoryCard.kt # Memory card data class

│ │ ├── MemoryGameTool.kt # Start memory game tool

│ │ ├── QuizTool.kt # Present quiz question tool

│ │ ├── TicTacToeGame.kt # TicTacToe game logic

│ │ ├── TicTacToeStartTool.kt # Start TicTacToe tool

│ │ └── TicTacToeMoveTool.kt # Make TicTacToe move tool

│ └── [other categories]/... # Other tools (entertainment, information, etc.)

│

├── pepper/java/ch/fhnw/pepper_realtime/ # Pepper-specific implementations

│ ├── controller/

│ │ ├── GestureController.kt # Real Pepper animations

│ │ ├── MovementController.kt # Real robot movement

│ │ └── VideoInputControllerImpl.kt # Pepper camera video streaming

│ ├── manager/

│ │ ├── NavigationServiceManager.kt # Real navigation system

│ │ ├── LocalizationCoordinator.kt # Real localization logic

│ │ └── TouchSensorManager.kt # Real touch sensor handling

│ ├── service/

│ │ ├── PerceptionService.kt # Real human detection (QiSDK)

│ │ └── VisionService.kt # Pepper head camera

│ ├── robot/

│ │ ├── RobotControllerImpl.kt # Real QiContext implementation

│ │ ├── RobotLifecycleBridgeImpl.kt # QiSDK lifecycle integration

│ │ └── RobotSafetyGuard.kt # Movement safety checks

│ ├── data/

│ │ └── NavigationMapCache.kt # Real map data caching

│ └── ui/

│ └── MapPreviewView.kt # Real map visualization view

│

└── standalone/java/ch/fhnw/pepper_realtime/ # Standalone stub implementations

├── controller/

│ ├── GestureController.kt # Stub (log only)

│ ├── MovementController.kt # Stub (log only)

│ └── VideoInputControllerImpl.kt # Android Camera2 video streaming

├── manager/

│ ├── NavigationServiceManager.kt # Stub (simulated navigation)

│ ├── LocalizationCoordinator.kt # Stub (simulated localization)

│ └── TouchSensorManager.kt # Stub (log only)

├── service/

│ ├── PerceptionService.kt # Stub (simulated detection)

│ └── VisionService.kt # Android Camera2 API implementation

├── robot/

│ ├── RobotControllerImpl.kt # Stub implementation

│ ├── RobotLifecycleBridgeImpl.kt # Simulated lifecycle

│ └── RobotSafetyGuard.kt # Stub implementation

├── data/

│ └── NavigationMapCache.kt # Stub (no map data)

└── ui/

└── MapPreviewView.kt # Stub (empty view)

local.properties.example- API key templateapp/build.gradle- Build configuration and key injectionapp/src/main/assets/jokes.json- Joke database

- Check your OpenAI, Azure OpenAI, x.ai, or Google API key and endpoint

- Verify internet connectivity

- For Azure: Ensure the deployment name matches your Azure setup

- Vision works automatically with

gpt-realtimeandgpt-realtime-minimodels (built-in) - For other models (preview models, x.ai Grok): Add

GROQ_API_KEYtolocal.propertiesfor Groq-based vision - Check camera permissions and robot focus

- Each feature shows helpful setup instructions

- Add the corresponding API key to

local.properties - Rebuild and reinstall the app

"No map available for navigation"

- Create an environment map first using "Create a map"

- Guide Pepper through the room completely

- Finish the mapping process with "Finish the map"

"Location not found"

- The AI now suggests available locations automatically

- Check spelling and pronunciation of location names

- Use the suggested location names from the AI

"Mapping timeout"

- Ensure room has good lighting and visual features

- Avoid rooms with mostly blank walls or mirrors

- Try mapping smaller sections of large rooms

- Check for obstacles blocking Pepper's movement

"High precision vs standard locations"

- Locations saved during active mapping are most accurate

- Locations saved after mapping are less precise but still functional

- For best results, save important locations during the mapping process

"My saved locations disappeared"

- Creating a new map automatically deletes all existing locations

- This prevents navigation errors caused by incompatible coordinate systems

- Each map creates its own coordinate system, making old locations invalid

- Simply re-save your important locations after creating the new map

The app provides detailed logs for debugging:

# View all logs

adb logcat | grep -E "(ChatActivity|ApiKeyManager|ToolExecutor)"

# View navigation logs specifically

adb logcat | grep -E "(MovementController|ToolExecutor.*navigate|ToolRegistry.*location)"

# View mapping logs

adb logcat | grep -E "(LocalizeAndMap|mapping|create_environment_map)"- Fork the repository

- Create a feature branch

- Add your improvements

- Test thoroughly

- Submit a pull request

- Follow existing Kotlin code style and conventions

- Use Kotlin idioms (null safety, extension functions, data classes)

- Add proper error handling with Result types or sealed classes

- Update documentation

- Test with missing API keys

This project is actively maintained. Issues are monitored, and pull requests for bug fixes and feature enhancements are welcome and will be reviewed.

This project is licensed under the MIT License - see the LICENSE file for details.

- OpenAI - Realtime API

- x.ai - Grok Voice Agent API

- SoftBank Robotics - Pepper robot platform

- Microsoft - Azure Speech and OpenAI services

- Groq - Alternative vision analysis provider

- Tavily - Internet search API

- OpenWeatherMap - Weather data

Happy coding with Pepper! 🤖✨