
@feich-ms I tried this. I'd like to use this PR to iterate on refinements. Can you try this sample (follow the README to run cross-train as well as luis:build) and figure out why the output LUIS appIds are not printed on screen when --out is not specified? I also see …


@vishwacsena, I figured out the cause and pushed a …


Thanks @feich-ms, can you help try this sample end to end? I just pulled your latest changes and tried it, and I see an empty QnA Maker KB although qnamaker:build says everything was published.


@vishwacsena, I just tried it and indeed there is no content in the KB. When I reduced the QA pairs and questions in chitchat.qna, it worked, so I'm assuming QnA Maker limits the number of questions in a single KB pair: our deferToLuis pair receives all of the parent's QnA questions. Here is chitchat.qna. Looking at the cross-trained .qna files, two of them have thousands of questions in the deferToLuis pair, so this may be the root cause.


Ah, yes. Could we add logic to break any of these into multiple QnA pairs with the same answer, so that we stay within the QnA Maker limits? https://docs.microsoft.com/en-us/azure/cognitive-services/qnamaker/limits#knowledge-base-content-limits I mean, in cross-train we validate QnA pairs and split them up if needed. It is also odd that the service does not return an error, or does it?


It seems it didn't throw any errors, but I will double-check. I will also break large QnA pairs into smaller ones today.

…terances and optimize luConverter to make sure there is only one whitespace between words in utterances

…er in cross train and fix error not thrown issue in qnamaker
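The first commit above mentions normalizing utterances so that only one whitespace separates words. A minimal sketch of that normalization, assuming it means trimming the ends and collapsing every whitespace run into a single space; `normalizeUtterance` is a hypothetical name, not the actual luConverter code:

```typescript
// Hypothetical helper (not the actual luConverter code): trim an utterance and
// collapse every run of whitespace (spaces, tabs, newlines) into a single space.
function normalizeUtterance(utterance: string): string {
  return utterance.trim().replace(/\s+/g, " ");
}

console.log(normalizeUtterance("  book   a\tflight  ")); // book a flight
```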


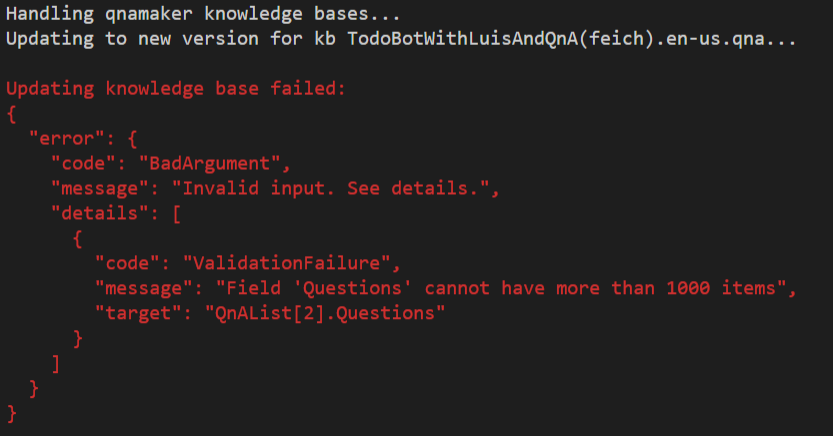

@vishwacsena, I added logic in cross-train to break a large QA pair into multiple smaller ones. The limit on the number of questions per answer in the replace API seems to be 1000 rather than 300. I tested this manually: with more than 1000 questions (e.g. 1001), the API throws an error like the one in the screenshot, while 1000 or fewer works fine. Unit tests are also added for the split logic. I also fixed the error handling so that errors returned by the API are surfaced: any API call failure is now printed to the console, as in the screenshot.
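The split described above can be sketched as chunking a pair's questions into groups of at most 1000, each group keeping the same answer and metadata. This is a minimal sketch, not the PR's code: the `QnaPair` shape and `splitLargePair` name are hypothetical, and the 1000 limit is the empirically observed value from the comment above, not a documented constant.

```typescript
// Hypothetical shape of a QnA pair; the real bf-cli types differ.
interface QnaPair {
  questions: string[];
  answer: string;
  metadata: { name: string; value: string }[];
}

// Limit observed manually against the replace API (see the comment above).
const MAX_QUESTIONS_PER_PAIR = 1000;

// Split one pair into several pairs, each with at most `limit` questions,
// preserving question order and copying the answer and metadata.
function splitLargePair(pair: QnaPair, limit: number = MAX_QUESTIONS_PER_PAIR): QnaPair[] {
  if (pair.questions.length <= limit) return [pair];
  const result: QnaPair[] = [];
  for (let i = 0; i < pair.questions.length; i += limit) {
    result.push({
      questions: pair.questions.slice(i, i + limit),
      answer: pair.answer,
      metadata: pair.metadata.map(m => ({ ...m })),
    });
  }
  return result;
}

const pair: QnaPair = {
  questions: Array.from({ length: 2500 }, (_, i) => `question ${i}`),
  answer: "some answer",
  metadata: [],
};
console.log(splitLargePair(pair).map(p => p.questions.length)); // [ 1000, 1000, 500 ]
```

Copying the metadata into every chunk matters because a filtered query should still be able to match any of the split pairs.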


@feich-ms, can you explain why we need to lowercase the file names? This is hard to explain to users: because we lowercase the file names, their casing no longer matches the recognizer configuration.


The rest looks good.


@vishwacsena, the lowercasing was introduced when resolving issue …


@vishwacsena, the file name casing issue is resolved by the latest commit in cross-train. It now writes out the cross-trained content with the original file names (no lowercasing). The PR still resolves the issue …

vishwacsena left a comment


Verified changes functionally.


Thanks @feich-ms. All looks good. Approved.

Resolve cross train related issues in #800

Cross train

--config is relative to --in and not relative to the pwd()

Resolved: fixed in this PR for both LUIS and QnA Maker cross-train.
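Reading the issue above as "a relative --config path was resolved against the --in folder, whereas users expect it to be resolved against the current working directory", the fix amounts to resolving it against `process.cwd()`. A minimal sketch under that assumption; `resolveConfigPath` is a hypothetical helper, not the bf-cli implementation:

```typescript
import * as path from "path";

// Hypothetical helper: resolve a relative --config argument against the
// directory the command was run from, instead of against the --in folder.
function resolveConfigPath(configArg: string, cwd: string = process.cwd()): string {
  // path.resolve returns an already-absolute configArg (normalized) unchanged.
  return path.resolve(cwd, configArg);
}

// Example: invoked from /work with --in ./dialogs --config ./cross-train.config
console.log(resolveConfigPath("./cross-train.config", "/work"));
```

Passing `cwd` as a parameter with a `process.cwd()` default keeps the helper easy to unit-test without changing the process's working directory.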

The cross-train config is case-sensitive with respect to file names.

Resolved: fixed in this PR, with tests added to cover it. All file ids in the config and in the luObject array are lowercased.
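Given the later follow-up in this thread that output files keep their original names, one way to get case-insensitive matching is to lowercase only the comparison key and keep the original id as the value. A minimal sketch of that idea; `buildIdIndex`, `findOriginalId`, and the file names are hypothetical:

```typescript
// Hypothetical sketch: index file ids by their lowercased form so config entries
// match regardless of casing, while each file keeps its original name for output.
function buildIdIndex(fileIds: string[]): Map<string, string> {
  const index = new Map<string, string>();
  for (const id of fileIds) {
    index.set(id.toLowerCase(), id); // key: comparison form, value: original name
  }
  return index;
}

function findOriginalId(index: Map<string, string>, configId: string): string | undefined {
  return index.get(configId.toLowerCase());
}

const index = buildIdIndex(["Main.lu", "Dialogs/BookFlight.lu"]);
console.log(findOriginalId(index, "main.lu")); // Main.lu
console.log(findOriginalId(index, "dialogs/bookflight.LU")); // Dialogs/BookFlight.lu
```

One caveat of this approach: two files whose names differ only in casing would collide on the same key, with the later one winning.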

Cross-train should copy only the files specified in the cross-train config to the output, but all references should be fully resolved before they are copied over.

Resolved: fixed in this PR, with tests adjusted to cover it. Only the .lu files in the config and their corresponding .qna files are written to the --out folder.

The QnA metadata property is not applied to references.

Resolved: fixed in this PR. All imported references are resolved into the current content, and metadata is added to them as well.

Apply cross-train for .lu and .qna documents after fully resolving imports.

Resolved: fixed in this PR. All imports are fully resolved.

If multiple source .lu or .qna files pull in the same reference, then for QnA the metadata pairs need to be added to the same QnA pair (e.g. root as well as dialog A pulling in some chitchat utterances).

Resolved: fixed automatically once imports are resolved. Note that QnA Maker does not allow a single KB pair to have the same metadata key with multiple values, so a pair whose metadata has one key with two different values is written out as two KB pairs.
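The constraint above (one value per metadata key per KB pair) can be handled by expanding a pair into one pair per combination of values. This is a minimal sketch of one possible strategy, not necessarily what the PR does; the `KbPair` shape, `splitByMetadata`, and the `dialogName` key are hypothetical:

```typescript
interface MetaItem { name: string; value: string; }
interface KbPair { questions: string[]; answer: string; metadata: MetaItem[]; }

// Hypothetical sketch: QnA Maker rejects a pair that repeats a metadata key with
// different values, so expand such a pair into one pair per combination of values.
function splitByMetadata(pair: KbPair): KbPair[] {
  // Distinct values per key, in first-seen order.
  const valuesByKey = new Map<string, string[]>();
  for (const { name, value } of pair.metadata) {
    const values = valuesByKey.get(name) ?? [];
    if (values.indexOf(value) === -1) values.push(value);
    valuesByKey.set(name, values);
  }

  // Cartesian product: every combination holds exactly one value per key.
  let combos: MetaItem[][] = [[]];
  valuesByKey.forEach((values, name) => {
    const next: MetaItem[][] = [];
    for (const combo of combos) {
      for (const value of values) next.push([...combo, { name, value }]);
    }
    combos = next;
  });

  return combos.map(metadata => ({ questions: [...pair.questions], answer: pair.answer, metadata }));
}

// Root and dialog A both pull in the same chitchat pair.
const shared: KbPair = {
  questions: ["hi", "hello"],
  answer: "Hello!",
  metadata: [
    { name: "dialogName", value: "root" },
    { name: "dialogName", value: "dialogA" },
  ],
};
console.log(splitByMetadata(shared).map(p => p.metadata[0].value)); // [ 'root', 'dialogA' ]
```

With a single repeated key this yields exactly the "two KB pairs" described above; with several repeated keys the number of pairs grows multiplicatively, which is worth keeping in mind alongside the per-pair question limit.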

Cross-train does not work when the child dialog does not have any LU content. AllowInterruption=true on that dialog does not seem to work.

Resolved: fixed in this PR to support cross-training empty files, so an empty .lu or .qna file still gets cross-trained results.

All above issues are covered by unit tests.