- Documentation: http://www.cril.univ-artois.fr/pyxai/

- Git: https://github.com/crillab/pyxai

- Installation: http://www.cril.univ-artois.fr/pyxai/documentation/installation/

PyXAI (Python eXplainable AI) is a Python library (version 3.6 or later) allowing to bring explanations of various forms suited to (regression or classification) tree-based ML models (Decision Trees, Random Forests, Boosted Trees, ...). In contrast to many approaches to XAI (SHAP, Lime, ...), PyXAI algorithms are model-specific. Furthermore, PyXAI algorithms guarantee certain properties about the explanations generated, that can be of several types:

-

Abductive explanations for an instance

$X$ are intended to explain why$X$ has been classified in the way it has been classified by the ML model (thus, addressing the “Why?” question). For the regression tasks, abductive explanations for$X$ are intended to explain why the regression value on$X$ is in a given interval. -

Contrastive explanations for

$X$ is to explain why$X$ has not been classified by the ML model as the user expected it (thus, addressing the “Why not?” question).

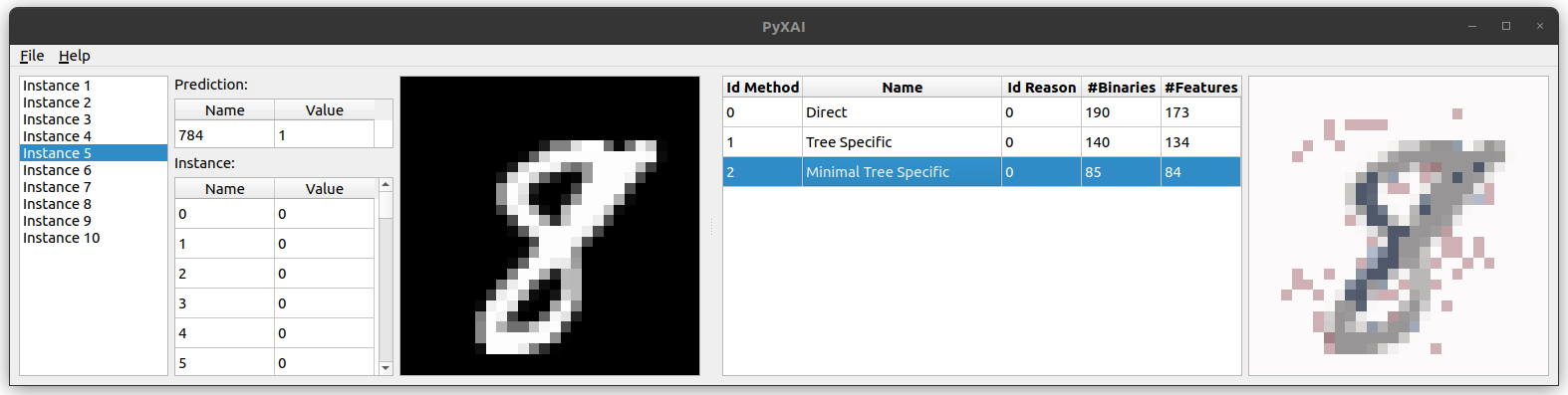

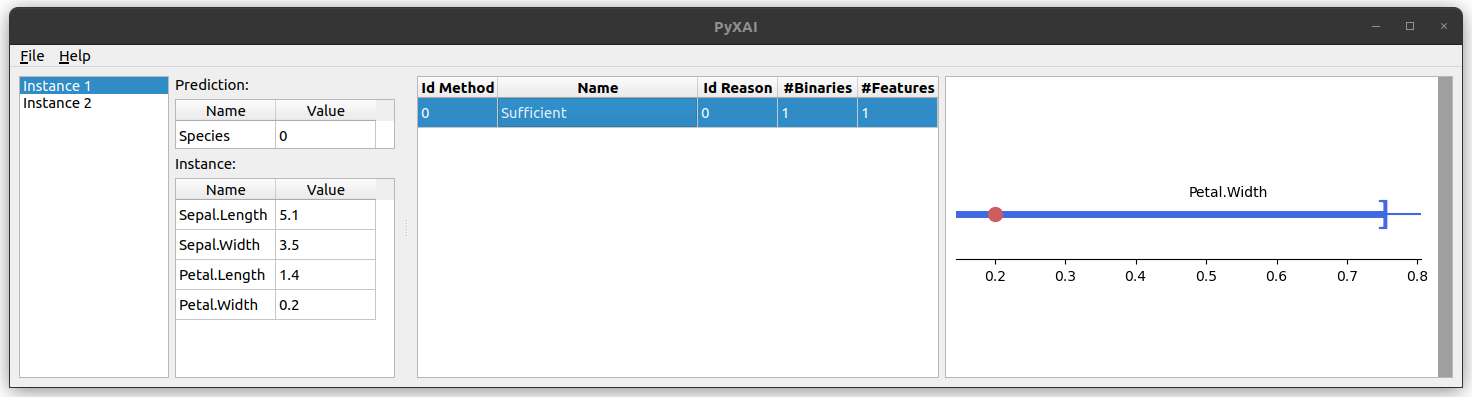

PyXAI's main steps for producing explanations. PyXAI's Graphical User Interface (GUI) for visualizing explanations.New features in version 1.0.0:

- Regression for Boosted Trees with XGBoost or LightGBM

- Adding Theories (knowledge about the dataset)

- Easier model import (automatic detection of model types)

- PyXAI's Graphical User Interface (GUI): displaying, loading and saving explanations.

- Supports multiple image formats for imaging datasets

- Supports data pre-processing (tool for preparing and cleansing a dataset)

- Unit Tests with the unittest module

The most popular approaches (SHAP, Lime, ...) to XAI are model-agnostic, but do not offer any guarantees of rigor. A number of works have highlighted several misconceptions about informal approaches to XAI (see the related papers). Contrastingly, PyXAI algorithms rely on logic-based, model-precise approaches for computing explanations. Although formal explainability has a number of drawbacks, particularly in terms of the computational complexity of logical reasoning needed to derive explanations, steady progress has been made since its inception.

Models are the resulting objects of an experimental ML protocol through a chosen cross-validation method (for example, the result of a training phase on a classifier). Importantly, in PyXAI, there is a complete separation between the learning phase and the explaining phase: you produce/load/save models, and you find explanations for some instances given such models. Currently, with PyXAI, you can use methods to find explanations suited to different ML models for classification or regression tasks:

- Decision Tree (DT)

- Random Forest (RF)

- Boosted Tree (Gradient boosting) (BT)

In addition to finding explanations, PyXAI also provides methods that perform operations (production, saving, loading) on models and instances. Currently, these methods are available for three ML libraries:

- Scikit-learn: a software machine learning library

- XGBoost: an optimized distributed gradient boosting library

- LightGBM: a gradient boosting framework that uses tree based learning algorithms

It is possible to also leverage PyXAI to find explanations suited to models learned using other libraries.

In this website, you can find all what you need to know about PyXAI, with more than 10 Jupyter Notebooks, including:

- The installation guide and the quick start

- About obtaining models:

- How to prepare and clean a dataset using the PyXAI preprocessor object?

- How to import a model, whatever its format? Importing Models

- How to generate a model using a ML cross-validation method? Generating Models

- How to build a model from trees directly built by the user? Building Models

- How to save and load models with the PyXAI learning module? Saving/Loading Models

- About obtaining explanations:

- The concepts of the PyXAI explainer module: Concepts

- How to use a time limit? Time Limit

- The PyXAI library offers the possibility to process user preferences (prefer some explanations to others or exclude some features): Preferences

- Theories are knowledge about the dataset. PyXAI offers the possibility of encoding a theory when calculating explanations in order to avoid calculating impossible explanations: Theories

- How to compute explanations for classification tasks? Explaining Classification

- How to compute explanations for regression tasks? Explaining Regression

- How to use the PyXAI's Graphical User Interface (GUI) for visualizing explanations?

Here is an example (it comes from the Quick Start page):

from pyxai import Learning, Explainer

learner = Learning.Scikitlearn("tests/iris.csv", learner_type=Learning.CLASSIFICATION)

model = learner.evaluate(method=Learning.HOLD_OUT, output=Learning.DT)

instance, prediction = learner.get_instances(model, n=1, correct=True, predictions=[0])

explainer = Explainer.initialize(model, instance)

print("instance:", instance)

print("binary representation:", explainer.binary_representation)

sufficient_reason = explainer.sufficient_reason(n=1)

print("sufficient_reason:", sufficient_reason)

print("to_features:", explainer.to_features(sufficient_reason))

instance, prediction = learner.get_instances(model, n=1, correct=False)

explainer.set_instance(instance)

contrastive_reason = explainer.contrastive_reason()

print("contrastive reason", contrastive_reason)

print("to_features:", explainer.to_features(contrastive_reason, contrastive=True))

explainer.open_GUI()As illustrated by this example, with a few lines of code, PyXAI allows you to train a model, extract instances, and get explanations about the classifications made.