Setup Langfuse instrumentation for Google ADK agent #8

Conversation

- Added `langfuse`, `opentelemetry-sdk`, and `openinference-instrumentation-google-adk` to `adk/pyproject.toml`.
- Created `adk/cofacts-ai/instrumentation.py` to handle Langfuse initialization.
- Modified `adk/cofacts-ai/agent.py` to import and run instrumentation setup on startup.
- Updated `adk/uv.lock` via `pnpm install:agent`.

Co-authored-by: MrOrz <108608+MrOrz@users.noreply.github.com>


Summary of Changes

Hello @MrOrz, I'm Gemini Code Assist! I'm currently reviewing this pull request and will post my feedback shortly. In the meantime, here's a summary to help you and other reviewers quickly get up to speed!

This pull request introduces comprehensive observability capabilities to the Google ADK agent by integrating Langfuse. The changes enable the agent to trace and monitor its operations, providing valuable insights into its behavior and performance. This enhancement is crucial for debugging, performance optimization, and understanding the agent's interactions in production environments.


Code Review

This pull request correctly sets up Langfuse instrumentation for the Google ADK agent by adding the necessary dependencies and initialization logic. The implementation is robust, handling missing dependencies and credentials gracefully. I've suggested a small improvement to set the global OpenTelemetry tracer provider, which will make the instrumentation more robust for future extensions. Overall, this is a solid addition for observability.


@jules I think the current implementation is more complicated than the Langfuse official doc: https://langfuse.com/integrations/frameworks/google-adk

If some changes are not actually necessary, please consider removing them.

Step 2: Set up environment variables

Fill in your Langfuse and Gemini API keys.

    import os

    # Get keys for your project from the project settings page: https://cloud.langfuse.com
    os.environ["LANGFUSE_PUBLIC_KEY"] = "pk-lf-..."
    os.environ["LANGFUSE_SECRET_KEY"] = "sk-lf-..."
    os.environ["LANGFUSE_BASE_URL"] = "https://cloud.langfuse.com"  # 🇪🇺 EU region
    # os.environ["LANGFUSE_BASE_URL"] = "https://us.cloud.langfuse.com"  # 🇺🇸 US region

    # Gemini API Key (Get from Google AI Studio: https://aistudio.google.com/app/apikey)
    os.environ["GOOGLE_API_KEY"] = "..."

With the environment variables set, we can now initialize the Langfuse client.

    from langfuse import get_client

    langfuse = get_client()

    # Verify connection
    if langfuse.auth_check():
        print("Langfuse client is authenticated and ready!")
    else:
        print("Authentication failed. Please check your credentials and host.")

Step 3: OpenTelemetry Instrumentation

Use the `GoogleADKInstrumentor` to instrument ADK calls:

    from openinference.instrumentation.google_adk import GoogleADKInstrumentor

    GoogleADKInstrumentor().instrument()

Step 3: Build a hello world agent

Every tool call and model completion is captured as an OpenTelemetry span and forwarded to Langfuse.

    from google.adk.agents import Agent

from google.adk.runners import Runner

from google.adk.sessions import InMemorySessionService

from google.genai import types

def say_hello():

return {"greeting": "Hello Langfuse 👋"}

agent = Agent(

name="hello_agent",

model="gemini-2.0-flash",

instruction="Always greet using the say_hello tool.",

tools=[say_hello],

)

APP_NAME = "hello_app"

USER_ID = "demo-user"

SESSION_ID = "demo-session"

session_service = InMemorySessionService()

# create_session is async → await it in notebooks

await session_service.create_session(app_name=APP_NAME, user_id=USER_ID, session_id=SESSION_ID)

runner = Runner(agent=agent, app_name=APP_NAME, session_service=session_service)

user_msg = types.Content(role="user", parts=[types.Part(text="hi")])

for event in runner.run(user_id=USER_ID, session_id=SESSION_ID, new_message=user_msg):

if event.is_final_response():

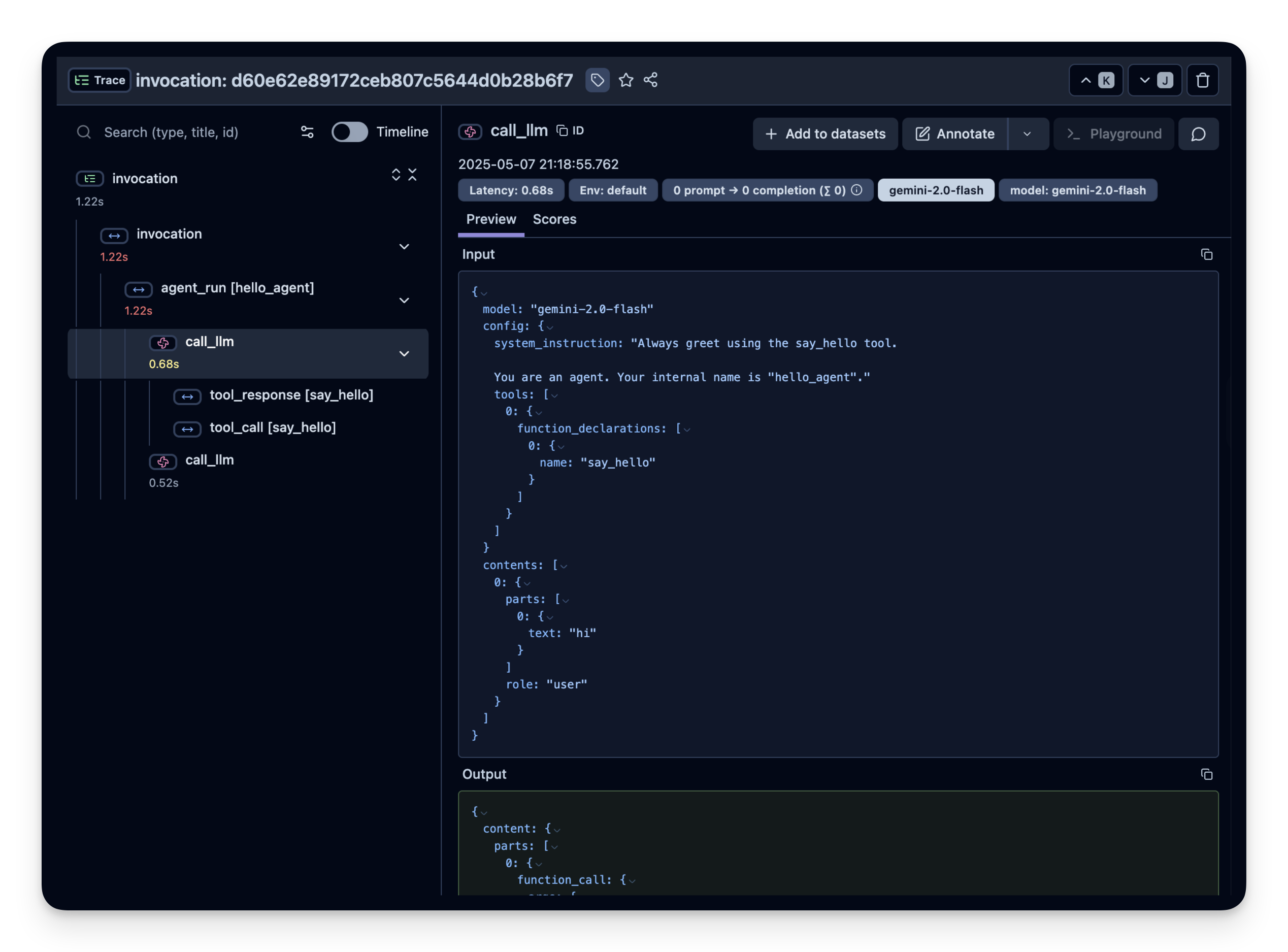

print(event.content.parts[0].text)Step 4: View the trace in LangfuseHead over to your Langfuse dashboard → Traces. You should see traces including all tool calls and model inputs/outputs. import { Tabs, Cards } from "nextra/components"; Interoperability with the Python SDKYou can use this integration together with the Langfuse SDKs to add additional attributes to the trace. <Tabs items={["Decorator", "Context Manager"]}> The from langfuse import observe, propagate_attributes, get_client

    langfuse = get_client()

    @observe()
    def my_llm_pipeline(input):
        # Add additional attributes (user_id, session_id, metadata, version, tags) to all spans created within this execution scope
        with propagate_attributes(
            user_id="user_123",
            session_id="session_abc",
            tags=["agent", "my-trace"],
            metadata={"email": "user@langfuse.com"},
            version="1.0.0"
        ):
            # YOUR APPLICATION CODE HERE
            result = call_llm(input)

            # Update the trace input and output
            langfuse.update_current_trace(
                input=input,
                output=result,
            )

            return result

Learn more about using the Decorator in the Langfuse SDK instrumentation docs.

Context Manager

The Context Manager allows you to wrap your instrumented code using context managers.

    from langfuse import get_client, propagate_attributes

    langfuse = get_client()

    with langfuse.start_as_current_observation(as_type="span", name="my-trace") as span:
        # Add additional attributes (user_id, session_id, metadata, version, tags) to all spans created within this execution scope
        with propagate_attributes(
            user_id="user_123",
            session_id="session_abc",
            metadata={"experiment": "variant_a", "env": "prod"},
            version="1.0"
        ):
            # YOUR APPLICATION CODE HERE
            result = call_llm("some input")

            # Update the trace input and output
            langfuse.update_current_trace(
                input="some input",
                output=result,
            )

    # Flush events in short-lived applications
    langfuse.flush()

Learn more about using the Context Manager in the Langfuse SDK instrumentation docs.

Troubleshooting

No traces appearing

First, enable debug mode in the Python SDK:

    export LANGFUSE_DEBUG="True"

Then run your application and check the debug logs.

Unwanted observations in Langfuse

The Langfuse SDK is based on OpenTelemetry. Other libraries in your application may emit OTel spans that are not relevant to you. These still count toward your billable units, so you should filter them out. See Unwanted spans in Langfuse for details.

Missing attributes

Some attributes may be stored in the metadata object of the observation rather than being mapped to the Langfuse data model. If a mapping or integration does not work as expected, please raise an issue on GitHub.

Next Steps

Once you have instrumented your code, you can manage, evaluate and debug your application.

Thank you for the feedback. I've simplified the |

- Refactored `adk/cofacts-ai/instrumentation.py` to be simpler and more aligned with official documentation.
- Removed manual credential checks and exception handling, relying on library defaults and environment variables.
- Configured global tracer provider with Langfuse exporter and batch processor.
- Addressed PR feedback regarding implementation complexity.

Co-authored-by: MrOrz <108608+MrOrz@users.noreply.github.com>

Replace manual OpenTelemetry TracerProvider/BatchSpanProcessor/LangfuseExporter setup with the simpler `get_client()` approach from official Langfuse docs. Adds `auth_check()` to verify credentials before instrumenting.

Co-Authored-By: Claude Sonnet 4.6 <noreply@anthropic.com>
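The approach this commit describes could look roughly like the sketch below. It is not the code from `adk/cofacts-ai/instrumentation.py`: the function name `setup_instrumentation`, the `env` parameter, and the choice to skip quietly when credentials or packages are missing are assumptions made for illustration. Only `get_client()`, `auth_check()`, and `GoogleADKInstrumentor().instrument()` are taken from the Langfuse documentation quoted earlier in this thread.

```python
import os
from typing import Mapping


def setup_instrumentation(env: Mapping[str, str] = os.environ) -> bool:
    """Enable Langfuse tracing for ADK if possible; return True when enabled.

    Hypothetical helper: the name, signature, and skip behaviour are
    assumptions, not the PR's actual implementation.
    """
    # Skip early when the Langfuse credentials are not configured.
    if not (env.get("LANGFUSE_PUBLIC_KEY") and env.get("LANGFUSE_SECRET_KEY")):
        return False

    # Import lazily so the agent still starts without the optional packages.
    try:
        from langfuse import get_client
        from openinference.instrumentation.google_adk import GoogleADKInstrumentor
    except ImportError:
        return False

    # get_client() reads its settings from the process environment,
    # so `env` above is only used for the presence check.
    client = get_client()

    # Verify credentials before instrumenting, as the commit message says.
    if not client.auth_check():
        return False

    GoogleADKInstrumentor().instrument()
    return True
```

Under these assumptions, `agent.py` would call `setup_instrumentation()` once at startup, before the agent is constructed, and could log the returned flag to show whether tracing is active.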

Documents required environment variables including Google API key and optional Langfuse credentials for observability instrumentation.

Co-Authored-By: Claude Sonnet 4.6 <noreply@anthropic.com>
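The split between required and optional variables can be illustrated with a small check like the one below. This is a sketch only: the helper `check_environment` and the shape of its return value are hypothetical, and treating `GOOGLE_API_KEY` as the single required variable is an assumption based on the variable names in the Langfuse guide quoted above, not on the documentation this commit adds.

```python
import os
from typing import Mapping

# Assumed grouping, based on the variable names quoted in this thread.
REQUIRED_VARS = ("GOOGLE_API_KEY",)
LANGFUSE_VARS = ("LANGFUSE_PUBLIC_KEY", "LANGFUSE_SECRET_KEY")


def check_environment(env: Mapping[str, str] = os.environ) -> dict:
    """Report missing required variables and whether Langfuse can be enabled."""
    # Empty strings count as missing, same as unset variables.
    missing_required = [name for name in REQUIRED_VARS if not env.get(name)]
    missing_langfuse = [name for name in LANGFUSE_VARS if not env.get(name)]
    return {
        "missing_required": missing_required,
        # Langfuse is optional: it is enabled only when both keys are present.
        "langfuse_enabled": not missing_langfuse,
        "missing_langfuse": missing_langfuse,
    }
```

A startup script could call `check_environment()` and stop when `missing_required` is non-empty, while treating a missing Langfuse key as a reason to run without tracing.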

This PR sets up Langfuse observability for the Google ADK agent. It adds the necessary dependencies and initializes the instrumentation in the agent's entry point. The instrumentation is configured to use environment variables `LANGFUSE_PUBLIC_KEY` and `LANGFUSE_SECRET_KEY`.

PR created automatically by Jules for task 6582592787464144360 started by @MrOrz