Implement Standard Metrics - Free Memory#708

Implement Standard Metrics - Free Memory#708reyang merged 45 commits intocensus-instrumentation:masterfrom

Conversation

contrib/opencensus-ext-azure/opencensus/ext/azure/metrics_exporter/__init__.py

Outdated

Show resolved

Hide resolved

| exporter.enable_standard_metrics() | ||

| # Separate thread for recording of metrics | ||

| transport.get_recorder_thread(exporter.record_standard_metrics, | ||

| interval=options_.export_interval) |

There was a problem hiding this comment.

Not sure if the recording interval should be the same as export interval. What are the other SDKs doing today?

There was a problem hiding this comment.

In the Node SDK, the intervals for recording and exporting are different and can be configured. I was using the same interval because I was thinking of simplicity for the user so they do not have to configure anything in options. Perhaps derive the recording interval from the export interval (maybe interval-%age), as long as the recording happens prior.

There was a problem hiding this comment.

@reyang We can also add another option in Options. What do you think?

There was a problem hiding this comment.

I wonder how we plan to do this in OpenCensus/OpenTelemetry, it is not supposed to be exporter specific I guess?

There was a problem hiding this comment.

If it won't be exporter specific, we can abstract out Options to have OpenCensus default behaviour and then implement exporter specific behaviour. Just an idea.

| install_requires=[ | ||

| 'opencensus >= 0.7.dev0, < 1.0.0', | ||

| 'requests >= 2.19.0', | ||

| 'psutil >= 5.6.3', |

opencensus/metrics/transport.py

Outdated

| :return: A running thread responsible for executing record_metrics(). | ||

|

|

||

| """ | ||

| tt = MetricExporterTask(interval, record_metrics) |

There was a problem hiding this comment.

Maybe we should find another name for MetricExporterTask as we started to use it for non-exporting tasks.

opencensus/metrics/transport.py

Outdated

| :return: A running thread responsible for executing record_metrics(). | ||

|

|

||

| """ | ||

| tt = MetricExporterTask(interval, record_metrics) |

There was a problem hiding this comment.

Maybe we should find another name for MetricExporterTask as we started to use it for non-exporting tasks.

There was a problem hiding this comment.

Good idea. I will use something more appropriate.

| def enable_standard_metrics(self): | ||

| # Sets up stats related objects to begin | ||

| # recording standard metrics | ||

| stats_ = stats.stats |

There was a problem hiding this comment.

Curious, why not use stats_module.stats for consistency?

There was a problem hiding this comment.

I'll use stats_module.stats for consistency.

| # Function called periodically to record standard metrics | ||

| vmem = psutil.virtual_memory() | ||

| available_memory = vmem.available | ||

| available_measure = self.standard_metrics_measure_map[StandardMetricType.AVAILABLE_MEMORY] |

There was a problem hiding this comment.

The 'available_measure' name is confusing.

There was a problem hiding this comment.

Good catch. I will match with the previous naming.

| # Uniqueness should be based off the name of the view and | ||

| # measure. A warning message in the logs will be shown | ||

| # if there are duplicate names | ||

| self.standard_metrics_map = stats_recorder.new_measurement_map() |

There was a problem hiding this comment.

Consider decoupling the actual logic from the exporter class. We will need to add several default metrics, need to control the size of this file and have a better organized way.

c24t

left a comment

c24t

left a comment

There was a problem hiding this comment.

Have you considered using gauges for this? This is the ideal use case: instantaneous measurements that don't need to be aggregated and can be collected at export time.

If you use a derived gauge here it'll call psutil only when you collect the metrics for export:

import psutil

from opencensus.metrics.export.gauge import Registry

from opencensus.metrics.export.gauge import DerivedLongGauge

def get_available_memory():

return psutil.virtual_memory().available

gauge = DerivedLongGauge('available_memory', 'Bytes of available memory on the host', 'byte', [])

gauge.create_default_time_series(get_available_memory)

reg = Registry()

reg.add_gauge(gauge)

[metric] = reg.get_metrics()

print(metric)

print(metric.time_series)

print(metric.time_series[0].points[0].value.value)gives:

>>> Metric(time_series=<1 TimeSeries>, descriptor.name="available_memory")

>>> [TimeSeries([ValueLong(6316986368)], label_values=(), start_timestamp=2019-07-03 22:17:44.561518)]

>>> 6316986368

Gauges are relatively new, and you might discover they still need some work when you use them in the exporter, but since this solution doesn't rely on views at all it's more future-proof.

| class StandardMetricsType(object): | ||

| # Memory that can be given instantly to | ||

| # processes without the system going into swap | ||

| AVAILABLE_MEMORY = "\\Memory\\Available Bytes" |

There was a problem hiding this comment.

Where does this come from, and why the backslashes?

There was a problem hiding this comment.

This is the namespace that is used in Azure Monitor that uniquely identifies certain standard metrics.

There was a problem hiding this comment.

Gotcha, any reason not to move this up to the module?

| # the storage only uses one single data structure | ||

| # Uniqueness should be based off the name of the view and | ||

| # measure. A warning message in the logs will be shown | ||

| # if there are duplicate names |

There was a problem hiding this comment.

Nit, here and above: what's with the short text wrapping?

There was a problem hiding this comment.

It's to pass the lint checks for maximum characters. I just eyeballed the length.

|

@c24t The gauges look really cool! DerivedGauge seems to fit exactly what we need. We can also get rid of the recorder thread this way. If this is implemented for standard metrics, then the exporter would essentially be "getting metrics" from two different places, the gauges registry and the measure to view map. So before they are exported, these two lists should be merged. There is a case where measure_to_view_map produces metrics that have the same name as the certain metrics produces from the gauges registry. But since we are in control of the naming for standard metrics, I don't think this is a huge issue. What do you think? |

|

So there's good news and bad news. 👍 The good news is that everyone that needs to sign a CLA (the pull request submitter and all commit authors) have done so. Everything is all good there. 😕 The bad news is that it appears that one or more commits were authored or co-authored by someone other than the pull request submitter. We need to confirm that all authors are ok with their commits being contributed to this project. Please have them confirm that here in the pull request. Note to project maintainer: This is a terminal state, meaning the ℹ️ Googlers: Go here for more info. |

|

Implementation now uses Gauges instead of measures and views. StandardMetricProducer and BaseStandardMetric are also abstracted into the core sdk. Developers can make their own Producers and StandardMetrics that extend from these classes. |

contrib/opencensus-ext-azure/opencensus/ext/azure/metrics_exporter/standard_metrics.py

Outdated

Show resolved

Hide resolved

| # https://psutil.readthedocs.io/en/latest/ | ||

| class AvailableMemoryStandardMetric(BaseStandardMetric): | ||

| """ Metric for Available Memory | ||

| Avaliable memoru is defined as memory that can be given |

| execution_context.set_measure_to_view_map({}) | ||

| stats_module.stats = stats_module._Stats() | ||

|

|

||

| def test_constructor_and_setup(self): |

There was a problem hiding this comment.

Is this intentionally empty (since the purpose is to call setUp)?

There was a problem hiding this comment.

My mistake I should have commented this out. I tend to leave writing tests once the design changes have been approved.

opencensus/metrics/transport.py

Outdated

| Get a `PeriodicTask` that periodically calls: | ||

|

|

||

| exporter.export_metrics(metric_producer.get_metrics()) | ||

| exporter.export_metrics(all_metrics) |

There was a problem hiding this comment.

Consider putting the actual code example here (instead of the description below).

Consider itertools.chain.

|

|

||

|

|

||

| def get_exporter_thread(metric_producer, exporter, interval=None): | ||

| def get_exporter_thread(metric_producers, exporter, interval=None): |

There was a problem hiding this comment.

I have a design question, do we intend to have one MetricProducer taking multiple metrics, or multiple MetricProducer (which I guess is the case)? If it is the latter, what's the main consideration of having multiple producers (e.g. just to categorize by ownership, or ext lib, or we expect each producer to export individually?)

There was a problem hiding this comment.

Yes we intend to take multiple MetricProducer. The idea is simply as you noted to categorize ownership of the types of metrics that each Producer produces. My thought was to separate these based on logic (separate standard from custom in this case). If a developer would like to produce some other set of metrics using a different Producer, they can simply create their own MetricProducer and implement "get_metrics".

There was a problem hiding this comment.

@c24t would you take a look at the changes to the core library? Thanks.

There was a problem hiding this comment.

This change looks fine, but the other option is to add a metric producer class that calls other metric producers under the hood. Since we're likely to make a similar change for exporters we might want to revisit this later. But note that this change changes the API, the other way doesn't.

tests/unit/metrics/test_transport.py

Outdated

| exporter.export_metrics.assert_called_once_with(metrics) | ||

| finally: | ||

| task.cancel() | ||

| task.join() No newline at end of file |

| options = Options(**options) | ||

| exporter = MetricsExporter(options=options) | ||

| transport.get_exporter_thread(stats.stats, | ||

| options_ = Options(**options) |

There was a problem hiding this comment.

I don't have a strong opinion here, but I was curious on why do we need options_ instead of using options = Options(**options).

There was a problem hiding this comment.

It was to differentiate the parameter from the local variable. I didn't want to name it something completely different.

| 'test', | ||

| 'test', | ||

| []) | ||

| test_gauge.create_default_time_series(lambda x: (1)) |

| # Definitions taken from psutil docs | ||

| # https://psutil.readthedocs.io/en/latest/ | ||

| class AvailableMemoryStandardMetric(BaseStandardMetric): | ||

| """ Metric for Available Memory |

There was a problem hiding this comment.

minor, the comments are using different styles, it'll be good to keep a consistent style.

c24t

left a comment

c24t

left a comment

There was a problem hiding this comment.

Stepping back, I don't think there's a good case for standard_metrics.py in the core library, but the changes in the azure exporter look fine. Some comments on the code, structure, and style.

| self.assertEqual(len(exporter_mock.call_args[0][0]), 2) | ||

|

|

||

| @mock.patch('opencensus.ext.azure.metrics_exporter' + | ||

| '.transport.get_exporter_thread', return_value=mock.Mock()) |

There was a problem hiding this comment.

These will join without the +, and isn't returning a mock the default behavior?

| def __init__(self): | ||

| super(StandardMetricsProducer, self).__init__() | ||

|

|

||

| """ |

| using gauges | ||

| """ | ||

| def __init__(self): | ||

| super(StandardMetricsProducer, self).__init__() |

There was a problem hiding this comment.

You'd call the super init anyway if this init didn't exist.

|

|

||

| class StandardMetricsProducer(Registry): | ||

| """Implementation of the producer of standard metrics | ||

| using gauges |

There was a problem hiding this comment.

Last comment about docstring line wrapping:

class StandardMetricsProducer(Registry):

"""Some short description up to 79 chars.

Longer flowing text isn't indented, and can wrap and wrap and wrap and wrap

and wrap and wrap and wrap and wrap and wrap.

:type some_arg: document init args in the class docstring

:param some_arg: :class:`OrOmitIfThereArentAny`

"""

def __init__(self):

...There was a problem hiding this comment.

Really we ought to catch this in lint to avoid this kind of comment in review.

| instantly to processes without the system going into swap | ||

| """ | ||

| def __init__(self): | ||

| super(AvailableMemoryStandardMetric, self).__init__() |

| types = [a for a in attributes | ||

| if not(a[0].startswith('__') and | ||

| a[0].endswith('__'))] | ||

| self.assertEquals(len(producer.metrics), len(types)) |

There was a problem hiding this comment.

It'd be a lot clearer just to list these out (especially since there's only one metric at the moment) than call inspect in the test.

opencensus/metrics/transport.py

Outdated

| export(itertools.chain(*all_gets)) | ||

|

|

||

| :type metric_producer: | ||

| where all_gets is the concatenation of all metrics produced by |

There was a problem hiding this comment.

Dedent this to get it to render as text, and consider wrapping the code blocks in backticks. You might want to generate the docs to see how this looks.

| metric_producer.get_metrics() | ||

|

|

||

| :type metric_producers: list | ||

| :param metric_producers: The list of metric producers to use to get metrics |

|

|

||

|

|

||

| def get_exporter_thread(metric_producer, exporter, interval=None): | ||

| def get_exporter_thread(metric_producers, exporter, interval=None): |

There was a problem hiding this comment.

This change looks fine, but the other option is to add a metric producer class that calls other metric producers under the hood. Since we're likely to make a similar change for exporters we might want to revisit this later. But note that this change changes the API, the other way doesn't.

CHANGELOG.md

Outdated

| # Changelog | ||

|

|

||

| ## Unreleased | ||

| - Refactored PeriodicMetricTask |

There was a problem hiding this comment.

The changelog should generally describe user-facing changes.

|

@c24t Thanks for reviewing. My initial design idea was to abstract the metric types and producers so that developers can write their own standard metrics and producers. However I realize that Metric_producer class does essentially this for ALL metric producers; there is no need to have an interface for STANDARD metric producer. |

|

@c24t I removed the standard metrics file from the core sdk as per your suggestion. It doesn't make a lot of sense to include it. I have also made changes to the docstrings to adhere to the recommended practices for python docstrings. Can you take a look when you have the time? |

| md = metric.descriptor | ||

| # Each time series will be uniquely | ||

| # identified by it's label values | ||

| # Each time series will be uniquely identified by it's |

There was a problem hiding this comment.

| # Each time series will be uniquely identified by it's | |

| # Each time series will be uniquely identified by its |

|

|

||

| self.assertEqual(exporter.options.instrumentation_key, iKey) | ||

| self.assertEqual(len(exporter_mock.call_args_list), 1) | ||

| self.assertEqual(len(exporter_mock.call_args[0][0]), 2) |

There was a problem hiding this comment.

Why not check that the the actual metric producer is in the arg list here?

| print("Done recording metrics") | ||

|

|

||

|

|

||

| if __name__ == "__main__": |

|

@reyang Ready to merge! |

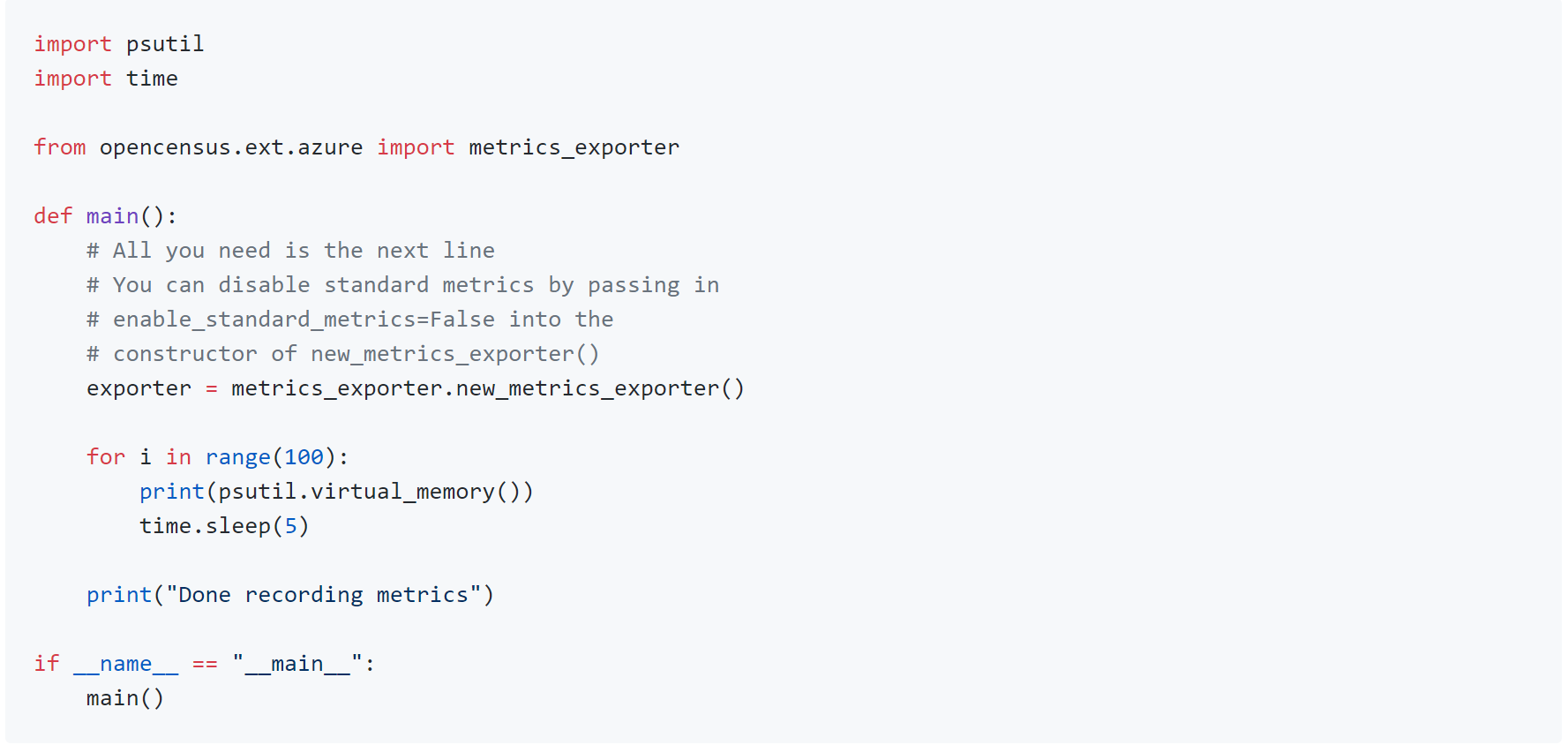

Initial implementation of standard metrics. [#695]

Standard Metrics are defined as metrics that will be exported by default using the metrics exporter.

Standard metrics are enabled by default as suggested by @c24t

The first one that I am including is Free Memory in bytes.

Free Memory is defined as: memory not being used at all (zeroed) that is readily available; note that this doesn’t reflect the actual memory available (use available instead). total - used does not necessarily match free. Defined in https://psutil.readthedocs.io/en/latest/

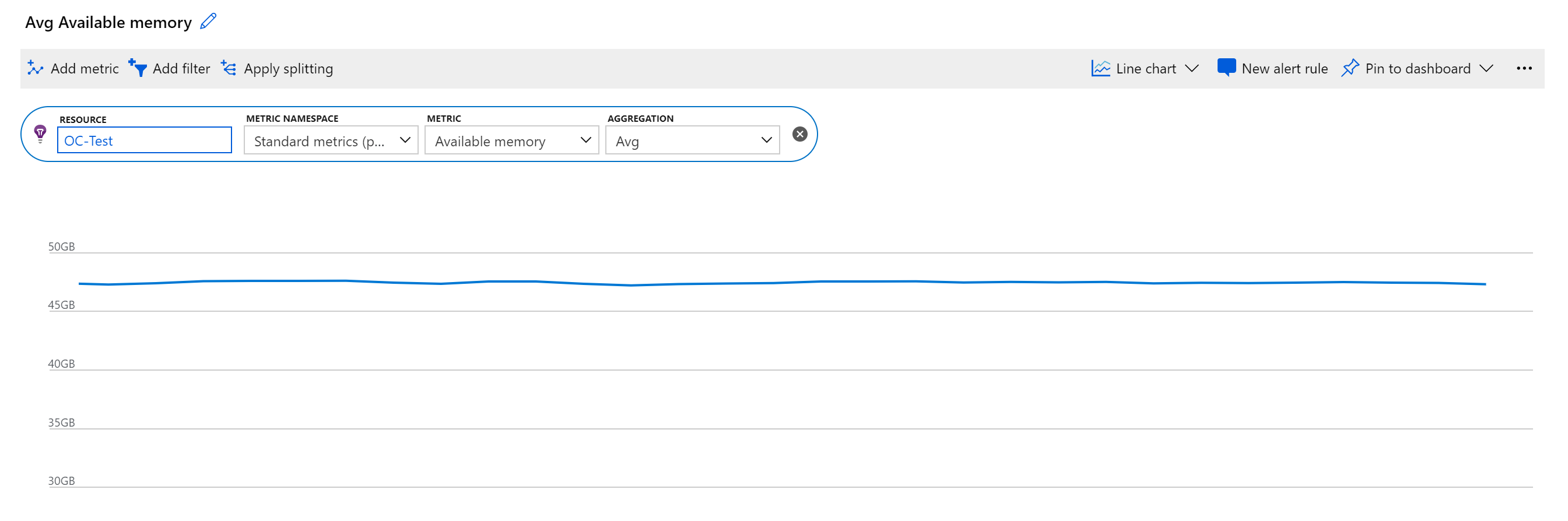

In Azure Monitor, there are special namespace names to denote a metric as being "standard". For Free Memory, you can view the telemetry in metrics explorer by going to Standard Metrics (preview) namespace and Available Memory under Performance Counters.

As for architectural decisions, the periodic recording of the standard metrics must be non-blocking to the main thread. So I chose to use a separate recording thread (initiated by MetricExporterTask) to perform this. The exporter and recorder threads should be decoupled to perform their specific jobs (the simpler the task, the better on cpu and memory).

There is a possible race condition in which the exporting is called before the recording, but the metric will then be simply delayed by one interval, which I don't think is a huge issue.