A PyTorch implementation of the Single Shot MultiBox Detector from the 2016 paper by Wei Liu, Dragomir Anguelov, Dumitru Erhan, Christian Szegedy, Scott Reed, Cheng-Yang Fu, and Alexander C. Berg. The official and original Caffe code can be found here.

- Install PyTorch by selecting your environment on the website and running the appropriate command.

- Clone this repository.

- Note: We only guarantee full functionality with Python 3.

- Then download the dataset by following the instructions below.

- We now support Visdom for real-time loss visualization during training!

- To use Visdom in the browser:

# First install the Python server and client

pip install visdom

# Start the server (probably in a screen or tmux session)

python -m visdom.server

- Then (during training) navigate to http://localhost:8097/ (see the Train section below for training details).

- Note: For training, we currently only support VOC, but are adding COCO and hopefully ImageNet soon.

To make things easy, we provide a simple VOC dataset loader that inherits from torch.utils.data.Dataset, making it fully compatible with the torchvision.datasets API.
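To give a feel for what a VOC loader of this kind has to read, here is a minimal sketch that extracts class names and bounding boxes from one VOC-style annotation. It is not the repository's loader: the function name `parse_voc_annotation` and the `SAMPLE` annotation are hypothetical, made up for illustration, and only the standard library is used.

```python
import xml.etree.ElementTree as ET

# Hypothetical, hand-written annotation in the PASCAL VOC XML layout.
SAMPLE = """
<annotation>
  <filename>example.jpg</filename>
  <object>
    <name>dog</name>
    <bndbox><xmin>48</xmin><ymin>240</ymin><xmax>195</xmax><ymax>371</ymax></bndbox>
  </object>
  <object>
    <name>person</name>
    <bndbox><xmin>8</xmin><ymin>12</ymin><xmax>352</xmax><ymax>498</ymax></bndbox>
  </object>
</annotation>
"""


def parse_voc_annotation(xml_text):
    """Return a list of (class_name, xmin, ymin, xmax, ymax) tuples."""
    root = ET.fromstring(xml_text)
    boxes = []
    for obj in root.iter("object"):
        name = obj.find("name").text.strip()
        bndbox = obj.find("bndbox")  # direct child only, so nested parts are ignored
        coords = [int(float(bndbox.find(tag).text))
                  for tag in ("xmin", "ymin", "xmax", "ymax")]
        boxes.append((name, *coords))
    return boxes


boxes = parse_voc_annotation(SAMPLE)
```

A real loader would additionally map class names to integer labels and typically scale the coordinates by the image size; those steps are left out here.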

# specify a directory for the dataset to be downloaded into, else the default is ~/data/

sh data/scripts/VOC2007.sh # <directory>

# specify a directory for the dataset to be downloaded into, else the default is ~/data/

sh data/scripts/VOC2012.sh # <directory>

Ensure the following directory structure (as specified in VOCdevkit):

VOCdevkit/ % development kit

VOCdevkit/VOC2007/ImageSets % image sets

VOCdevkit/VOC2007/Annotations % annotation files

VOCdevkit/VOC2007/JPEGImages % images

VOCdevkit/VOC2007/SegmentationObject % segmentations by object

VOCdevkit/VOC2007/SegmentationClass % segmentations by class
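The layout listed above can be checked before training with a few lines of Python. This is a sketch only: the helper name `missing_voc_dirs` is hypothetical, and where the devkit lives (for example `~/data/VOCdevkit`) is an assumption that depends on the directory you passed to the download script.

```python
from pathlib import Path

# Subdirectories expected under VOCdevkit/<year>/, taken from the list above.
EXPECTED_SUBDIRS = (
    "ImageSets",
    "Annotations",
    "JPEGImages",
    "SegmentationObject",
    "SegmentationClass",
)


def missing_voc_dirs(devkit_root, year="VOC2007"):
    """Return the names of expected subdirectories that do not exist."""
    base = Path(devkit_root).expanduser() / year
    return [name for name in EXPECTED_SUBDIRS if not (base / name).is_dir()]
```

Calling `missing_voc_dirs("~/data/VOCdevkit")` returns an empty list when the structure is complete, and otherwise the names of the directories that still need to be created or downloaded.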

- First download the fc-reduced VGG-16 PyTorch base network weights at: https://s3.amazonaws.com/amdegroot-models/vgg16_reducedfc.pth

- By default, we assume you have downloaded the file into the ssd.pytorch/weights directory:

mkdir weights

cd weights

wget https://s3.amazonaws.com/amdegroot-models/vgg16_reducedfc.pth

- To train SSD using the train script, simply specify the parameters listed in train.py as flags or change them manually.

python train.py

- Training Parameter Options:

'--version', default='v2', help='conv11_2(v2) or pool6(v1) as last layer'

'--basenet', default='vgg16_reducedfc.pth', help='pretrained base model'

'--jaccard_threshold', default=0.5, type=float, help='Min Jaccard index for matching'

'--batch_size', default=16, type=int, help='Batch size for training'

'--num_workers', default=4, type=int, help='Number of workers used in dataloading'

'--iterations', default=120000, type=int, help='Number of training epochs'

'--cuda', default=True, type=bool, help='Use cuda to train model'

'--lr', '--learning-rate', default=1e-3, type=float, help='initial learning rate'

'--momentum', default=0.9, type=float, help='momentum'

'--weight_decay', default=5e-4, type=float, help='Weight decay for SGD'

'--gamma', default=0.1, type=float, help='Gamma update for SGD'

'--log_iters', default=True, type=bool, help='Print the loss at each iteration'

'--visdom', default=True, type=bool, help='Use visdom to for loss visualization'

'--save_folder', default='weights/', help='Location to save checkpoint models'

- Note:

- For training, an NVIDIA GPU is strongly recommended for speed.

- Currently we only support training on v2 (the newest version).

- For instructions on Visdom usage/installation, see the Installation section.
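One caveat about the boolean options listed above (`--cuda`, `--log_iters`, `--visdom`): with `type=bool`, argparse calls `bool()` on the raw string, and `bool("False")` is `True`, so passing `--cuda False` on the command line does not disable CUDA. A common workaround is a small converter function. The sketch below is an assumption about how one might patch this, not code from train.py; `str2bool` is a hypothetical helper name.

```python
import argparse


def str2bool(value):
    """Convert common textual spellings of true/false to a bool."""
    if isinstance(value, bool):
        return value
    text = value.strip().lower()
    if text in ("yes", "true", "t", "y", "1"):
        return True
    if text in ("no", "false", "f", "n", "0"):
        return False
    raise argparse.ArgumentTypeError(f"expected a boolean, got {value!r}")


# Hypothetical parser mirroring two of the flags listed above.
parser = argparse.ArgumentParser(description="flag parsing sketch")
parser.add_argument("--cuda", default=True, type=str2bool,
                    help="Use cuda to train model")
parser.add_argument("--visdom", default=True, type=str2bool,
                    help="Use visdom for loss visualization")

# Simulates running: python train.py --cuda False
args = parser.parse_args(["--cuda", "False"])
```

With this converter, `args.cuda` is `False` for the simulated command line, while `--visdom` keeps its default of `True`.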

To evaluate a trained network:

python test.py

You can specify the parameters listed in the test.py file by passing them as flags or changing them manually.

- We are trying to provide PyTorch state_dicts (dicts of weight tensors) of the latest SSD model definitions trained on different datasets.

- Currently, we provide the following PyTorch models:

- SSD300 v2 trained on VOC0712 (newest version)

- SSD300 v1 (original/old pool6 version) trained on VOC07

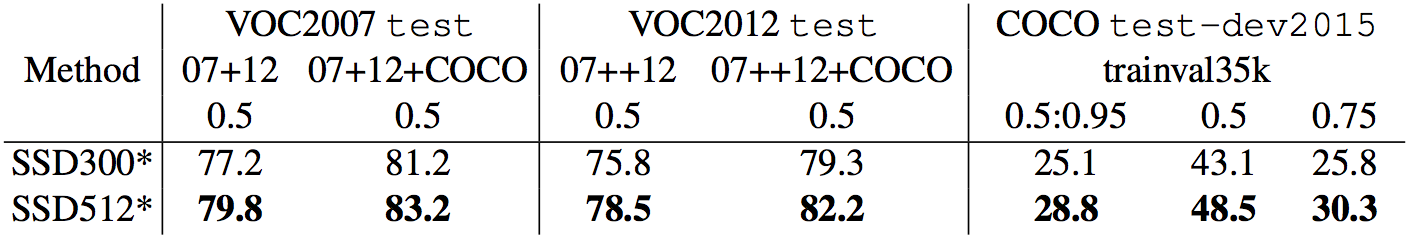

- Our goal is to reproduce this table from the original paper

- Make sure you have jupyter notebook installed.

- Two alternatives for installing jupyter notebook:

# make sure pip is upgraded

pip3 install --upgrade pip

# install jupyter notebook

pip install jupyter

# Run this inside ssd.pytorch

jupyter notebook

- Now navigate to demo.ipynb at http://localhost:8888 (by default) and have at it!

We have accumulated the following to-do list, which we expect to complete in the near future:

- Complete data augmentation (in progress)

- Train SSD300 with batch norm (in progress)

- Webcam demo (in progress)

- Add support for SSD512 training and testing

- Add support for COCO dataset

- Create a functional model definition for Sergey Zagoruyko's functional-zoo (in progress)

- Wei Liu, et al. "SSD: Single Shot MultiBox Detector." ECCV2016.

- Original Implementation (CAFFE)

- A list of other great SSD ports that were sources of inspiration (especially the Chainer repo):