[path]

-```

-

-**Examples**

-

-Generating a home page:

-

-```plaintext

-~/redwood-app$ yarn redwood generate page home /

-yarn run v1.22.4

-$ /redwood-app/node_modules/.bin/redwood g page home /

- ✔ Generating page files...

- ✔ Writing `./web/src/pages/HomePage/HomePage.test.js`...

- ✔ Writing `./web/src/pages/HomePage/HomePage.js`...

- ✔ Updating routes file...

-Done in 1.02s.

-```

-

-The page returns JSX telling you where to find it:

-

-```javascript

-// ./web/src/pages/HomePage/HomePage.js

-

-const HomePage = () => {

- return (

-

-

HomePage

-

Find me in ./web/src/pages/HomePage/HomePage.js

-

QuotePage

- Find me in "./web/src/pages/QuotePage/QuotePage.js"

-

- My default route is named "quote", link to me with `

- Quote 42`

-

- The parameter passed to me is {id}

-

- )

-}

-

-export default QuotePage

-```

-

-And the route is added to `Routes.js`, with the route parameter added:

-

-```javascript{6}

-// ./web/src/Routes.js

-

-const Routes = () => {

- return (

-

-

-

-

- )

-}

-```

-

-### generate scaffold

-

-Generate Pages, SDL, and Services files based on a given DB schema Model. Also accepts ``.

-

-```terminal

-yarn redwood generate scaffold

-```

-

-A scaffold quickly creates a CRUD for a model by generating the following files and corresponding routes:

-

-- sdl

-- service

-- layout

-- pages

-- cells

-- components

-

-The content of the generated components is different from what you'd get by running them individually.

-

-| Arguments & Options | Description |

-| -------------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

-| `model` | Model to scaffold. You can also use `` to nest files by type at the given path directory (or directories). For example, `redwood g scaffold admin/post` |

-| `--force, -f` | Overwrite existing files |

-| `--typescript, --ts` | Generate TypeScript files. Enabled by default if we detect your project is TypeScript |

-

-**Usage**

-

-See [Creating a Post Editor](tutorial/chapter2/getting-dynamic.md#creating-a-post-editor).

-

-**Nesting of Components and Pages**

-

-By default, Redwood nests the components and pages in a directory named after the model. For example (where `post` is the model):

-`yarn rw g scaffold post`

-will output the following files, with the components and pages nested in a `Post` directory:

-

-```plaintext{9-20}

- √ Generating scaffold files...

- √ Successfully wrote file `./api/src/graphql/posts.sdl.js`

- √ Successfully wrote file `./api/src/services/posts/posts.js`

- √ Successfully wrote file `./api/src/services/posts/posts.scenarios.js`

- √ Successfully wrote file `./api/src/services/posts/posts.test.js`

- √ Successfully wrote file `./web/src/layouts/PostsLayout/PostsLayout.js`

- √ Successfully wrote file `./web/src/pages/Post/EditPostPage/EditPostPage.js`

- √ Successfully wrote file `./web/src/pages/Post/PostPage/PostPage.js`

- √ Successfully wrote file `./web/src/pages/Post/PostsPage/PostsPage.js`

- √ Successfully wrote file `./web/src/pages/Post/NewPostPage/NewPostPage.js`

- √ Successfully wrote file `./web/src/components/Post/EditPostCell/EditPostCell.js`

- √ Successfully wrote file `./web/src/components/Post/Post/Post.js`

- √ Successfully wrote file `./web/src/components/Post/PostCell/PostCell.js`

- √ Successfully wrote file `./web/src/components/Post/PostForm/PostForm.js`

- √ Successfully wrote file `./web/src/components/Post/Posts/Posts.js`

- √ Successfully wrote file `./web/src/components/Post/PostsCell/PostsCell.js`

- √ Successfully wrote file `./web/src/components/Post/NewPost/NewPost.js`

- √ Adding layout import...

- √ Adding set import...

- √ Adding scaffold routes...

- √ Adding scaffold asset imports...

-```

-

-If you don't want the components and pages nested, you can disable this for your project by adding the following to your `redwood.toml` file:

-

-```

-[generate]

- nestScaffoldByModel = false

-```

-

-Setting `nestScaffoldByModel = true` retains the default behavior, but is not required.

-

-Notes:

-

-1. The nesting directory is always set to be PascalCase.

-

-**Namespacing Scaffolds**

-

-You can namespace your scaffolds by providing ``. The layout, pages, cells, and components will be nested in newly created dir(s). In addition, the nesting folder, based upon the model name, is still applied after the path for components and pages, unless turned off in the `redwood.toml` as described above. For example, given a model `user`, running `yarn redwood generate scaffold admin/user` will nest the layout, pages, and components in a newly created `Admin` directory created for each of the `layouts`, `pages`, and `components` folders:

-

-```plaintext{9-20}

-~/redwood-app$ yarn redwood generate scaffold admin/user

-yarn run v1.22.4

-$ /redwood-app/node_modules/.bin/redwood g scaffold admin/user

- ✔ Generating scaffold files...

- ✔ Successfully wrote file `./api/src/graphql/users.sdl.js`

- ✔ Successfully wrote file `./api/src/services/users/users.js`

- ✔ Successfully wrote file `./api/src/services/users/users.scenarios.js`

- ✔ Successfully wrote file `./api/src/services/users/users.test.js`

- ✔ Successfully wrote file `./web/src/layouts/Admin/UsersLayout/UsersLayout.js`

- ✔ Successfully wrote file `./web/src/pages/Admin/User/EditUserPage/EditUserPage.js`

- ✔ Successfully wrote file `./web/src/pages/Admin/User/UserPage/UserPage.js`

- ✔ Successfully wrote file `./web/src/pages/Admin/User/UsersPage/UsersPage.js`

- ✔ Successfully wrote file `./web/src/pages/Admin/User/NewUserPage/NewUserPage.js`

- ✔ Successfully wrote file `./web/src/components/Admin/User/EditUserCell/EditUserCell.js`

- ✔ Successfully wrote file `./web/src/components/Admin/User/User/User.js`

- ✔ Successfully wrote file `./web/src/components/Admin/User/UserCell/UserCell.js`

- ✔ Successfully wrote file `./web/src/components/Admin/User/UserForm/UserForm.js`

- ✔ Successfully wrote file `./web/src/components/Admin/User/Users/Users.js`

- ✔ Successfully wrote file `./web/src/components/Admin/User/UsersCell/UsersCell.js`

- ✔ Successfully wrote file `./web/src/components/Admin/User/NewUser/NewUser.js`

- ✔ Adding layout import...

- ✔ Adding set import...

- ✔ Adding scaffold routes...

- ✔ Adding scaffold asset imports...

-Done in 1.21s.

-```

-

-The routes wrapped in the [`Set`](router.md#sets-of-routes) component with generated layout will be nested too:

-

-```javascript{6-11}

-// ./web/src/Routes.js

-

-const Routes = () => {

- return (

-

-

-

-

-

-

-

-

-

- )

-}

-```

-

-Notes:

-

-1. Each directory in the scaffolded path is always set to be PascalCase.

-2. The scaffold path may be multiple directories deep.
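To make the nesting rules concrete, here is a minimal sketch that computes the directory a scaffold's files land in. `scaffoldDir` and `toPascalCase` are hypothetical helpers written for illustration, not part of Redwood; the layout they produce is inferred from the generator output shown above, where layouts are nested by path only and pages and components are also nested by model.

```javascript
// Hypothetical helpers for illustration; not Redwood's implementation.
const toPascalCase = (segment) =>
  segment
    .split(/[-_\s]+/)
    .filter(Boolean)
    .map((part) => part.charAt(0).toUpperCase() + part.slice(1))
    .join('')

// kind is 'layouts', 'pages', or 'components'
const scaffoldDir = (kind, scaffoldPath, { nestScaffoldByModel = true } = {}) => {
  const segments = scaffoldPath.split('/').filter(Boolean)
  const model = segments.pop()
  // Each directory in the scaffold path becomes PascalCase
  const dirs = segments.map(toPascalCase)
  // In the output above, only pages and components are nested by model
  if (nestScaffoldByModel && kind !== 'layouts') {
    dirs.push(toPascalCase(model))
  }
  return ['.', 'web', 'src', kind, ...dirs].join('/')
}

console.log(scaffoldDir('pages', 'admin/user'))
console.log(scaffoldDir('layouts', 'admin/user'))
console.log(scaffoldDir('components', 'post', { nestScaffoldByModel: false }))
```

The first call yields `./web/src/pages/Admin/User`, which matches the `EditUserPage` location in the example output.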

-

-**Destroying**

-

-```

-yarn redwood d scaffold

-```

-

-Notes:

-

-1. You can also use `` to destroy files that were generated under a scaffold path. For example, `redwood d scaffold admin/post`

-2. The destroy command will remove empty folders along the path, provided they are lower than the folder level of component, layout, page, etc.

-3. The destroy scaffold command will also follow the `nestScaffoldByModel` setting in the `redwood.toml` file. For example, if you have an existing scaffold that you wish to destroy, and its pages and components are not nested by the model name, you can destroy the scaffold by temporarily setting:

-

-```

-[generate]

- nestScaffoldByModel = false

-```

-

-### generate sdl

-

-Generate a GraphQL schema and service object.

-

-```terminal

-yarn redwood generate sdl

-```

-

-The sdl will inspect your `schema.prisma` and will do its best with relations. Schema to generators isn't one-to-one yet (and might never be).

-

-

-

-| Arguments & Options | Description |

-| -------------------- | ------------------------------------------------------------------------------------ |

-| `model` | Model to generate the sdl for |

-| `--crud` | Set to `false`, or use `--no-crud`, if you do not want to generate mutations |

-| `--force, -f` | Overwrite existing files |

-| `--tests` | Generate service test and scenario [default: true] |

-| `--typescript, --ts` | Generate TypeScript files. Enabled by default if we detect your project is TypeScript |

-

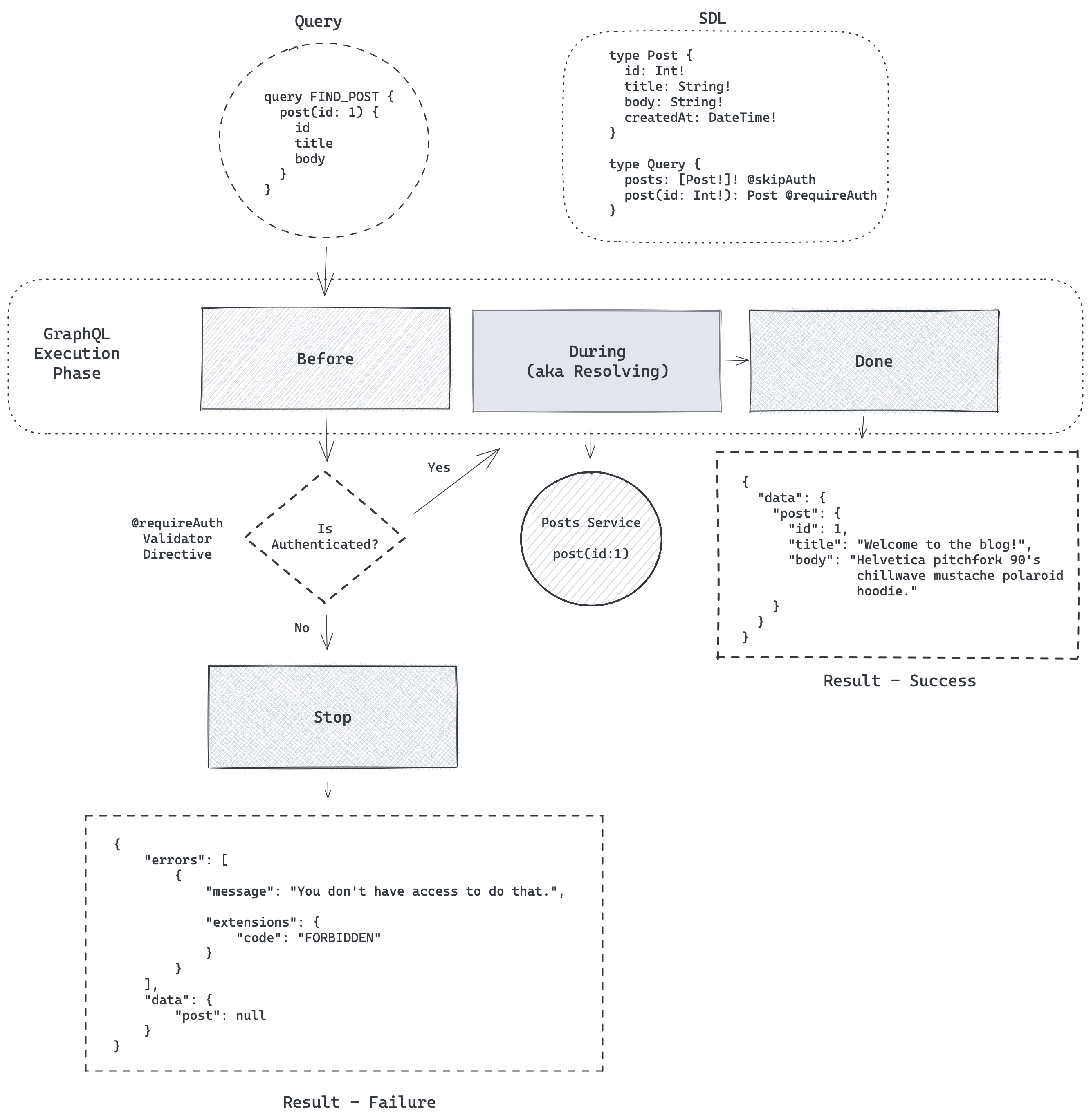

-> **Note:** The generated sdl will include the `@requireAuth` directive by default to ensure queries and mutations are secure. If your app's queries and mutations are all public, you can set up a custom SDL generator template to apply `@skipAuth` (or a custom validator directive) to suit your application's needs.

-

-**Regenerating the SDL**

-

-As you iterate on your data model, you may add, remove, or rename fields. Having Redwood update the generated SDL and service files for those changes saves you from making them by hand.

-

-Because the `generate` command won't overwrite existing files, you need the `--force` option. But `--force` on its own also resets any tests and scenarios you've written, which you don't want to lose.

-

-To "regenerate" **just** the SDL and service files and leave your tests and scenarios intact, combine `--force` with `--no-tests`:

-

-```

-yarn redwood g sdl --force --no-tests

-```

-

-**Example**

-

-```terminal

-~/redwood-app$ yarn redwood generate sdl user --force --no-tests

-yarn run v1.22.4

-$ /redwood-app/node_modules/.bin/redwood g sdl user

- ✔ Generating SDL files...

- ✔ Writing `./api/src/graphql/users.sdl.js`...

- ✔ Writing `./api/src/services/users/users.js`...

-Done in 1.04s.

-```

-

-**Destroying**

-

-```

-yarn redwood d sdl

-```

-

-**Example**

-

-Generating a user sdl:

-

-```terminal

-~/redwood-app$ yarn redwood generate sdl user

-yarn run v1.22.4

-$ /redwood-app/node_modules/.bin/redwood g sdl user

- ✔ Generating SDL files...

- ✔ Writing `./api/src/graphql/users.sdl.js`...

- ✔ Writing `./api/src/services/users/users.scenarios.js`...

- ✔ Writing `./api/src/services/users/users.test.js`...

- ✔ Writing `./api/src/services/users/users.js`...

-Done in 1.04s.

-```

-

-The generated sdl defines a corresponding type, query, create/update inputs, and any mutations. To prevent defining mutations, add the `--no-crud` option.

-

-```javascript

-// ./api/src/graphql/users.sdl.js

-

-export const schema = gql`

- type User {

- id: Int!

- email: String!

- name: String

- }

-

- type Query {

- users: [User!]! @requireAuth

- }

-

- input CreateUserInput {

- email: String!

- name: String

- }

-

- input UpdateUserInput {

- email: String

- name: String

- }

-

- type Mutation {

- createUser(input: CreateUserInput!): User! @requireAuth

- updateUser(id: Int!, input: UpdateUserInput!): User! @requireAuth

- deleteUser(id: Int!): User! @requireAuth

- }

-`

-```

-

-The services file fulfills the query. If the `--no-crud` option is added, this file will be less complex.

-

-```javascript

-// ./api/src/services/users/users.js

-

-import { db } from 'src/lib/db'

-

-export const users = () => {

- return db.user.findMany()

-}

-```

-

-For a model with a relation, the field will be listed in the sdl:

-

-```javascript{8}

-// ./api/src/graphql/users.sdl.js

-

-export const schema = gql`

- type User {

- id: Int!

- email: String!

- name: String

- profile: Profile

- }

-

- type Query {

- users: [User!]! @requireAuth

- }

-

- input CreateUserInput {

- email: String!

- name: String

- }

-

- input UpdateUserInput {

- email: String

- name: String

- }

-

- type Mutation {

- createUser(input: CreateUserInput!): User! @requireAuth

- updateUser(id: Int!, input: UpdateUserInput!): User! @requireAuth

- deleteUser(id: Int!): User! @requireAuth

- }

-`

-```

-

-And the service will export an object with the relation as a property:

-

-```javascript{9-13}

-// ./api/src/services/users/users.js

-

-import { db } from 'src/lib/db'

-

-export const users = () => {

- return db.user.findMany()

-}

-

-export const User = {

- profile: (_obj, { root }) => {

- return db.user.findUnique({ where: { id: root.id } }).profile()

- },

-}

-```
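A relation resolver only works if it returns the Prisma promise. The sketch below shows that with a stubbed `db` object standing in for the Prisma client; the stub and the `bio` field are assumptions made up for this example, while the resolver signature `(_obj, { root })` is the one from the generated service.

```javascript
// Stand-in for the Prisma client, for illustration only
const db = {
  user: {
    findUnique: ({ where }) => ({
      // Prisma's fluent API exposes relations as methods on the query
      profile: async () => ({ id: 1, userId: where.id, bio: 'Hello' }),
    }),
  },
}

const User = {
  // Returning the promise is what lets the field resolve to a value
  profile: (_obj, { root }) =>
    db.user.findUnique({ where: { id: root.id } }).profile(),
}

User.profile({}, { root: { id: 7 } }).then((profile) => {
  console.log(profile.userId) // logs 7
})
```

If the resolver body doesn't return the query, the `profile` field resolves to `undefined` even though the query runs.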

-

-### generate secret

-

-Generate a secret key using a cryptographically-secure source of entropy. Commonly used when setting up dbAuth.

-

-| Arguments & Options | Description |

-| :------------------ | :------------------------------------------------- |

-| `--raw` | Print just the key, without any informational text |

-

-**Usage**

-

-Using the `--raw` option you can easily append a secret key to your .env file, like so:

-

-```

-echo "SESSION_SECRET=$(yarn --silent rw g secret --raw)" >> .env

-```
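If you're curious what "a cryptographically-secure source of entropy" looks like in practice, here is a minimal sketch using Node's built-in `crypto` module. The `generateSecret` name, the 32-byte length, and the base64 encoding are assumptions for illustration; Redwood's own generator may differ on all three.

```javascript
const { randomBytes } = require('node:crypto')

// Assumed key size and encoding; Redwood's generator may use others
const generateSecret = (bytes = 32) => randomBytes(bytes).toString('base64')

// Prints a line you could append to .env
console.log(`SESSION_SECRET=${generateSecret()}`)
```

`randomBytes` draws from the operating system's secure random source, which is what makes the result suitable as a session secret, unlike `Math.random()`.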

-

-### generate service

-

-Generate a service component.

-

-```terminal

-yarn redwood generate service

-```

-

-Services are where Redwood puts its business logic. They can be used by your GraphQL API or any other place in your backend code. See [How Redwood Works with Data](tutorial/chapter2/side-quest.md).

-

-| Arguments & Options | Description |

-| -------------------- | ------------------------------------------------------------------------------------ |

-| `name` | Name of the service |

-| `--force, -f` | Overwrite existing files |

-| `--typescript, --ts` | Generate TypeScript files. Enabled by default if we detect your project is TypeScript |

-| `--tests` | Generate test and scenario files [default: true] |

-

-

-**Destroying**

-

-```

-yarn redwood d service

-```

-

-**Example**

-

-Generating a user service:

-

-```terminal

-~/redwood-app$ yarn redwood generate service user

-yarn run v1.22.4

-$ /redwood-app/node_modules/.bin/redwood g service user

- ✔ Generating service files...

- ✔ Writing `./api/src/services/users/users.scenarios.js`...

- ✔ Writing `./api/src/services/users/users.test.js`...

- ✔ Writing `./api/src/services/users/users.js`...

-Done in 1.02s.

-```

-

-The generated service component will export a `findMany` query:

-

-```javascript

-// ./api/src/services/users/users.js

-

-import { db } from 'src/lib/db'

-

-export const users = () => {

- return db.user.findMany()

-}

-```

-

-### generate types

-

-Generates supplementary code (project types).

-

-```terminal

-yarn redwood generate types

-```

-

-**Usage**

-

-```

-~/redwood-app$ yarn redwood generate types

-yarn run v1.22.10

-$ /redwood-app/node_modules/.bin/redwood g types

-$ /redwood-app/node_modules/.bin/rw-gen

-

-Generating...

-

-- .redwood/schema.graphql

-- .redwood/types/mirror/api/src/services/posts/index.d.ts

-- .redwood/types/mirror/web/src/components/BlogPost/index.d.ts

-- .redwood/types/mirror/web/src/layouts/BlogLayout/index.d.ts

-...

-- .redwood/types/mirror/web/src/components/Post/PostsCell/index.d.ts

-- .redwood/types/includes/web-routesPages.d.ts

-- .redwood/types/includes/all-currentUser.d.ts

-- .redwood/types/includes/web-routerRoutes.d.ts

-- .redwood/types/includes/api-globImports.d.ts

-- .redwood/types/includes/api-globalContext.d.ts

-- .redwood/types/includes/api-scenarios.d.ts

-- api/types/graphql.d.ts

-- web/types/graphql.d.ts

-

-... and done.

-```

-

-### generate script

-

-Generates an arbitrary Node.js script in `./scripts/` that you can run later with the `redwood exec` command.

-

-| Arguments & Options | Description |

-| -------------------- | ------------------------------------------------------------------------------------ |

-| `name` | Name of the script |

-| `--typescript, --ts` | Generate TypeScript files. Enabled by default if we detect your project is TypeScript |

-

-Scripts have access to services and libraries used in your project. Some examples of how this can be useful:

-

-- create special database seed scripts for different scenarios

-- sync products and prices from your payment provider

-- run cleanup jobs on a regular basis, e.g. delete stale/expired data

-- sync data between platforms, e.g. email from your db to your email marketing platform

-

-**Usage**

-

-```

-❯ yarn rw g script syncStripeProducts

-

- ✔ Generating script file...

- ✔ Successfully wrote file `./scripts/syncStripeProducts.ts`

- ✔ Next steps...

-

- After modifying your script, you can invoke it like:

-

- yarn rw exec syncStripeProducts

-

- yarn rw exec syncStripeProducts --param1 true

-```

-

-## info

-

-Print your system environment information.

-

-```terminal

-yarn redwood info

-```

-

-This command's primarily intended for getting information others might need to know to help you debug:

-

-```terminal

-~/redwood-app$ yarn redwood info

-yarn run v1.22.4

-$ /redwood-app/node_modules/.bin/redwood info

-

- System:

- OS: Linux 5.4 Ubuntu 20.04 LTS (Focal Fossa)

- Shell: 5.0.16 - /usr/bin/bash

- Binaries:

- Node: 13.12.0 - /tmp/yarn--1589998865777-0.9683603763419713/node

- Yarn: 1.22.4 - /tmp/yarn--1589998865777-0.9683603763419713/yarn

- Browsers:

- Chrome: 78.0.3904.108

- Firefox: 76.0.1

- npmPackages:

- @redwoodjs/core: ^0.7.0-rc.3 => 0.7.0-rc.3

-

-Done in 1.98s.

-```

-

-## lint

-

-Lint your files.

-

-```terminal

-yarn redwood lint

-```

-

-[Our ESLint configuration](https://github.com/redwoodjs/redwood/blob/master/packages/eslint-config/index.js) is a mix of [ESLint's recommended rules](https://eslint.org/docs/rules/), [React's recommended rules](https://www.npmjs.com/package/eslint-plugin-react#list-of-supported-rules), and a bit of our own stylistic flair:

-

-- no semicolons

-- comma dangle when multiline

-- single quotes

-- always use parentheses around arrow function parameters

-- enforced import sorting

-

-| Option | Description |

-| :------ | :---------------- |

-| `--fix` | Try to fix errors |

-

-## prisma

-

-Run Prisma CLI with experimental features.

-

-```

-yarn redwood prisma

-```

-

-Redwood's `prisma` command is a lightweight wrapper around the Prisma CLI. It's the primary way you interact with your database.

-

-> **What do you mean it's a lightweight wrapper?**

->

-> By lightweight wrapper, we mean that we're handling some flags under the hood for you.

-> You can use the Prisma CLI directly (`yarn prisma`), but letting Redwood act as a proxy (`yarn redwood prisma`) saves you a lot of keystrokes.

-> For example, Redwood adds the `--preview-feature` and `--schema=api/db/schema.prisma` flags automatically.

->

-> If you want to know exactly what `yarn redwood prisma ` runs, which flags it's passing, etc., it's right at the top:

->

-> ```sh{3}

-> $ yarn redwood prisma migrate dev

-> yarn run v1.22.10

-> $ ~/redwood-app/node_modules/.bin/redwood prisma migrate dev

-> Running prisma cli:

-> yarn prisma migrate dev --schema "~/redwood-app/api/db/schema.prisma"

-> ...

-> ```
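To illustrate the "lightweight wrapper" idea, here is a minimal sketch of a function that appends the schema flag to whatever Prisma arguments you pass. `buildPrismaCommand` is a hypothetical name and this is not Redwood's source; it only reproduces the command line shown in the log output above.

```javascript
// Hypothetical sketch of a thin wrapper; not Redwood's implementation
const buildPrismaCommand = (args, schemaPath) => {
  // Don't add the flag twice if the caller already passed one
  const hasSchemaFlag = args.some((arg) => arg.startsWith('--schema'))
  const extra = hasSchemaFlag ? [] : ['--schema', `"${schemaPath}"`]
  return ['yarn', 'prisma', ...args, ...extra].join(' ')
}

console.log(
  buildPrismaCommand(['migrate', 'dev'], '~/redwood-app/api/db/schema.prisma')
)
```

The printed command matches the "Running prisma cli" line in the example, which is the place to look when you want to see what actually ran.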

-

-Since `yarn redwood prisma` is just an entry point into the database commands that the Prisma CLI offers, this isn't an exhaustive reference. Instead, we'll focus on the most common commands, the ones you'll run on a regular basis, and how they fit into Redwood's workflows.

-

-For the complete list of commands, see the [Prisma CLI Reference](https://www.prisma.io/docs/reference/api-reference/command-reference). It's the authority.

-

-Along with the CLI reference, bookmark Prisma's [Migration Flows](https://www.prisma.io/docs/concepts/components/prisma-migrate/prisma-migrate-flows) doc—it'll prove to be an invaluable resource for understanding `yarn redwood prisma migrate`.

-

-| Command | Description |

-| :------------------ | :----------------------------------------------------------- |

-| `db ` | Manage your database schema and lifecycle during development |

-| `generate` | Generate artifacts (e.g. Prisma Client) |

-| `migrate ` | Update the database schema with migrations |

-

-### prisma db

-

-Manage your database schema and lifecycle during development.

-

-```

-yarn redwood prisma db

-```

-

-The `prisma db` namespace contains commands that operate directly against the database.

-

-#### prisma db pull

-

-Pull the schema from an existing database, updating the Prisma schema.

-

-> 👉 Quick link to the [Prisma CLI Reference](https://www.prisma.io/docs/reference/api-reference/command-reference#db-pull).

-

-```

-yarn redwood prisma db pull

-```

-

-This command, formerly `introspect`, connects to your database and adds Prisma models to your Prisma schema that reflect the current database schema.

-

-> Warning: This command overwrites the current `schema.prisma` file with the new schema. Any manual changes or customizations will be lost. Be sure to back up your current `schema.prisma` file before running `db pull` if it contains important modifications.

-

-#### prisma db push

-

-Push the state from your Prisma schema to your database.

-

-> 👉 Quick link to the [Prisma CLI Reference](https://www.prisma.io/docs/reference/api-reference/command-reference#db-push).

-

-```

-yarn redwood prisma db push

-```

-

-This is your go-to command for prototyping changes to your Prisma schema (`schema.prisma`).

-Prior to `yarn redwood prisma db push`, there wasn't a great way to try out changes to your Prisma schema without creating a migration.

-This command fills the void by "pushing" your `schema.prisma` file to your database without creating a migration. You don't even have to run `yarn redwood prisma generate` afterward—it's all taken care of for you, making it ideal for iterative development.

-

-#### prisma db seed

-

-Seed your database.

-

-> 👉 Quick link to the [Prisma CLI Reference](https://www.prisma.io/docs/reference/api-reference/command-reference#db-seed-preview).

-

-```

-yarn redwood prisma db seed

-```

-

-This command seeds your database by running your project's `seed.js|ts` file which you can find in your `scripts` directory.

-

-Prisma's got a great [seeding guide](https://www.prisma.io/docs/guides/prisma-guides/seed-database) that covers both the concepts and the nuts and bolts.

-

-> **Important:** Prisma Migrate also triggers seeding in the following scenarios:

->

-> - you manually run the `yarn redwood prisma migrate reset` command

-> - the database is reset interactively in the context of using `yarn redwood prisma migrate dev`—for example, as a result of migration history conflicts or database schema drift

->

-> If you want to use `yarn redwood prisma migrate dev` or `yarn redwood prisma migrate reset` without seeding, you can pass the `--skip-seed` flag.

-

-While having a great seed might not be all that important at the start, as soon as you start collaborating with others, it becomes vital.

-

-**How does seeding actually work?**

-

-If you look at your project's `package.json` file, you'll notice a `prisma` section:

-

-```json

- "prisma": {

- "seed": "yarn rw exec seed"

- },

-```

-

-Prisma runs any command found in the `seed` setting when seeding via `yarn rw prisma db seed` or `yarn rw prisma migrate reset`.

-Here we're using the Redwood [`exec` cli command](#exec) that runs a script.

-

-If you want to seed your database using a different method (like `psql` and an `.sql` script), you can do so by changing the "seed" script command.

-

-**More About Seeding**

-

-In addition, you can [code along with Ryan Chenkie](https://www.youtube.com/watch?v=2LwTUIqjbPo), and learn how libraries like [faker](https://www.npmjs.com/package/faker) can help you create a large, realistic database fast, especially in tandem with Prisma's [createMany](https://www.prisma.io/docs/reference/api-reference/prisma-client-reference#createmany).

-


-**Log Formatting**

-

-If you use the Redwood Logger as part of your seed script, you can pipe the command to the LogFormatter to output prettified logs.

-

-For example, if your `scripts/seed.js` imports the `logger`:

-

-```js

-// scripts/seed.js

-import { db } from 'api/src/lib/db'

-import { logger } from 'api/src/lib/logger'

-

-export default async () => {

- try {

- const posts = [

- {

- title: 'Welcome to the blog!',

- body: "I'm baby single- origin coffee kickstarter lo.",

- },

- {

- title: 'A little more about me',

- body: 'Raclette shoreditch before they sold out lyft.',

- },

- {

- title: 'What is the meaning of life?',

- body: 'Meh waistcoat succulents umami asymmetrical, hoodie post-ironic paleo chillwave tote bag.',

- },

- ]

-

- await Promise.all(

- posts.map(async (post) => {

- const newPost = await db.post.create({

- data: { title: post.title, body: post.body },

- })

-

- logger.debug({ data: newPost }, 'Added post')

- })

- )

- } catch (error) {

- logger.error(error)

- }

-}

-```

-

-You can pipe the script output to the formatter:

-

-```bash

-yarn rw prisma db seed | yarn rw-log-formatter

-```

-

-> Note: Just be sure to set the `data` attribute, so the formatter recognizes the content.

-> For example: `logger.debug({ data: newPost }, 'Added post')`

-

-### prisma migrate

-

-Update the database schema with migrations.

-

-> 👉 Quick link to the [Prisma Concepts](https://www.prisma.io/docs/concepts/components/prisma-migrate).

-

-```

-yarn redwood prisma migrate

-```

-

-As a database toolkit, Prisma strives to be as holistic as possible. Prisma Migrate lets you use Prisma schema to make changes to your database declaratively, all while keeping things deterministic and fully customizable by generating the migration steps in a simple, familiar format: SQL.

-

-Since migrate generates plain SQL files, you can edit those SQL files before applying the migration by using `yarn redwood prisma migrate dev --create-only`. This creates the migration based on the changes in the Prisma schema, but doesn't apply it, giving you the chance to go in and make any modifications you want. [Daniel Norman's tour of Prisma Migrate](https://www.youtube.com/watch?v=0LKhksstrfg) demonstrates this and more to great effect.

-

-Prisma Migrate has separate commands for applying migrations based on whether you're in dev or in production. Prisma's [Migration flows](https://www.prisma.io/docs/concepts/components/prisma-migrate/prisma-migrate-flows) doc goes over the difference between these workflows in more detail.

-

-#### prisma migrate dev

-

-Create a migration from changes in Prisma schema, apply it to the database, trigger generators (e.g. Prisma Client).

-

-> 👉 Quick link to the [Prisma CLI Reference](https://www.prisma.io/docs/reference/api-reference/command-reference#migrate-dev).

-

-```

-yarn redwood prisma migrate dev

-```

-


-#### prisma migrate deploy

-

-Apply pending migrations to update the database schema in production/staging.

-

-> 👉 Quick link to the [Prisma CLI Reference](https://www.prisma.io/docs/reference/api-reference/command-reference#migrate-deploy).

-

-```

-yarn redwood prisma migrate deploy

-```

-

-#### prisma migrate reset

-

-This command deletes and recreates the database, or performs a "soft reset" by removing all data, tables, indexes, and other artifacts.

-

-It'll also re-seed your database by automatically running the `db seed` command. See [prisma db seed](#prisma-db-seed).

-

-> **_Important:_** For use in development environments only.

-

-## record

-

-> This command is experimental and its behavior may change.

-

-Commands for working with RedwoodRecord.

-

-### record init

-

-Parses `schema.prisma` and caches the datamodel as JSON. Reads relationships between models and adds some configuration in `api/src/models/index.js`.

-

-```

-yarn rw record init

-```

-

-## redwood-tools (alias rwt)

-

-Redwood's companion CLI development tool. You'll be using this if you're contributing to Redwood. See [Contributing](https://github.com/redwoodjs/redwood/blob/main/CONTRIBUTING.md#cli-reference-redwood-tools) in the Redwood repo.

-

-## setup

-

-Initialize configuration and integrate third-party libraries effortlessly.

-

-```

-yarn redwood setup

-```

-

-| Commands | Description |

-| ------------------ | ------------------------------------------------------------------------------------------ |

-| `auth` | Set up auth configuration for a provider |

-| `custom-web-index` | Set up an `index.js` file, so you can customize how Redwood web is mounted in your browser |

-| `deploy` | Set up a deployment configuration for a provider |

-| `generator` | Copy default Redwood generator templates locally for customization |

-| `i18n` | Set up i18n |

-| `tsconfig` | Add relevant tsconfig so you can start using TypeScript |

-| `ui` | Set up a UI design or style library |

-| `webpack` | Set up a webpack config file in your project so you can add custom config |

-

-### setup auth

-

-Integrate an auth provider.

-

-```

-yarn redwood setup auth

-```

-

-| Arguments & Options | Description |

-| :------------------ | :---------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

-| `provider` | Auth provider to configure. Choices are `auth0`, `azureActiveDirectory`, `clerk`, `dbAuth`, `ethereum`, `firebase`, `goTrue`, `magicLink`, `netlify`, `nhost`, and `supabase` |

-| `--force, -f` | Overwrite existing configuration |

-

-**Usage**

-

-See [Authentication](authentication.md).

-

-### setup custom-web-index

-

-Redwood automatically mounts your `` to the DOM, but if you want to customize how that happens, you can use this setup command to generate an `index.js` file in `web/src`.

-

-```

-yarn redwood setup custom-web-index

-```

-

-| Arguments & Options | Description |

-| :------------------ | :----------------------- |

-| `--force, -f` | Overwrite existing files |

-

-### setup generator

-

-Copies a given generator's template files to your local app for customization. The next time you generate that type again, it will use your custom template instead of Redwood's default.

-

-```

-yarn rw setup generator

-```

-

-| Arguments & Options | Description |

-| :------------------ | :------------------------------------------------------------ |

-| `name` | Name of the generator template(s) to copy (see help for list) |

-| `--force, -f` | Overwrite existing copied template files |

-

-**Usage**

-

-If you wanted to customize the page generator template, run the command:

-

-```

-yarn rw setup generator page

-```

-

-And then check `web/generators/page` for the page, storybook and test template files. You don't need to keep all of these templates—you could customize just `page.tsx.template` and delete the others and they would still be generated, but using the default Redwood templates.

-

-The only exception to this rule is the scaffold templates. You'll get four directories, `assets`, `components`, `layouts` and `pages`. If you want to customize any one of the templates in those directories, you will need to keep all the other files inside of that same directory, even if you make no changes besides the one you care about. (This is due to the way the scaffold looks up its template files.) For example, if you wanted to customize only the index page of the scaffold (the one that lists all available records in the database) you would edit `web/generators/scaffold/pages/NamesPage.tsx.template` and keep the other pages in that directory. You _could_ delete the other three directories (`assets`, `components`, `layouts`) if you don't need to customize them.

-

-**Name Variants**

-

-Your template will receive the provided `name` in a number of different variations.

-

-For example, given the name `fooBar`, your template will receive the following _variables_ with the given _values_:

-

-| Variable | Value |

-| :------------------------ | :------------ |

-| `pascalName` | `FooBar` |

-| `camelName` | `fooBar` |

-| `singularPascalName` | `FooBar` |

-| `pluralPascalName` | `FooBars` |

-| `singularCamelName` | `fooBar` |

-| `pluralCamelName` | `fooBars` |

-| `singularParamName` | `foo-bar` |

-| `pluralParamName` | `foo-bars` |

-| `singularConstantName` | `FOO_BAR` |

-| `pluralConstantName` | `FOO_BARS` |

-
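As a rough illustration of how the variants in the table relate to one another, here is a minimal sketch that derives them from a single name. The helper names (`words`, `pascal`, `camel`, `param`, `constant`, `plural`, `nameVariants`) are hypothetical and are not Redwood's implementation; in particular, pluralization is assumed to be a naive "append s", which will not handle irregular nouns.

```javascript
// Hypothetical helpers for illustration only; Redwood's generators may derive names differently.

// Split a camelCase or PascalCase name into lowercase words: "fooBar" -> ["foo", "bar"].
const words = (name) =>
  name
    .replace(/([a-z0-9])([A-Z])/g, '$1 $2')
    .split(/[\s_-]+/)
    .filter(Boolean)
    .map((word) => word.toLowerCase())

const capitalize = (word) => word.charAt(0).toUpperCase() + word.slice(1)

const pascal = (name) => words(name).map(capitalize).join('')

const camel = (name) => {
  const pascalName = pascal(name)
  return pascalName.charAt(0).toLowerCase() + pascalName.slice(1)
}

const param = (name) => words(name).join('-')

const constant = (name) => words(name).join('_').toUpperCase()

// Assumption: naive pluralization that only appends "s".
const plural = (name) => `${name}s`

// Build the same set of variables listed in the table above.
const nameVariants = (name) => ({
  pascalName: pascal(name),
  camelName: camel(name),
  singularPascalName: pascal(name),
  pluralPascalName: pascal(plural(name)),
  singularCamelName: camel(name),
  pluralCamelName: camel(plural(name)),
  singularParamName: param(name),
  pluralParamName: param(plural(name)),
  singularConstantName: constant(name),
  pluralConstantName: constant(plural(name)),
})

console.log(nameVariants('fooBar'))
```

Inside a template, you would reference these variables by name rather than compute them yourself; the sketch only shows which value each variable is expected to hold.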

-**Example**

-

-Copying the cell generator templates:

-

-```terminal

-~/redwood-app$ yarn rw setup generator cell

-yarn run v1.22.4

-$ /redwood-app/node_modules/.bin/rw setup generator cell

- ✔ Copying generator templates...

- ✔ Wrote templates to /web/generators/cell

-✨ Done in 2.33s.

-```

-

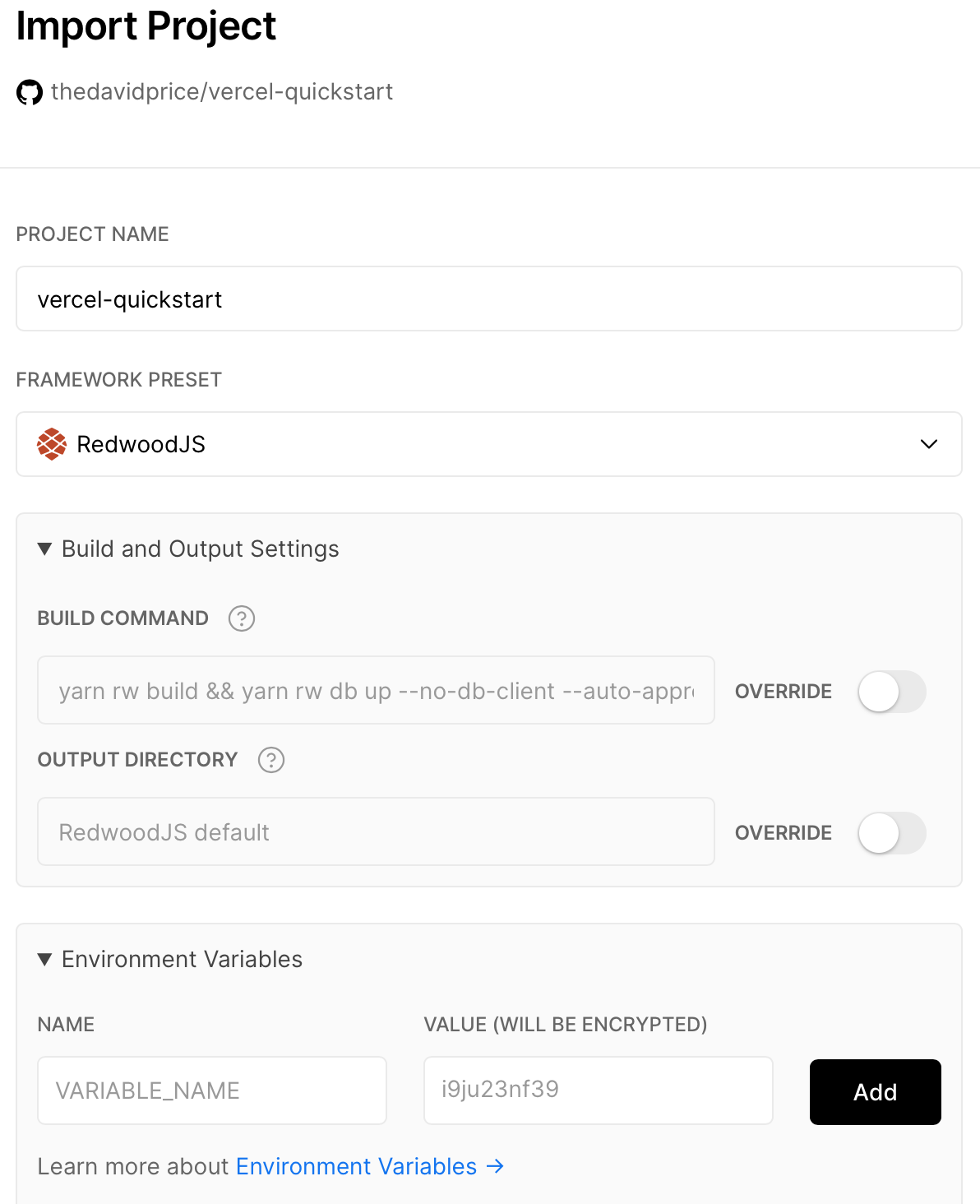

-### setup deploy (config)

-

-Set up a deployment configuration.

-

-```

-yarn redwood setup deploy <provider>

-```

-

-| Arguments & Options | Description |

-| :------------------ | :---------------------------------------------------------------------------------------------------- |

-| `provider` | Deploy provider to configure. Choices are `aws-serverless`, `netlify`, `render`, or `vercel` |

-| `--database, -d` | Database deployment for Render only [choices: "none", "postgresql", "sqlite"] [default: "postgresql"] |

-| `--force, -f` | Overwrite existing configuration [default: false] |

-

-#### setup deploy netlify

-

-When configuring Netlify deployment, the `setup deploy netlify` command generates a `netlify.toml` [configuration file](https://docs.netlify.com/configure-builds/file-based-configuration/) with the defaults needed to build and deploy a RedwoodJS site on Netlify.

-

-The `netlify.toml` file is a configuration file that specifies how Netlify builds and deploys your site — including redirects, branch and context-specific settings, and more.

-

-This configuration file also defines the settings needed for [Netlify Dev](https://docs.netlify.com/configure-builds/file-based-configuration/#netlify-dev) to detect that your site uses the RedwoodJS framework. Netlify Dev serves your RedwoodJS app as if it runs on the Netlify platform and can serve functions, handle Netlify [headers](https://docs.netlify.com/configure-builds/file-based-configuration/#headers) and [redirects](https://docs.netlify.com/configure-builds/file-based-configuration/#redirects).

-

-Netlify Dev can also create a tunnel from your local development server that allows you to share and collaborate with others using `netlify dev --live`.

-

-```

-# See: netlify.toml

-# ...

-[dev]

- # To use [Netlify Dev](https://www.netlify.com/products/dev/),

- # install netlify-cli from https://docs.netlify.com/cli/get-started/#installation

- # and then use netlify link https://docs.netlify.com/cli/get-started/#link-and-unlink-sites

- # to connect your local project to a site already on Netlify

- # then run netlify dev and our app will be accessible on the port specified below

- framework = "redwoodjs"

- # Set targetPort to the [web] side port as defined in redwood.toml

- targetPort = 8910

- # Point your browser to this port to access your RedwoodJS app

- port = 8888

-```

-

-In order to use [Netlify Dev](https://www.netlify.com/products/dev/) you need to:

-

-- install the latest [netlify-cli](https://docs.netlify.com/cli/get-started/#installation)

-- use [netlify link](https://docs.netlify.com/cli/get-started/#link-and-unlink-sites) to connect to your Netlify site

-- ensure that the `targetPort` matches the [web] side port in `redwood.toml`

-- run `netlify dev` and your site will be served on the specified `port` (e.g., 8888)

-- if you wish to share your local server with others, you can run `netlify dev --live`

-

-> Note: To detect the RedwoodJS framework, please use netlify-cli v3.34.0 or greater.

-

-### setup tsconfig

-

-Add a `tsconfig.json` to both the web and api sides so you can start using [TypeScript](typescript.md).

-

-```

-yarn redwood setup tsconfig

-```

-

-| Arguments & Options | Description |

-| :------------------ | :----------------------- |

-| `--force, -f` | Overwrite existing files |

-

-### setup ui

-

-Set up a UI design or style library. Right now the choices are [Chakra UI](https://chakra-ui.com/) and [TailwindCSS](https://tailwindcss.com/).

-

-```

-yarn rw setup ui <library>

-```

-

-| Arguments & Options | Description |

-| :------------------ | :-------------------------------------------------------------- |

-| `library` | Library to configure. Choices are `chakra-ui` and `tailwindcss` |

-| `--force, -f` | Overwrite existing configuration |

-

-## storybook

-

-Starts Storybook locally.

-

-```terminal

-yarn redwood storybook

-```

-

-[Storybook](https://storybook.js.org/docs/react/get-started/introduction) is a tool for UI development that allows you to develop your components in isolation, away from all the conflated cruft of your real app.

-

-> "Props in, views out! Make it simple to reason about."

-

-RedwoodJS supports Storybook by creating stories when generating cells, components, layouts and pages. You can then use these to describe how to render that UI component with representative data.

-

-| Arguments & Options | Description |

-| :------------------ | :------------------------------------------------ |

-| `--open` | Open Storybook in your browser on start |

-| `--build` | Build Storybook |

-| `--port` | Which port to run Storybook on (defaults to 7910) |

-

-## test

-

-Run Jest tests for api and web.

-

-```terminal

-yarn redwood test [side..]

-```

-

-| Arguments & Options | Description |

-| ------------------- | -------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

-| `sides or filter` | Which side(s) to test, and/or a regular expression to match against your test files to filter by |

-| `--help` | Show help |

-| `--version` | Show version number |

-| `--watch` | Run tests related to changed files based on hg/git (uncommitted files). Specify the name or path to a file to focus on a specific set of tests [default: true] |

-| `--watchAll` | Run all tests |

-| `--collectCoverage` | Show test coverage summary and output info to `coverage` directory in project root. See this directory for an .html coverage report |

-| `--clearCache` | Delete the Jest cache directory and exit without running tests |

-| `--db-push` | Syncs the test database with your Prisma schema without requiring a migration. It creates a test database if it doesn't already exist [default: true]. This flag is ignored if your project doesn't have an `api` side. [👉 More details](#prisma-db-push). |

-

-> **Note:** All other flags are passed on to the Jest CLI. For example, to update your snapshots, pass the `-u` flag.

-

-## type-check

-

-Runs a TypeScript compiler check on both the api and the web sides.

-

-```terminal

-yarn redwood type-check [side]

-```

-

-| Arguments & Options | Description |

-| ------------------- | ------------------------------------------------------------------------------ |

-| `side` | Which side(s) to run. Choices are `api` and `web`. Defaults to `api` and `web` |

-

-**Usage**

-

-See [Running Type Checks](typescript.md#running-type-checks).

-

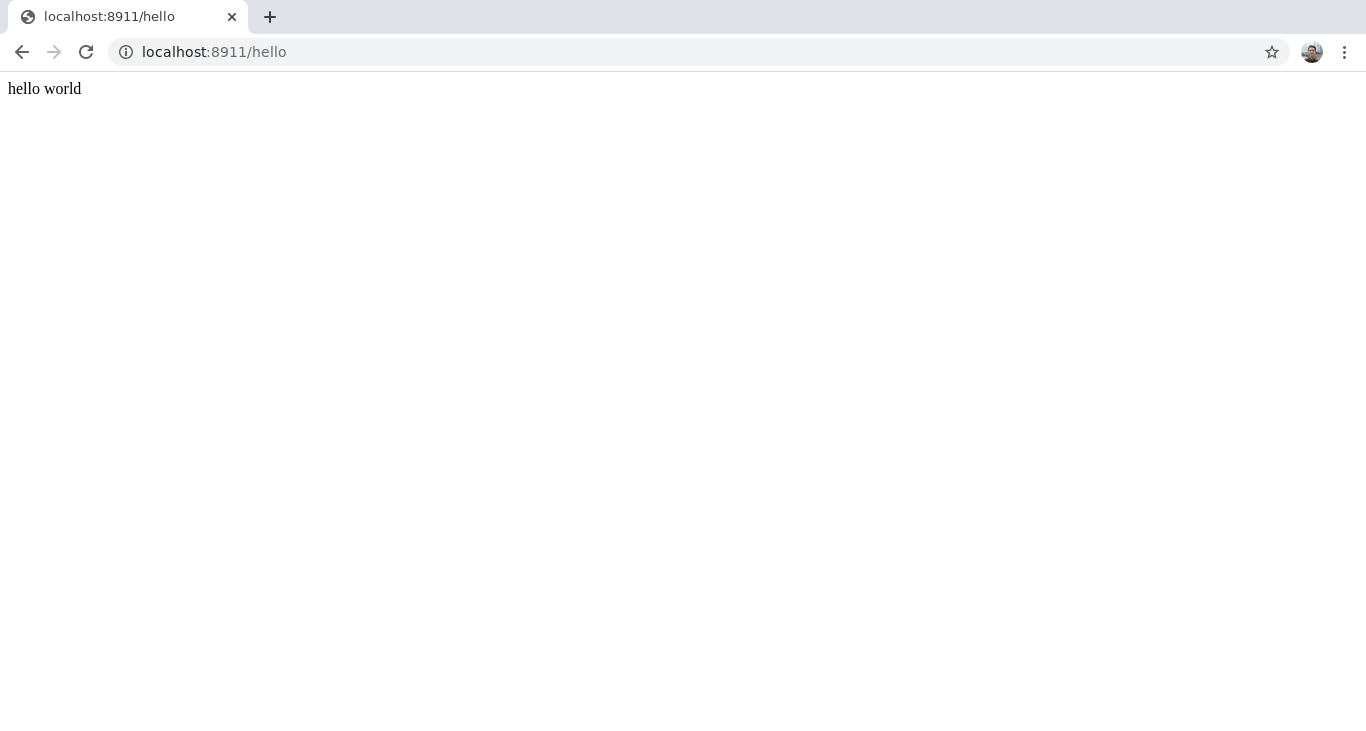

-## serve

-

-Runs a server that serves both the api and the web sides.

-

-```terminal

-yarn redwood serve [side]

-```

-

-> You should run `yarn rw build` before running this command to make sure all the static assets that will be served have been built.

-

-`yarn rw serve` is useful for debugging locally or for self-hosting—deploying a single server into a serverful environment. Since both the api and the web sides run in the same server, CORS isn't a problem.

-

-| Arguments & Options | Description |

-| ------------------- | ------------------------------------------------------------------------------ |

-| `side` | Which side(s) to run. Choices are `api` and `web`. Defaults to `api` and `web` |

-| `--port` | What port should the server run on [default: 8911] |

-| `--socket` | The socket the server should run on. This takes precedence over port |

-

-### serve api

-

-Runs a server that only serves the api side.

-

-```

-yarn rw serve api

-```

-

-This command uses `apiUrl` in your `redwood.toml`. Use this command if you want to run just the api side on a server (e.g. running on Render).

-

-| Arguments & Options | Description |

-| ------------------- | ----------------------------------------------------------------- |

-| `--port` | What port should the server run on [default: 8911] |

-| `--socket` | The socket the server should run on. This takes precedence over port |

-| `--apiRootPath` | The root path where your api functions are served |

-

-For the full list of Server Configuration settings, see [this documentation](app-configuration-redwood-toml.md#api).

-

-If you want to format your log output, you can pipe the command to the Redwood LogFormatter:

-

-```

-yarn rw serve api | yarn rw-log-formatter

-```

-

-### serve web

-

-Runs a server that only serves the web side.

-

-```

-yarn rw serve web

-```

-

-This command serves the contents in `web/dist`. Use this command if you're debugging (e.g. great for debugging prerender) or if you want to run your api and web sides on separate servers, which is often considered a best practice for scalability (since your api side likely has much higher scaling requirements).

-

-> **But shouldn't I use nginx and/or equivalent technology to serve static files?**

->

-> Probably, but it can be a challenge to set up when you just want something running quickly!

-

-| Arguments & Options | Description |

-| ------------------- | ------------------------------------------------------------------------------------- |

-| `--port` | What port should the server run on [default: 8911] |

-| `--socket` | The socket the server should run on. This takes precedence over port |

-| `--apiHost` | Forwards requests from the `apiUrl` (defined in `redwood.toml`) to the specified host |

-

-If you want to format your log output, you can pipe the command to the Redwood LogFormatter:

-

-```

-yarn rw serve web | yarn rw-log-formatter

-```

-

-## upgrade

-

-Upgrade all `@redwoodjs` packages via an interactive CLI.

-

-```terminal

-yarn redwood upgrade

-```

-

-This command does all the heavy-lifting of upgrading to a new release for you.

-

-Besides upgrading to a new stable release, you can use this command to upgrade to either of our unstable releases, `canary` and `rc`, or you can upgrade to a specific release version.

-

-A canary release is published to npm every time a PR is merged to the `main` branch, and when we're getting close to a new release, we publish release candidates.

-

-| Option | Description |

-| :-------------- | :----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

-| `--dry-run, -d` | Check for outdated packages without upgrading |

-| `--tag, -t` | Choices are "canary", "rc", or a specific version (e.g. "0.19.3"). WARNING: "canary" and "rc" are unstable releases, and choosing either force-upgrades packages to the most recent release of that tag. |

-

-**Example**

-

-Upgrade to the most recent canary:

-

-```terminal

-yarn redwood upgrade -t canary

-```

-

-Upgrade to a specific version:

-

-```terminal

-yarn redwood upgrade -t 0.19.3

-```

diff --git a/docs/versioned_docs/version-1.0/connection-pooling.md b/docs/versioned_docs/version-1.0/connection-pooling.md

deleted file mode 100644

index c6bf7d300212..000000000000

--- a/docs/versioned_docs/version-1.0/connection-pooling.md

+++ /dev/null

@@ -1,75 +0,0 @@

-# Connection Pooling

-

-> ⚠ **Work in Progress** ⚠️

->

-> There's more to document here. In the meantime, you can check our [community forum](https://community.redwoodjs.com/search?q=connection%20pooling) for answers.

->

-> Want to contribute? Redwood welcomes contributions and loves helping people become contributors.

-> You can edit this doc [here](https://github.com/redwoodjs/redwoodjs.com/blob/main/docs/connectionPooling.md).

-> If you have any questions, just ask for help! We're active on the [forums](https://community.redwoodjs.com/c/contributing/9) and on [discord](https://discord.com/channels/679514959968993311/747258086569541703).

-

-Production Redwood apps should enable connection pooling in order to properly scale with your Serverless functions.

-## Prisma Pooling with PgBouncer

-

-PgBouncer holds a connection pool to the database and proxies incoming client connections by sitting between Prisma Client and the database. This reduces the number of processes a database has to handle at any given time. PgBouncer passes on a limited number of connections to the database and queues additional connections for delivery when space becomes available.

-

-

-To use Prisma Client with PgBouncer from a serverless function, add the `?pgbouncer=true` flag to the PostgreSQL connection URL:

-

-```

-postgresql://USER:PASSWORD@HOST:PORT/DATABASE?pgbouncer=true

-```

-

-Typically, your PgBouncer port will be 6543, which differs from the Postgres default of 5432.

-
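The port change and the `?pgbouncer=true` flag described above can be sketched with the standard `URL` class available in Node. `toPooledUrl`, the example host, and the credentials are hypothetical, and 6543 is only the typical PgBouncer port, so check the port your provider actually uses.

```javascript
// Hypothetical helper for illustration; it is not part of Redwood or Prisma.
// Turns a direct Postgres connection URL into one that goes through PgBouncer.
const toPooledUrl = (directUrl, poolPort = 6543) => {
  const url = new URL(directUrl)
  // Point at the pooler's port instead of the Postgres default (5432).
  url.port = String(poolPort)
  // Tell Prisma Client that it is talking to PgBouncer.
  url.searchParams.set('pgbouncer', 'true')
  return url.toString()
}

// Example values are made up.
const directUrl = 'postgresql://user:secret@db.example.com:5432/mydb'
console.log(toPooledUrl(directUrl))
```

A sketch like this suggests keeping both URLs around: the pooled one for serverless functions and the direct one for migrations.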

-> Note that since Prisma Migrate uses database transactions to check out the current state of the database and the migrations table, if you attempt to run Prisma Migrate commands in any environment that uses PgBouncer for connection pooling, you might see an error.

->

-> To work around this issue, you must connect directly to the database rather than going through PgBouncer when migrating.

-

-For more information on Prisma and PgBouncer, please refer to Prisma's Guide on [Configuring Prisma Client with PgBouncer](https://www.prisma.io/docs/guides/performance-and-optimization/connection-management/configure-pg-bouncer).

-

-## Supabase

-

-For Postgres running on [Supabase](https://supabase.io) see: [PgBouncer is now available in Supabase](https://supabase.io/blog/2021/04/02/supabase-pgbouncer#using-connection-pooling-in-supabase).

-

-All new Supabase projects include connection pooling using [PgBouncer](https://www.pgbouncer.org/).

-

-We recommend that you connect to your Supabase Postgres instance using SSL which you can do by setting `sslmode` to `require` on the connection string:

-

-```

-// not pooled typically uses port 5432

-postgresql://postgres:PASSWORD@mydb.supabase.co:5432/postgres?sslmode=require

-// pooled typically uses port 6543

-postgresql://postgres:PASSWORD@mydb.supabase.co:6543/postgres?sslmode=require&pgbouncer=true

-```

-

-## Heroku

-For Postgres, see [Postgres Connection Pooling](https://devcenter.heroku.com/articles/postgres-connection-pooling).

-

-Heroku does not officially support MySQL.

-

-

-## Digital Ocean

-For Postgres, see [How to Manage Connection Pools](https://www.digitalocean.com/docs/databases/postgresql/how-to/manage-connection-pools)

-

-Connection Pooling for MySQL is not yet supported.

-

-## AWS

-Use [Amazon RDS Proxy](https://aws.amazon.com/rds/proxy) for MySQL or PostgreSQL.

-

-From the [AWS Docs](https://docs.aws.amazon.com/AmazonRDS/latest/UserGuide/rds-proxy.html#rds-proxy.limitations):

-> Your RDS Proxy must be in the same VPC as the database. The proxy can't be publicly accessible.

-

-Because of this limitation, with out-of-the-box configuration, you can only use RDS Proxy if you're deploying your Lambda Functions to the same AWS account. Alternatively, you can use RDS directly, but you might require larger instances to handle your production traffic and the number of concurrent connections.

-

-

-## Why Connection Pooling?

-

-Relational databases have a maximum number of concurrent client connections.

-

-* Postgres allows 100 by default

-* MySQL allows 151 by default

-

-In a traditional server environment, you would need a large amount of traffic (and therefore web servers) to exhaust these connections, since each web server instance typically leverages a single connection.

-

-In a Serverless environment, each function connects directly to the database, which can exhaust limits quickly. To prevent connection errors, you should add a connection pooling service in front of your database. Think of it as a load balancer.
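The arithmetic behind this can be sketched as follows. This is a deliberately rough model: it assumes every concurrent function invocation holds exactly one database connection, and `connectionsLeft`, `connectionsLeftWithPool`, and the traffic figures are hypothetical. The limits are the defaults quoted above.

```javascript
// Default connection limits quoted in this doc.
const DEFAULT_MAX_CONNECTIONS = { postgres: 100, mysql: 151 }

// Assumption: each concurrent serverless invocation opens its own connection.
// A negative result means the database would start refusing connections.
const connectionsLeft = (database, concurrentInvocations) =>
  DEFAULT_MAX_CONNECTIONS[database] - concurrentInvocations

// With a pooler in front, the database only sees the pooler's connections,
// no matter how many invocations are queued behind it (poolSize is hypothetical).
const connectionsLeftWithPool = (database, poolSize) =>
  DEFAULT_MAX_CONNECTIONS[database] - poolSize

console.log(connectionsLeft('postgres', 120)) // 120 invocations against a limit of 100
console.log(connectionsLeftWithPool('postgres', 20)) // a 20-connection pool against the same limit
```

Real limits depend on your database plan and configuration, so treat the numbers as placeholders.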

diff --git a/docs/versioned_docs/version-1.0/contributing-overview.md b/docs/versioned_docs/version-1.0/contributing-overview.md

deleted file mode 100644

index fa0c63a2bc04..000000000000

--- a/docs/versioned_docs/version-1.0/contributing-overview.md

+++ /dev/null

@@ -1,177 +0,0 @@

----

-slug: contributing

----

-

-# Contributing: Overview and Orientation

-

-Love Redwood and want to get involved? You’re in the right place and in good company! As of this writing, there are more than [250 contributors](https://github.com/redwoodjs/redwood/blob/main/README.md#contributors) who have helped make Redwood awesome by contributing code and documentation. This doesn't include all those who participate in the vibrant, helpful, and encouraging Forums and Discord, which are both great places to get started if you have any questions.

-

-There are several ways you can contribute to Redwood:

-

-- join the [community Forums](https://community.redwoodjs.com/) and [Discord server](https://discord.gg/jjSYEQd) — encourage and help others 🙌

-- [triage issues on the repo](https://github.com/redwoodjs/redwood/issues) and [review PRs](https://github.com/redwoodjs/redwood/pulls) 🩺

-- write and edit [docs](#contributing-docs) ✍️

-- and of course, write code! 👩‍💻

-

-_Before interacting with the Redwood community, please read and understand our [Code of Conduct](https://github.com/redwoodjs/redwood/blob/main/CODE_OF_CONDUCT.md#contributor-covenant-code-of-conduct)._

-

-> ⚡️ **Quick Links**

->

-> There are several contributing docs and references, each covering specific topics:

->

-> 1. 🧭 **Overview and Orientation** (👈 you are here)

-> 2. 📓 [Reference: Contributing to the Framework Packages](https://github.com/redwoodjs/redwood/blob/main/CONTRIBUTING.md)

-> 3. 🪜 [Step-by-step Walkthrough](contributing-walkthrough.md) (including Video Recording)

-> 4. 📈 [Current Project Status: v1 Release Board](https://github.com/orgs/redwoodjs/projects/6)

-> 5. 🤔 What should I work on?

-> - ["Help Wanted" v1 Triage Board](https://redwoodjs.com/good-first-issue)

-> - [Discovery Process and Open Issues](#what-should-i-work-on)

-

-## The Characteristics of a Contributor

-More than committing code, contributing is about human collaboration and relationships. Our community mantra is **“By helping each other be successful with Redwood, we make the Redwood project successful.”** We have a specific vision for the effect this project and community will have on you: it should give you superpowers to build and create, help you progress in your skills, and help advance your career.

-

-So who do you need to become to achieve this? Specifically, what characteristics, skills, and capabilities will you need to cultivate through practice? Here are our suggestions:

-- Empathy

-- Gratitude

-- Generosity

-

-All of these are applicable in relation to both others and yourself. The goal of putting them into practice is to create trust that will be a catalyst for risk-taking (another word to describe this process is “learning”!). These are the ingredients necessary for productive, positive collaboration.

-

-And you thought all this was just about opening a PR 🤣 Yes, it’s a super rewarding experience. But that’s just the beginning!

-

-## What should I work on?

-Even if you know the mechanics, it’s hard to get started without a starting place. Our best advice is this: dive into the Redwood Tutorial, read the docs, and build your own experiment with Redwood. Along the way, you’ll find typos, out-of-date (or missing) documentation, code that could work better, or even opportunities for improving and adding features. You’ll be engaging in the Forums and Chat and developing a feel for priorities and needs. This way, you’ll naturally follow your own interests and, sooner rather than later, find where “things you’re interested in” and “ways to help improve Redwood” intersect.

-

-There are other more direct ways to get started as well, which are outlined below.

-

-### Roadmap to Redwood v1: Project Boards and GitHub Issues

-Over the next few months, our focus is to achieve a v1.0.0 release of Redwood. You can read Tom’s important announcement about the v1 release candidate process [via this forum post](https://community.redwoodjs.com/t/what-the-1-0-release-candidate-phase-means-and-when-1-0-will-drop/2604).

-

-> **What a v1 release candidate means:**

->

-> 1. all core features are complete and

-> 2. we are done making breaking changes

->

-> During the release candidate cycle, we are completing all remaining tasks necessary to publish v1.0.0 GA.

-

-The Redwood Core Team is working publicly — progress is updated daily on the [Release Project Board](https://github.com/orgs/redwoodjs/projects/6). There’s also a Triage Board, including an important tab view of Issues that are priorities but need community help.

-- 👉 **Start here**: [v1 Help Wanted tab on the Triage Project Board](https://github.com/orgs/redwoodjs/projects/4) (sorted by difficulty)

-

-

-Eventually, all this leads you back to Redwood’s GitHub Issues page. Here you’ll find open items that need help, which are organized by labels. There are four labels helpful for contributing:

-1. [Good First Issue](https://github.com/redwoodjs/redwood/issues?q=is%3Aissue+is%3Aopen+label%3A%22good+first+issue%22): these items are more likely to be an accessible entry point to the Framework. It’s less about skill level and more about focused scope.

-2. [Help Wanted](https://github.com/redwoodjs/redwood/issues?q=is%3Aissue+is%3Aopen+label%3A%22help+wanted%22): these items especially need contribution help from the community.

-3. [v1 Priority](https://github.com/redwoodjs/redwood/issues?q=is%3Aissue+is%3Aopen+label%3Av1%2Fpriority+): to reach Redwood v1.0.0, we need to close all Issues with this label.

-4. [Bugs 🐛](https://github.com/redwoodjs/redwood/issues?q=is%3Aissue+is%3Aopen+label%3Abug%2Fconfirmed): Last but not least, we always need help with bugs. Some are technically less challenging than others. Sometimes the best way you can help is to attempt to reproduce the bug and confirm whether or not it’s still an issue.

-

-**The sweet spot is a “v1 Priority” Issue that’s either a “Good First Issue” or “Help Wanted”.** Yes, please!

-

-### Create a New Issue

-Anyone can create a new Issue. If you’re not sure that your feature or idea is something to work on, start the discussion with an Issue. Describe the idea and problem + solution as clearly as possible, including examples or pseudo code if applicable. It’s also very helpful to `@` mention a maintainer or Core Team member that shares the area of interest.

-

-Just know that there are a lot of Issues, and they shuffle every day. If no one replies, it’s just because people are busy. Reach out in the Forums, Chat, or comment in the Issue. We intend to reply to every Issue that’s opened. If yours doesn’t have a reply, then give us a nudge!

-

-Lastly, it can often be helpful to start with a brief discussion in the community Chat or Forums. Sometimes that’s the quickest way to get feedback and a sense of priority before opening an Issue.

-

-## Contributing Code

-

-Redwood's composed of many packages that are designed to work together. Some of these packages are designed to be used outside Redwood too!

-

-Before you start contributing, you'll want to set up your local development environment. The Redwood repo's top-level [contributing guide](https://github.com/redwoodjs/redwood/blob/main/CONTRIBUTING.md#local-development) walks you through this. Make sure to give it an initial read.

-

-For details on contributing to a specific package, see the package's README (links provided in the table below). Each README has a section named Roadmap. If you want to get involved but don't quite know how, the Roadmap's a good place to start. See anything that interests you? Go for it! And be sure to let us know—you don't have to have a finished product before opening an issue or pull request. In fact, we're big fans of [Readme Driven Development](https://tom.preston-werner.com/2010/08/23/readme-driven-development.html).

-

-Is what you want to do not on the roadmap? Well, still go for it! We love spikes and proof-of-concepts. And if you have a question, just ask!

-

-### RedwoodJS Framework Packages

-|Package|Description|

-|:-|:-|

-|[`@redwoodjs/api-server`](https://github.com/redwoodjs/redwood/blob/main/packages/api-server/README.md)|Run a Redwood app using Express server (alternative to serverless API)|

-|[`@redwoodjs/api`](https://github.com/redwoodjs/redwood/blob/main/packages/api/README.md)|Infrastructure components for your application's UI including logging, webhooks, authentication decoders and parsers, as well as tools to test custom serverless functions and webhooks|

-|[`@redwoodjs/auth`](https://github.com/redwoodjs/redwood/blob/main/packages/auth/README.md#contributing)|A lightweight wrapper around popular SPA authentication libraries|

-|[`@redwoodjs/cli`](https://github.com/redwoodjs/redwood/blob/main/packages/cli/README.md)|All the commands for Redwood's built-in CLI|

-|[`@redwoodjs/core`](https://github.com/redwoodjs/redwood/blob/main/packages/core/README.md)|Defines babel plugins and config files|

-|[`@redwoodjs/create-redwood-app`](https://github.com/redwoodjs/redwood/blob/main/packages/create-redwood-app/README.md)|Enables `yarn create redwood-app`—downloads the latest release of Redwood and extracts it into the supplied directory|

-|[`@redwoodjs/dev-server`](https://github.com/redwoodjs/redwood/blob/main/packages/dev-server/README.md)|Configuration for the local development server|

-|[`@redwoodjs/eslint-config`](https://github.com/redwoodjs/redwood/blob/main/packages/eslint-config/README.md)|Defines Redwood's eslint config|

-|[`@redwoodjs/eslint-plugin-redwood`](https://github.com/redwoodjs/redwood/blob/main/packages/eslint-plugin-redwood/README.md)|Defines eslint plugins; currently just prohibits the use of non-existent pages in `Routes.js`|

-|[`@redwoodjs/forms`](https://github.com/redwoodjs/redwood/blob/main/packages/forms/README.md)|Provides Form helpers|

-|[`@redwoodjs/graphql-server`](https://github.com/redwoodjs/redwood/blob/main/packages/graphql-server/README.md)|Exposes functions to build the GraphQL API, provides services with `context`, and a set of envelop plugins to supercharge your GraphQL API with logging, authentication, error handling, directives and more|

-|[`@redwoodjs/internal`](https://github.com/redwoodjs/redwood/blob/main/packages/internal/README.md)|Provides tooling to parse Redwood configs and get a project's paths|

-|[`@redwoodjs/router`](https://github.com/redwoodjs/redwood/blob/main/packages/router/README.md)|The built-in router for Redwood|

-|[`@redwoodjs/structure`](https://github.com/redwoodjs/redwood/blob/main/packages/structure/README.md)|Provides a way to build, validate and inspect an object graph that represents a complete Redwood project|

-|[`@redwoodjs/testing`](https://github.com/redwoodjs/redwood/blob/main/packages/testing/README.md)|Provides helpful defaults when testing a Redwood project's web side|

-|[`@redwoodjs/web`](https://github.com/redwoodjs/redwood/blob/main/packages/web/README.md)|Configures a Redwood app's web side: wraps the Apollo Client in `RedwoodApolloProvider`; defines the Cell HOC|

-

-## Contributing Docs

-

-First off, thank you for your interest in contributing docs! Redwood prides itself on good developer experience, and that includes good documentation.

-

-Before you get started, there's an implicit doc-distinction that we should make explicit: all the docs on redwoodjs.com are for helping people develop apps using Redwood, while all the docs on the Redwood repo are for helping people contribute to Redwood.

-

-Although Developing and Contributing docs are in different places, they most definitely should be linked and referenced as needed. For example, it's appropriate to have a "Contributing" doc on redwoodjs.com that's context-appropriate, but it should link to the Framework's [CONTRIBUTING.md](https://github.com/redwoodjs/redwood/blob/main/CONTRIBUTING.md) (the way this doc does).

-

-### How Redwood Thinks about Docs

-

-Before we get into the how-to, a little explanation. When thinking about docs, we find [divio's documentation system](https://documentation.divio.com/) really useful. It's not necessary that a doc always have all four of the dimensions listed, but if you find yourself stuck, you can ask yourself questions like "Should I be explaining? Am I explaining too much? Too little?" to reorient yourself while writing.

-

-### Docs for Developing Redwood Apps

-

-redwoodjs.com has three kinds of Developing docs: References, How To's, and The Tutorial.

-You can find References and How To's within their respective directories on the redwoodjs/redwood repo: [docs/](https://github.com/redwoodjs/redwood/tree/main/docs) and [how-to/](https://github.com/redwoodjs/redwood/tree/main/docs/how-to).

-

-The Tutorial is a standalone document that serves a specific purpose as an introduction to Redwood, an aspirational roadmap, and an example of developer experience. As such, it's distinct from the categories mentioned, although it's most similar to How To's.

-

-#### References

-

-References are explanation-driven how-to content. They're more direct and to-the-point than The Tutorial and How To's. The idea is much more about finding something or getting something done than any kind of learning journey.

-

-Before you take on a doc, you should read [Forms](forms.md) and [Router](router.md); they have the kind of content you should be striving for. They're comprehensive yet conversational.

-

-In general, don't be afraid to go into too much detail. We'd rather you err on the side of too much than too little. One tip for finding good content is searching the forum and repo for "prior art"—what are people talking about where this comes up?

-

-#### How To's

-

-How To's are tutorial-style content focused on a specific problem-solution. They usually have a beginner in mind (if not, they should indicate that they don't—put 'Advanced' or 'Deep-Dive', etc., in the title or introduction). How To's may include some explanatory text as asides, but they shouldn't be the majority of the content.

-

-#### Making a Doc Findable

-

-If you write it, will they read it? We think they will—if they can find it.

-

-After you've finished writing, step back for a moment and consider the word(s) or phrase(s) people will use to find what you just wrote. For example, let's say you were writing a doc about configuring a Redwood app. If you didn't know much about configuring a Redwood app, a heading (in the nav bar to the left) like "redwood.toml" wouldn't make much sense, even though it _is_ the main configuration file. You'd probably look for "Redwood Config" or "Settings", or type "how to change Redwood App settings" in the "Search the docs" bar up top, or in Google.

-

-That is to say, the most useful headings aren't always the most literal ones. Indexing is more than just underlining the "important" words in a text—it's identifying and locating the concepts and topics that are the most relevant to our readers, the users of our documentation.

-

-So, after you've finished writing, reread what you wrote with the intention of making a list of two to three keywords or phrases. Then, try to use each of those in three places, in this order of priority:

-

-- the left-nav menu title

-- the page title or the first right-nav ("On this page") section title

-- the introductory paragraph

-

-### Docs for Contributing to the Redwood Repo

-

-These docs are in the Framework repo, redwoodjs/redwood, and explain how to contribute to Redwood packages. They're the docs linked to in the table above.

-

-In general, they should consist of more straightforward explanations, can be technically heavy, and should be written for a more experienced audience. But as a best practice for collaborative projects, they should still provide a Vision + Roadmap and identify the project-point person(s) (or lead(s)).

-

-## What makes for a good Pull Request?

-In general, we don’t have a formal structure for PRs. Our goal is to make it as efficient as possible for anyone to open a PR. But there are some good practices, which are flexible. Just keep in mind that after opening a PR there’s more to do before getting to the finish line:

-1. Reviews from other contributors and maintainers

-2. Update code and, after maintainer approval, merge changes into the `main` branch

-3. Once the PR is merged, it will be released and added to the next version's Release Notes, with a link so anyone can look at the PR and understand it.

-

-Some tips and advice:

-- **Connect the dots and leave a breadcrumb**: link to related Issues, Forum discussions, etc. Help others follow the trail leading up to this PR.

-- **A Helpful Description**: What does the code in the PR do and what problem does it solve? How can someone use the code? Code sample, Screenshot, Quick Video… Any or all of this is so so good.

-- **Draft or Work in Progress**: You don’t have to finish the code to open a PR. Once you have a start, open it up! Most often the best way to move an Issue forward is to see the code in action. Also, often this helps identify ways forward before you spend a lot of time polishing.

-- **Questions, Items for Discussion, Etc.**: Another reason to open a Draft PR is to ask questions and get direction via review.

-- **Loop in a Maintainer for Feedback and Review**: ping someone with an `@`. And nudge again in a few days if there’s no reply. We appreciate it and truly don’t want the PR to get lost in the shuffle!

-- **Next Steps**: Once the PR is merged, will there be a follow up step? If so, link to an Issue. How about Docs to-do or Docs to-merge?

-

-The best thing you can do is look through existing PRs, which will give you a feel for how things work and what you think is helpful.

-

-### Example PR

-If you’re looking for an example of “what makes a good PR”, look no further than this one by Kim-Adeline:

-- [Convert component generator to TS #632](https://github.com/redwoodjs/redwood/pull/632)

-

-Not every PR needs this much information. But it’s definitely helpful when it does!

diff --git a/docs/versioned_docs/version-1.0/contributing-walkthrough.md b/docs/versioned_docs/version-1.0/contributing-walkthrough.md

deleted file mode 100644

index e0e186ea0768..000000000000

--- a/docs/versioned_docs/version-1.0/contributing-walkthrough.md

+++ /dev/null

@@ -1,234 +0,0 @@

-# Contributing: Step-by-Step Walkthrough (with Video)

-

-> ⚡️ **Quick Links**

->

-> There are several contributing docs and references, each covering specific topics:

->

-> 1. 🧭 [Overview and Orientation](contributing-overview.md)

-> 2. 📓 [Reference: Contributing to the Framework Packages](https://github.com/redwoodjs/redwood/blob/main/CONTRIBUTING.md)

-> 3. 🪜 **Step-by-step Walkthrough** (👈 you are here)

-> 4. 📈 [Current Project Status: v1 Release Board](https://github.com/orgs/redwoodjs/projects/6)

-> 5. 🤔 What should I work on?

-> - ["Help Wanted" v1 Triage Board](https://redwoodjs.com/good-first-issue)

-> - [Discovery Process and Open Issues](contributing-overview.md#what-should-i-work-on)

-

-

-## Video Recording of Complete Contributing Process

-The following recording is from a Contributing Workshop that walks through the exact steps outlined below. The Workshop includes additional topics along with Q&A discussion.

-

-

-

-## Prologue: Getting Started with Redwood and GitHub (and git)

-These are the foundations for contributing, which you should be familiar with before starting the walkthrough.

-

-[**The Redwood Tutorial**](tutorial/foreword.md)

-

-The best (and most fun) way to learn Redwood and the underlying tools and technologies.

-

-**Docs and How To**

-

-- Start with the [Introduction](https://github.com/redwoodjs/redwood/blob/main/README.md) Doc

-- And browse through [How To's](how-to/index)

-

-### GitHub (and Git)

-Diving into Git and the GitHub workflow can feel intimidating if you haven’t experienced it before. The good news is there’s a lot of great material to help you learn and be committing in no time!

-

-- [Introduction to GitHub](https://lab.github.com/githubtraining/introduction-to-github) (overview of concepts and workflow)

-- [First Day on GitHub](https://lab.github.com/githubtraining/first-day-on-github) (including Git)

-- [First Week on GitHub](https://lab.github.com/githubtraining/first-week-on-github) (parts 3 and 4 might be helpful)

-

-## The Full Workflow: From Local Development to a New PR

-

-### Definitions

-#### Redwood “Project”

-We refer to the codebase of a Redwood application as a Project. This is what you install when you run `yarn create redwood-app `. It’s the thing you are building with Redwood.

-

-Lastly, you’ll find the template used to create a new project (when you run create redwood-app) here on GitHub: [redwoodjs/redwood/packages/create-redwood-app/template/](https://github.com/redwoodjs/redwood/tree/main/packages/create-redwood-app/template)

-

-We refer to this as the **CRWA Template or Project Template**.

-

-#### Redwood “Framework”

-The Framework is the codebase containing all the packages (and other code) that is published on NPMjs.com as `@redwoodjs/`. The Framework repository on GitHub is here: [https://github.com/redwoodjs/redwood](https://github.com/redwoodjs/redwood)

-

-### Development tools

-These are the tools used and recommended by the Core Team.

-

-**VS Code**

-[Download VS Code](https://code.visualstudio.com/download)

-This has quickly become the de facto editor for JavaScript and TypeScript. Additionally, we have added recommended VS Code Extensions to use when developing both the Framework and a Project. You’ll see a pop-up window asking you about installing the extensions when you open up the code.

-

-**GitHub Desktop**

-[Download GitHub Desktop](https://desktop.github.com)

-You’ll need to be comfortable using Git at the command line. But the thing we like best about GitHub Desktop is how easy it makes the workflow across GitHub, GitHub Desktop, and VS Code. You don’t have to worry about syncing permissions or finding things. You can start from a repo on GitHub.com and use Desktop to do everything from “clone and open on your computer” to returning to the site to “open a PR on GitHub”.

-

-**[Mac OS] iTerm and Oh-My-Zsh**

-There’s nothing wrong with Terminal (on Mac) and bash. (If you’re on Windows, we highly recommend using Git for Windows and Git Bash.) But we enjoy using iTerm ([download](https://iterm2.com)) and Zsh much more (use [Oh My Zsh](https://ohmyz.sh)). Heads up, you can get lost in the world of theming and adding plugins. We recommend keeping it simple for a while before taking the customization deep dive

-😉

-

-**[Windows] Git for Windows with Git Bash or WSL(2)**

-Unfortunately, there are a lot of “gotchas” when it comes to working with JavaScript-based frameworks on Windows. We do our best to point out (and resolve) issues, but our priority is developing a Redwood app vs. contributing to the Framework. (If you’re interested, there’s a lengthy Forum conversation about this with many suggestions.)

-

-All that said, we highly recommend using one of the following setups to maximize your workflow:

-1. Use [Git for Windows and Git Bash](how-to/windows-development-setup.md) (included in installation)