|

| 1 | +{ |

| 2 | + "cells": [ |

| 3 | + { |

| 4 | + "cell_type": "markdown", |

| 5 | + "metadata": {}, |

| 6 | + "source": [ |

| 7 | + "# Bi-directional Recurrent Neural Network Example\n", |

| 8 | + "\n", |

| 9 | + "Build a bi-directional recurrent neural network (LSTM) with TensorFlow 2.0.\n", |

| 10 | + "\n", |

| 11 | + "- Author: Aymeric Damien\n", |

| 12 | + "- Project: https://github.com/aymericdamien/TensorFlow-Examples/" |

| 13 | + ] |

| 14 | + }, |

| 15 | + { |

| 16 | + "cell_type": "markdown", |

| 17 | + "metadata": {}, |

| 18 | + "source": [ |

| 19 | + "## BiRNN Overview\n", |

| 20 | + "\n", |

| 21 | + "<img src=\"https://ai2-s2-public.s3.amazonaws.com/figures/2016-11-08/191dd7df9cb91ac22f56ed0dfa4a5651e8767a51/1-Figure2-1.png\" alt=\"nn\" style=\"width: 600px;\"/>\n", |

| 22 | + "\n", |

| 23 | + "References:\n", |

| 24 | + "- [Long Short Term Memory](http://deeplearning.cs.cmu.edu/pdfs/Hochreiter97_lstm.pdf), Sepp Hochreiter & Jurgen Schmidhuber, Neural Computation 9(8): 1735-1780, 1997.\n", |

| 25 | + "\n", |

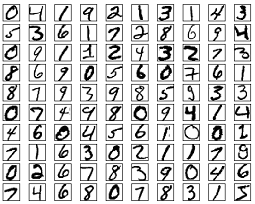

| 26 | + "## MNIST Dataset Overview\n", |

| 27 | + "\n", |

| 28 | + "This example uses the MNIST handwritten digits dataset, which contains 60,000 examples for training and 10,000 examples for testing. The digits have been size-normalized and centered in a fixed-size image (28x28 pixels), and pixel values are scaled to the range 0 to 1. Each image has 784 pixel values (28*28), which this example arranges as 28 rows of 28 pixels.\n", |

| 29 | + "\n", |

| 30 | + "\n", |

| 31 | + "\n", |

| 32 | + "To classify images using a recurrent neural network, we treat each image as a sequence of pixel rows. Because the MNIST image shape is 28*28px, every sample is one sequence of 28 timesteps, and each timestep is a row of 28 pixel values.\n", |

| 33 | + "\n", |

| 34 | + "More info: http://yann.lecun.com/exdb/mnist/" |

| 35 | + ] |

| 36 | + }, |

| 37 | + { |

| 38 | + "cell_type": "code", |

| 39 | + "execution_count": 1, |

| 40 | + "metadata": {}, |

| 41 | + "outputs": [], |

| 42 | + "source": [ |

| 43 | + "from __future__ import absolute_import, division, print_function\n", |

| 44 | + "\n", |

| 45 | + "# Import TensorFlow v2.\n", |

| 46 | + "import tensorflow as tf\n", |

| 47 | + "from tensorflow.keras import Model, layers\n", |

| 48 | + "import numpy as np" |

| 49 | + ] |

| 50 | + }, |

| 51 | + { |

| 52 | + "cell_type": "code", |

| 53 | + "execution_count": 2, |

| 54 | + "metadata": {}, |

| 55 | + "outputs": [], |

| 56 | + "source": [ |

| 57 | + "# MNIST dataset parameters.\n", |

| 58 | + "num_classes = 10 # total classes (0-9 digits).\n", |

| 59 | + "num_features = 784 # data features (img shape: 28*28).\n", |

| 60 | + "\n", |

| 61 | + "# Training Parameters\n", |

| 62 | + "learning_rate = 0.001\n", |

| 63 | + "training_steps = 1000\n", |

| 64 | + "batch_size = 32\n", |

| 65 | + "display_step = 100\n", |

| 66 | + "\n", |

| 67 | + "# Network Parameters\n", |

| 68 | + "# MNIST image shape is 28*28px, so every sample is a sequence of 28 timesteps with 28 features each.\n", |

| 69 | + "num_input = 28 # number of features per timestep (pixels in one image row).\n", |

| 70 | + "timesteps = 28 # timesteps (image rows).\n", |

| 71 | + "num_units = 32 # number of neurons for the LSTM layer." |

| 72 | + ] |

| 73 | + }, |

| 74 | + { |

| 75 | + "cell_type": "code", |

| 76 | + "execution_count": 3, |

| 77 | + "metadata": {}, |

| 78 | + "outputs": [], |

| 79 | + "source": [ |

| 80 | + "# Prepare MNIST data.\n", |

| 81 | + "from tensorflow.keras.datasets import mnist\n", |

| 82 | + "(x_train, y_train), (x_test, y_test) = mnist.load_data()\n", |

| 83 | + "# Convert to float32.\n", |

| 84 | + "x_train, x_test = np.array(x_train, np.float32), np.array(x_test, np.float32)\n", |

| 85 | + "# Reshape images to sequences of 28 timesteps with 28 features each.\n", |

| 86 | + "x_train, x_test = x_train.reshape([-1, timesteps, num_input]), x_test.reshape([-1, timesteps, num_input])\n", |

| 87 | + "# Normalize images value from [0, 255] to [0, 1].\n", |

| 88 | + "x_train, x_test = x_train / 255., x_test / 255." |

| 89 | + ] |

| 90 | + }, |

| 91 | + { |

| 92 | + "cell_type": "code", |

| 93 | + "execution_count": 4, |

| 94 | + "metadata": {}, |

| 95 | + "outputs": [], |

| 96 | + "source": [ |

| 97 | + "# Use tf.data API to shuffle and batch data.\n", |

| 98 | + "train_data = tf.data.Dataset.from_tensor_slices((x_train, y_train))\n", |

| 99 | + "train_data = train_data.repeat().shuffle(5000).batch(batch_size).prefetch(1)" |

| 100 | + ] |

| 101 | + }, |

| 102 | + { |

| 103 | + "cell_type": "code", |

| 104 | + "execution_count": 5, |

| 105 | + "metadata": {}, |

| 106 | + "outputs": [], |

| 107 | + "source": [ |

| 108 | + "# Create LSTM Model.\n", |

| 109 | + "class BiRNN(Model):\n", |

| 110 | + "    # Set layers.\n", |

| 111 | + "    def __init__(self):\n", |

| 112 | + "        super(BiRNN, self).__init__()\n", |

| 113 | + "        # Define 2 LSTM layers for forward and backward sequences.\n", |

| 114 | + "        lstm_fw = layers.LSTM(units=num_units)\n", |

| 115 | + "        lstm_bw = layers.LSTM(units=num_units, go_backwards=True)\n", |

| 116 | + "        # BiRNN layer.\n", |

| 117 | + "        self.bi_lstm = layers.Bidirectional(lstm_fw, backward_layer=lstm_bw)\n", |

| 118 | + "        # Output layer (num_classes).\n", |

| 119 | + "        self.out = layers.Dense(num_classes)\n", |

| 120 | + "\n", |

| 121 | + "    # Set forward pass.\n", |

| 122 | + "    def call(self, x, is_training=False):\n", |

| 123 | + "        x = self.bi_lstm(x)\n", |

| 124 | + "        x = self.out(x)\n", |

| 125 | + "        if not is_training:\n", |

| 126 | + "            # TF cross-entropy expects logits without softmax, so only\n", |

| 127 | + "            # apply softmax when not training.\n", |

| 128 | + "            x = tf.nn.softmax(x)\n", |

| 129 | + "        return x\n", |

| 130 | + "\n", |

| 131 | + "# Build LSTM model.\n", |

| 132 | + "birnn_net = BiRNN()" |

| 133 | + ] |

| 134 | + }, |

| 135 | + { |

| 136 | + "cell_type": "code", |

| 137 | + "execution_count": 6, |

| 138 | + "metadata": {}, |

| 139 | + "outputs": [], |

| 140 | + "source": [ |

| 141 | + "# Cross-Entropy Loss.\n", |

| 142 | + "# Note that this will apply 'softmax' to the logits.\n", |

| 143 | + "def cross_entropy_loss(x, y):\n", |

| 144 | + "    # Convert labels to int64 for the TF cross-entropy function.\n", |

| 145 | + "    y = tf.cast(y, tf.int64)\n", |

| 146 | + "    # Apply softmax to logits and compute cross-entropy.\n", |

| 147 | + "    loss = tf.nn.sparse_softmax_cross_entropy_with_logits(labels=y, logits=x)\n", |

| 148 | + "    # Average loss across the batch.\n", |

| 149 | + "    return tf.reduce_mean(loss)\n", |

| 150 | + "\n", |

| 151 | + "# Accuracy metric.\n", |

| 152 | + "def accuracy(y_pred, y_true):\n", |

| 153 | + "    # Predicted class is the index of highest score in prediction vector (i.e. argmax).\n", |

| 154 | + "    correct_prediction = tf.equal(tf.argmax(y_pred, 1), tf.cast(y_true, tf.int64))\n", |

| 155 | + "    return tf.reduce_mean(tf.cast(correct_prediction, tf.float32), axis=-1)\n", |

| 156 | + "\n", |

| 157 | + "# Adam optimizer.\n", |

| 158 | + "optimizer = tf.optimizers.Adam(learning_rate)" |

| 159 | + ] |

| 160 | + }, |

| 161 | + { |

| 162 | + "cell_type": "code", |

| 163 | + "execution_count": 7, |

| 164 | + "metadata": {}, |

| 165 | + "outputs": [], |

| 166 | + "source": [ |

| 167 | + "# Optimization process. \n", |

| 168 | + "def run_optimization(x, y):\n", |

| 169 | + "    # Wrap computation inside a GradientTape for automatic differentiation.\n", |

| 170 | + "    with tf.GradientTape() as g:\n", |

| 171 | + "        # Forward pass.\n", |

| 172 | + "        pred = birnn_net(x, is_training=True)\n", |

| 173 | + "        # Compute loss.\n", |

| 174 | + "        loss = cross_entropy_loss(pred, y)\n", |

| 175 | + "\n", |

| 176 | + "    # Variables to update, i.e. trainable variables.\n", |

| 177 | + "    trainable_variables = birnn_net.trainable_variables\n", |

| 178 | + "\n", |

| 179 | + "    # Compute gradients.\n", |

| 180 | + "    gradients = g.gradient(loss, trainable_variables)\n", |

| 181 | + "\n", |

| 182 | + "    # Update W and b following gradients.\n", |

| 183 | + "    optimizer.apply_gradients(zip(gradients, trainable_variables))" |

| 184 | + ] |

| 185 | + }, |

| 186 | + { |

| 187 | + "cell_type": "code", |

| 188 | + "execution_count": 8, |

| 189 | + "metadata": {}, |

| 190 | + "outputs": [ |

| 191 | + { |

| 192 | + "name": "stdout", |

| 193 | + "output_type": "stream", |

| 194 | + "text": [ |

| 195 | + "step: 100, loss: 1.306422, accuracy: 0.625000\n", |

| 196 | + "step: 200, loss: 0.973236, accuracy: 0.718750\n", |

| 197 | + "step: 300, loss: 0.673558, accuracy: 0.781250\n", |

| 198 | + "step: 400, loss: 0.439304, accuracy: 0.875000\n", |

| 199 | + "step: 500, loss: 0.303866, accuracy: 0.906250\n", |

| 200 | + "step: 600, loss: 0.414652, accuracy: 0.875000\n", |

| 201 | + "step: 700, loss: 0.241098, accuracy: 0.937500\n", |

| 202 | + "step: 800, loss: 0.204522, accuracy: 0.875000\n", |

| 203 | + "step: 900, loss: 0.398520, accuracy: 0.843750\n", |

| 204 | + "step: 1000, loss: 0.217469, accuracy: 0.937500\n" |

| 205 | + ] |

| 206 | + } |

| 207 | + ], |

| 208 | + "source": [ |

| 209 | + "# Run training for the given number of steps.\n", |

| 210 | + "for step, (batch_x, batch_y) in enumerate(train_data.take(training_steps), 1):\n", |

| 211 | + "    # Run the optimization to update W and b values.\n", |

| 212 | + "    run_optimization(batch_x, batch_y)\n", |

| 213 | + "\n", |

| 214 | + "    if step % display_step == 0:\n", |

| 215 | + "        pred = birnn_net(batch_x, is_training=True)\n", |

| 216 | + "        loss = cross_entropy_loss(pred, batch_y)\n", |

| 217 | + "        acc = accuracy(pred, batch_y)\n", |

| 218 | + "        print(\"step: %i, loss: %f, accuracy: %f\" % (step, loss, acc))" |

| 219 | + ] |

| 220 | + } |

| 221 | + ], |

| 222 | + "metadata": { |

| 223 | + "kernelspec": { |

| 224 | + "display_name": "Python 2", |

| 225 | + "language": "python", |

| 226 | + "name": "python2" |

| 227 | + }, |

| 228 | + "language_info": { |

| 229 | + "codemirror_mode": { |

| 230 | + "name": "ipython", |

| 231 | + "version": 2 |

| 232 | + }, |

| 233 | + "file_extension": ".py", |

| 234 | + "mimetype": "text/x-python", |

| 235 | + "name": "python", |

| 236 | + "nbconvert_exporter": "python", |

| 237 | + "pygments_lexer": "ipython2", |

| 238 | + "version": "2.7.15" |

| 239 | + } |

| 240 | + }, |

| 241 | + "nbformat": 4, |

| 242 | + "nbformat_minor": 2 |

| 243 | +} |
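
The notebook's comments note that the cross-entropy function applies softmax itself, which is why the model returns raw logits while training. The sketch below illustrates that point in plain Python, without TensorFlow. The helper names `softmax` and `sparse_softmax_cross_entropy` and the example scores are hypothetical and exist only for this illustration; they are not the TensorFlow implementation.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of raw scores."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sparse_softmax_cross_entropy(logits, label):
    """Cross-entropy for one example, given raw logits and an integer class label.

    Computed as log-sum-exp(logits) - logits[label], which is the same as
    -log(softmax(logits)[label]) but never forms the probabilities explicitly.
    """
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_sum_exp - logits[label]


# Hypothetical raw scores for a 3-class problem; the true class is 0.
logits = [2.0, 1.0, 0.1]
label = 0

probs = softmax(logits)
loss = sparse_softmax_cross_entropy(logits, label)

# Passing probabilities instead of logits applies softmax a second time,
# which flattens the distribution and yields a different loss value.
double_softmax_loss = sparse_softmax_cross_entropy(probs, label)

print("loss from logits:        %f" % loss)
print("loss from probabilities: %f" % double_softmax_loss)
```

The predicted class is unaffected by this choice, since softmax preserves the ordering of the scores. That is why the training loop can compute accuracy with `argmax` directly on the logits.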
