⭐️ Our series works: [MMStar] [ShareGPT4Video] [ShareGPT4Omni]

🚀🚀🚀 Official implementation of ShareGPT4V: Improving Large Multi-modal Models with Better Captions in ECCV 2024.

-

Authors: Lin Chen*, Jinsong Li*, Xiaoyi Dong, Pan Zhang, Conghui He, Jiaqi Wang, Feng Zhao📧, Dahua Lin📧

-

Institutes: University of Science and Technology of China; Shanghai AI Laboratory

-

Resources: [Paper] [Project Page] [

ShareGPT4V Dataset]

ShareGPT4V Dataset] -

Models: [ShareGPT4V-7B] [ShareCaptioner]

-

ShareGPT4V-7B Demo [OpenXLab] [🤗HuggingFace] [Colab]

-

Share-Captioner Demo [OpenXlab] [🤗HuggingFace]

- 🔥 A large-scale highly descriptive image-text dataset

- 🔥 100K GPT4-Vision-generated captions, 1.2M high-quality captions

- 🔥 A general image captioner, approaching GPT4-Vision's caption capability.

- 🔥 A superior large multi-modal model, ShareGPT4V-7B

[2024/7/2] Happy to announce that ShareGPT4V is accepted by ECCV 2024!

[2024/5/8] We released ShareGPT4Video, a large-scale video-caption dataset, with 40K captions annotated by GPT4V and 4.8M captions annotated by our ShareCaptioner-Video. The total videos last with 300 hours and 3000 hours separately!

[2024/4/1] We released an elite vision-indispensable multi-modal benchmark, MMStar. Have fun!🚀

[2023/12/14] We released the ShareGPT4V-13B model. Have fun!🚀

[2023/12/13] Training and evaluation code is available.

[2023/12/13] Local ShareCaptioner is available now! You can utilize it to generate high-quality captions for your dataset with batch inference by directly run tools/share-cap_batch_infer.py.

[2023/11/23] We release the web demo of general Share-Captioner!💥

[2023/11/23] We release code to build your local demo of ShareGPT4V-7B!💥

[2023/11/22] Web demo and checkpoint are available now!💥

[2023/11/21] ShareGPT4V Dataset is available now!💥

[2023/11/20] The paper and project page are released!

- Training and evaluation code for ShareGPT4V-7B

- Local ShareCaptioner

- Web demo and local demo of ShareGPT4V-7B

- Checkpoints of ShareGPT4V-7B

See more details in ModelZoo.md.

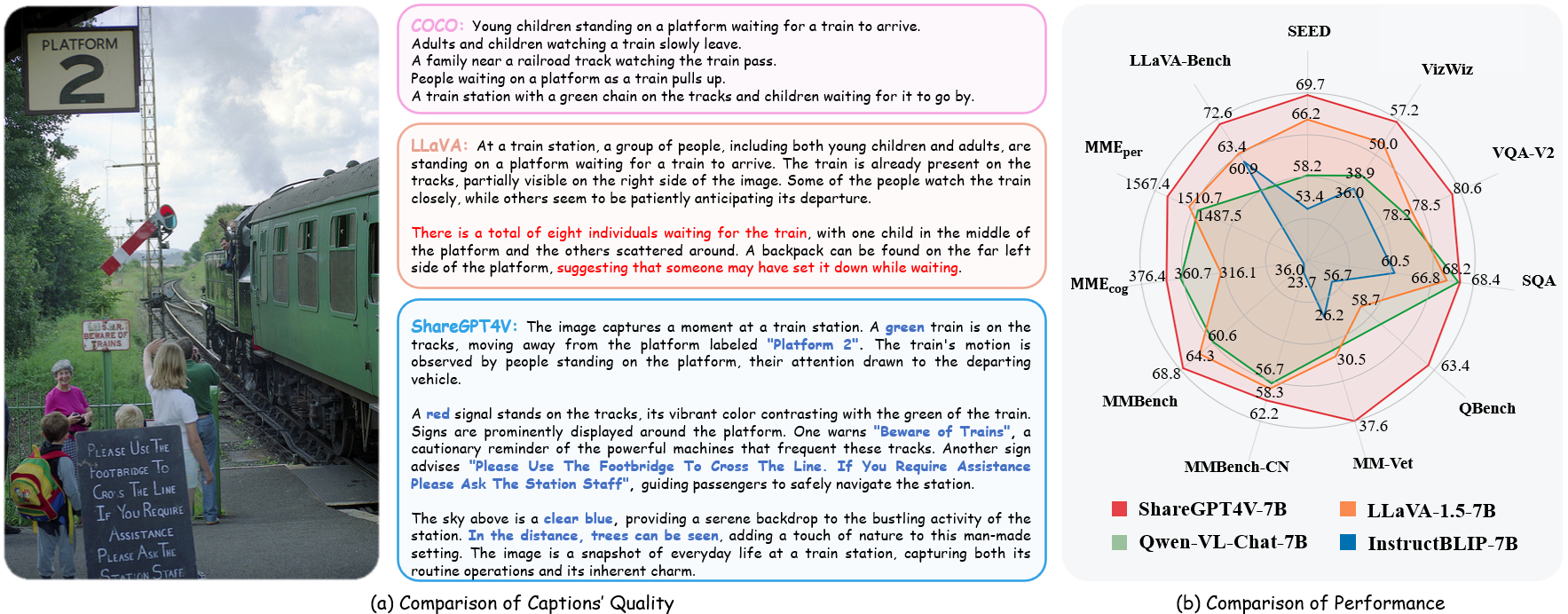

| Name | LLM | Checkpoint | LLaVA-Bench-Wild | MME-perception | MME-cognition | MMBench | MMBench-CN | SEED-image | MM-Vet | QBench | SQA-image | VQA-v2 | VizWiz | GQA | TextVQA |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| ShareGPT4V-7B | Vicuna-7B | ShareGPT4V-7B | 72.6 | 1567.4 | 376.4 | 68.8 | 62.2 | 69.7 | 37.6 | 63.4 | 68.4 | 80.6 | 57.2 | 63.3 | 60.4 |

| ShareGPT4V-13B | Vicuna-13B | ShareGPT4V-13B | 79.9 | 1618.7 | 303.2 | 68.5 | 63.7 | 70.8 | 43.1 | 65.2 | 71.2 | 81.0 | 55.6 | 64.8 | 62.2 |

Example Code

from share4v.model.builder import load_pretrained_model

from share4v.mm_utils import get_model_name_from_path

from share4v.eval.run_share4v import eval_model

model_path = "Lin-Chen/ShareGPT4V-7B"

tokenizer, model, image_processor, context_len = load_pretrained_model(

model_path=model_path,

model_base=None,

model_name=get_model_name_from_path(model_path)

)Check out the details wth the load_pretrained_model function in share4v/model/builder.py.

You can also use the eval_model function in share4v/eval/run_llava.py to get the output easily. By doing so, you can use this code on Colab directly after downloading this repository.

model_path = "Lin-Chen/ShareGPT4V-7B"

prompt = "What is the most common catchphrase of the character on the right?"

image_file = "examples/breaking_bad.png"

args = type('Args', (), {

"model_path": model_path,

"model_base": None,

"model_name": get_model_name_from_path(model_path),

"query": prompt,

"conv_mode": None,

"image_file": image_file,

"sep": ",",

"temperature": 0,

"top_p": None,

"num_beams": 1,

"max_new_tokens": 512

})()

eval_model(args)git clone https://github.com/InternLM/InternLM-XComposer --depth=1

cd projects/ShareGPT4V

conda create -n share4v python=3.10 -y

conda activate share4v

pip install --upgrade pip

pip install -e .

pip install -e ".[train]"

pip install flash-attn --no-build-isolationYou can build your local demo by:

# run script

python tools/app.py

You should follow this instruction Data.md to manage the datasets. Currently, we provide direct download access to the web data. However, to avoid potential disputes, we plan to release URLs for these datasets rather than the raw data in the near future.

ShareGPT4V model training consists of two stages: (1) feature alignment stage: use our ShareGPT4V-PT dataset with 1.2M ShareCaptioner-generated high-quality image-text pairs to finetune the vision encoder, projector, and the LLM to align the textual and visual modalities; (2) visual instruction tuning stage: finetune the projector and LLM to teach the model to follow multimodal instructions.

To train on fewer GPUs, you can reduce the per_device_train_batch_size and increase the gradient_accumulation_steps accordingly. Always keep the global batch size the same: per_device_train_batch_size x gradient_accumulation_steps x num_gpus.

We use a similar set of hyperparameters as ShareGPT4V-7B in finetuning. Both hyperparameters used in pretraining and finetuning are provided below.

- Pretraining

| Hyperparameter | Global Batch Size | Learning rate | Epochs | Max length | Weight decay |

|---|---|---|---|---|---|

| ShareGPT4V-7B | 256 | 2e-5 | 1 | 2048 | 0 |

- Finetuning

| Hyperparameter | Global Batch Size | Learning rate | Epochs | Max length | Weight decay |

|---|---|---|---|---|---|

| ShareGPT4V-7B | 128 | 2e-5 | 1 | 2048 | 0 |

First, you should download the MLP projector pretrained by LLaVA-1.5 with LAION-CC-SBU-558K. Because a rough modality alignment process is beneficial before using high quality detailed captions for modality alignment.

You can run projects/ShareGPT4V/scripts/sharegpt4v/slurm_pretrain_7b.sh to pretrain the model. Remember to specify the projector path in the script. In this stage, we fine-tuned the second half of the vision encoder's blocks, projector, and LLM.

In our setup we used 16 A100 (80G) GPUs and the whole pre-training process lasted about 12 hours. You can adjust the number of gradient accumulation steps to reduce the number of GPUs.

In this stage, we finetune the projector and LLM with sharegpt4v_mix665k_cap23k_coco-ap9k_lcs3k_sam9k_div2k.json.

You can run projects/ShareGPT4V/scripts/sharegpt4v/slurm_finetune_7b.sh to finetune the model.

In our setup we used 16 A100 (80G) GPUs and the whole pre-training process lasted about 7 hours. You can adjust the number of gradient accumulation steps to reduce the number of GPUs.

To ensure the reproducibility, we evaluate the models with greedy decoding. We do not evaluate using beam search to make the inference process consistent with the chat demo of real-time outputs.

See Evaluation.md.

- LLaVA: the codebase we built upon. Thanks for their wonderful work.

- Vicuna: the amazing open-sourced large language model!

If you find our work helpful for your research, please consider giving a star ⭐ and citation 📝

@article{chen2023sharegpt4v,

title={ShareGPT4V: Improving Large Multi-Modal Models with Better Captions},

author={Chen, Lin and Li, Jisong and Dong, Xiaoyi and Zhang, Pan and He, Conghui and Wang, Jiaqi and Zhao, Feng and Lin, Dahua},

journal={arXiv preprint arXiv:2311.12793},

year={2023}

}

@article{chen2024sharegpt4video,

title={ShareGPT4Video: Improving Video Understanding and Generation with Better Captions},

author={Chen, Lin and Wei, Xilin and Li, Jinsong and Dong, Xiaoyi and Zhang, Pan and Zang, Yuhang and Chen, Zehui and Duan, Haodong and Lin, Bin and Tang, Zhenyu and others},

journal={arXiv preprint arXiv:2406.04325},

year={2024}

}

@article{chen2024we,

title={Are We on the Right Way for Evaluating Large Vision-Language Models?},

author={Chen, Lin and Li, Jinsong and Dong, Xiaoyi and Zhang, Pan and Zang, Yuhang and Chen, Zehui and Duan, Haodong and Wang, Jiaqi and Qiao, Yu and Lin, Dahua and others},

journal={arXiv preprint arXiv:2403.20330},

year={2024}

}